Introduction to the Knowledge Gap in Artificial Intelligence

The rapid proliferation of large language models has fundamentally altered the landscape of enterprise automation, software engineering, and digital workflows. However, as artificial intelligence transitions from generative, text-based chatbots to autonomous, agentic systems capable of executing complex tasks, a critical structural limitation has become increasingly apparent: the knowledge gap. Modern artificial intelligence models, despite their immense parameter counts and sophisticated reasoning capabilities, are inherently static by design. They are frozen in time at the precise moment their training data collection concludes. In fast-paced ecosystems such as software development, clinical medicine, and financial regulations, this static nature becomes a severe operational liability. Technologies evolve daily, application programming interfaces (APIs) are deprecated, and compliance frameworks are rewritten, leaving foundation models ill-equipped to handle real-world, real-time tasks without external intervention.

When deployed in production environments, this knowledge gap manifests as a high propensity for hallucinations, suggestions of outdated software development kits (SDKs), and a general inability to adhere to current enterprise standards. To circumvent this limitation, developers have historically relied on monolithic system prompts, injecting massive amounts of instructional data directly into the model’s context window. This brute-force style of prompt engineering is computationally expensive, rigid, and prone to “context rot,” a phenomenon in which the model’s performance degrades as the context window becomes oversaturated with disparate instructions.

The industry has recognized that relying on a static model for dynamic operational tasks is unsustainable, because no retraining schedule can keep pace with APIs and regulations that change daily. The solution lies not merely in training increasingly larger models, but in fundamentally altering the architecture of artificial intelligence interaction. This architectural evolution introduces a “knowledge middleware layer” that sits between the user’s prompt and the model’s inference engine. At the core of this middleware layer is the concept of “Agent Skills”: a shift from a probabilistic system that guesses answers based on latent neural pathways toward a grounded, more predictable system that fetches, reads, and executes based on verified, current sources of truth.

Deconstructing Agent Skills: The New Paradigm of Procedural Knowledge

Agent Skills represent a sophisticated, open-standard methodology for extending the capabilities of artificial intelligence agents with specialized, domain-specific knowledge and repeatable workflows. Originally incubated by Anthropic and subsequently released as an open standard, Agent Skills are portable, version-controlled, and highly reusable capability packages. They function as the procedural memory of an artificial intelligence agent, dictating exactly how a complex task should be approached, orchestrated, and executed according to strict organizational conventions.

Unlike traditional prompt engineering, which relies on ephemeral, session-based instructions that must be repeated in every interaction, Agent Skills encapsulate procedural logic, workflows, and task-specific parameters into a structured, filesystem-based format that an agent can discover and invoke autonomously. This transforms the model from a generalist engine into a highly specialized operational tool.

The Architecture of Progressive Disclosure

The most profound technical innovation introduced by the Agent Skills standard is the mechanism of progressive disclosure. Because large language models possess finite context windows, overwhelming them with every available enterprise procedure simultaneously is inefficient and degrades reasoning quality. Agent Skills solve the context problem by loading knowledge in a tiered, on-demand fashion, ensuring the model only consumes tokens for the exact information it requires at a given moment.

The architecture of an Agent Skill is typically file-based, relying on a strict directory structure that an agent can navigate using standard filesystem commands. This structure utilizes a three-stage loading process to optimize token consumption and maintain high reasoning fidelity.

| Loading Stage | Content Type | Operational Function | Context Window Impact |
| --- | --- | --- | --- |
| Level 1: Advertise | Metadata (YAML Frontmatter) | Provides the skill name, dependencies, and a brief description. Injected into the system prompt so the agent is aware of available capabilities for semantic routing. | Very Low (~100 tokens per skill). |
| Level 2: Load | Instructions (SKILL.md) | Loaded only when the agent determines the skill is relevant to the current task. Contains step-by-step procedural logic, workflows, and compliance rules. | Medium (Dynamically loaded upon trigger, typically < 5000 tokens). |
| Level 3: Read | Resources and Executable Code | Accessed strictly on demand via tool calls. Includes supplementary reference files, templates, massive API documentation databases, or deterministic scripts. | Variable (Only consumes tokens if explicitly read or executed by the agent). |
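The token figures in the table can be turned into a rough budget comparison. The sketch below uses the table's approximate values (about 100 tokens of metadata per skill, up to 5000 tokens per SKILL.md body); the skill counts are hypothetical and chosen only for illustration.

```python
# Approximate figures from the loading-stage table; real skills will vary.
METADATA_TOKENS_PER_SKILL = 100      # Level 1: always in the system prompt
INSTRUCTION_TOKENS_PER_SKILL = 5000  # Level 2: upper bound for one SKILL.md body


def eager_cost(num_skills: int) -> int:
    """Tokens consumed if every SKILL.md body is loaded up front."""
    return num_skills * INSTRUCTION_TOKENS_PER_SKILL


def progressive_cost(num_skills: int, num_triggered: int) -> int:
    """Tokens consumed when metadata is always loaded and bodies load on trigger."""
    return (num_skills * METADATA_TOKENS_PER_SKILL
            + num_triggered * INSTRUCTION_TOKENS_PER_SKILL)


if __name__ == "__main__":
    # Hypothetical deployment: 50 installed skills, one triggered by the task.
    print(eager_cost(50))           # 250000
    print(progressive_cost(50, 1))  # 10000
```

Under these assumptions the progressive layout costs a small fraction of the eager one, and the gap widens as more skills are installed but left untriggered.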

This progressive architecture mirrors human expert behavior. A legal professional or senior software engineer does not hold an entire technical manual or legal code in their active working memory; rather, they consult the index, identify the relevant chapter, and extract the necessary procedural steps precisely when required. By adopting this filesystem-based model, Agent Skills allow developers to bundle massive amounts of reference material, scripts, and compliance checklists without incurring a context penalty, as the agent only “checks out” the specific knowledge required for the immediate micro-task.
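The first two loading stages can be sketched in a few lines of Python. This is a minimal illustration, not the reference implementation of the standard: the `pr-review` skill, its contents, and the function names are all hypothetical, and the frontmatter parser handles only flat `key: value` pairs rather than full YAML.

```python
import tempfile
from pathlib import Path

# Hypothetical example skill; the name, description, and steps are invented.
DEMO_SKILL_MD = """---
name: pr-review
description: Checklist for reviewing pull requests.
---
# PR review

1. Run the linter.
2. Confirm tests were added.
"""


def parse_frontmatter(text: str) -> tuple[dict[str, str], str]:
    """Split SKILL.md text into (metadata, body). Flat key: value pairs only."""
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}, text
    meta: dict[str, str] = {}
    for i, line in enumerate(lines[1:], start=1):
        if line.strip() == "---":
            return meta, "\n".join(lines[i + 1:]).strip()
        key, sep, value = line.partition(":")
        if sep:
            meta[key.strip()] = value.strip()
    return {}, text  # no closing delimiter: treat the whole file as body


def advertise(skills_root: Path) -> list[dict[str, str]]:
    """Level 1: collect only name and description for the system prompt."""
    catalog = []
    for skill_file in sorted(skills_root.glob("*/SKILL.md")):
        meta, _ = parse_frontmatter(skill_file.read_text(encoding="utf-8"))
        catalog.append({
            "name": meta.get("name", skill_file.parent.name),
            "description": meta.get("description", ""),
        })
    return catalog


def load_instructions(skills_root: Path, name: str) -> str:
    """Level 2: return the instruction body only once the skill is triggered."""
    for skill_file in sorted(skills_root.glob("*/SKILL.md")):
        meta, body = parse_frontmatter(skill_file.read_text(encoding="utf-8"))
        if meta.get("name", skill_file.parent.name) == name:
            return body
    raise KeyError(f"no skill named {name!r}")


def make_demo_skill(skills_root: Path) -> None:
    """Write the hypothetical demo skill to disk."""
    skill_dir = skills_root / "pr-review"
    skill_dir.mkdir(parents=True)
    (skill_dir / "SKILL.md").write_text(DEMO_SKILL_MD, encoding="utf-8")


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        make_demo_skill(root)
        print(advertise(root))                       # cheap, always loaded
        print(load_instructions(root, "pr-review"))  # loaded on demand
```

Only the output of `advertise` would be placed in the system prompt; the body returned by `load_instructions` enters the context when the agent decides the skill is relevant.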

Furthermore, skills can include executable code, such as Bash, Python, or JavaScript scripts, located in a dedicated scripts/ directory. The agent runs these scripts rather than reading them, so the script’s code never loads into the artificial intelligence’s context window; only the script’s output consumes tokens. This allows skills to bundle reliable, deterministic operational logic without a context penalty.
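A minimal sketch of that execution path follows, assuming the host runs bundled Python scripts as subprocesses. The helper name `run_skill_script` and the word-count script are hypothetical; a production host would also sandbox the process.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

# Hypothetical bundled script: prints the number of words in its first argument.
DEMO_SCRIPT = "import sys\nprint(len(sys.argv[1].split()))\n"


def run_skill_script(script: Path, *args: str, timeout: float = 30.0) -> str:
    """Execute a bundled script and return only its stdout.

    The script source is never read into the prompt; the caller sees output only.
    """
    result = subprocess.run(
        [sys.executable, str(script), *args],
        capture_output=True,
        text=True,
        timeout=timeout,
        check=True,  # raise CalledProcessError on a non-zero exit code
    )
    return result.stdout.strip()


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        script = Path(tmp) / "word_count.py"
        script.write_text(DEMO_SCRIPT, encoding="utf-8")
        print(run_skill_script(script, "one two three"))  # 3
```

The string returned by `run_skill_script` is the only part that would be appended to the agent's context.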

The Agentic Stack: Reconciling Skills, MCP, and RAG

As the agentic artificial intelligence ecosystem matures, a unified stack of open protocols is emerging. The proliferation of terminology has led to market confusion, particularly regarding the overlapping roles of Agent Skills, the Model Context Protocol (MCP), and Retrieval-Augmented Generation (RAG). A precise technical distinction between these three paradigms is critical for enterprise architects tasked with building scalable, autonomous systems.

Retrieval-Augmented Generation: The Discovery Layer

Retrieval-Augmented Generation focuses purely on data discovery and semantic search. It solves the static knowledge problem by embedding vast quantities of unstructured enterprise data into vector databases, allowing the model to retrieve relevant passages to ground its responses. RAG is highly effective for interactive problem-solving and information retrieval, acting as the “researcher” of the agentic stack. However, traditional RAG systems retrieve text fragments based on semantic similarity; they lack the inherent capacity to execute actions, follow strict multi-step procedures, or interact with external software systems.
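The retrieval step can be illustrated with a toy example. The sketch below substitutes bag-of-words counts for learned embeddings and a Python list for a vector database, so it shows the ranking idea only; the passages and query are invented.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def retrieve(query: str, passages: list[str], k: int = 1) -> list[str]:
    """Return the k passages most similar to the query."""
    q = embed(query)
    ranked = sorted(passages, key=lambda p: cosine(q, embed(p)), reverse=True)
    return ranked[:k]


if __name__ == "__main__":
    corpus = [
        "The v1 payments API was deprecated in March.",
        "Office plants should be watered weekly.",
        "Expense reports are due on the last Friday of the month.",
    ]
    print(retrieve("Which payments API is deprecated?", corpus))
```

Note that `retrieve` returns text and nothing else: it cannot call the payments API or follow a migration procedure, which is the limitation described above.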

Model Context Protocol: The Sensory and Actuation Interface

The Model Context Protocol, launched in November 2024 and maintained by the Agentic AI Foundation, standardizes the communication interface between an artificial intelligence system and external data sources. Utilizing a JSON-RPC 2.0 protocol, MCP acts as a universal connector—frequently described by software engineers as the “USB-C for artificial intelligence applications”. MCP solves the connectivity problem. It allows developers to build a single server that exposes databases, enterprise applications, and internal APIs to any compliant agent framework without writing custom integration code for every new model. If an agent requires access to a Jira ticketing system, a Snowflake database, or a Slack workspace, the MCP server provides the secure, standardized pathway. In the anatomy of an agent, MCP provides the “hands” and sensory interfaces to interact with the external world.
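To make the wire format concrete, the sketch below builds a JSON-RPC 2.0 request for the MCP `tools/call` method and unpacks a response. It covers message construction only, with no transport or session handshake; the tool name `create_ticket` and its arguments are hypothetical.

```python
import itertools
import json

_request_ids = itertools.count(1)  # JSON-RPC requests carry a unique id


def jsonrpc_request(method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
        "params": params,
    })


def tool_call(tool_name: str, arguments: dict) -> str:
    """Build an MCP tools/call request for a named tool."""
    return jsonrpc_request("tools/call", {"name": tool_name, "arguments": arguments})


def parse_response(raw: str) -> dict:
    """Return the result of a JSON-RPC 2.0 response, or raise on an error object."""
    msg = json.loads(raw)
    if "error" in msg:
        err = msg["error"]
        raise RuntimeError(f"JSON-RPC error {err.get('code')}: {err.get('message')}")
    return msg["result"]


if __name__ == "__main__":
    # Hypothetical tool exposed by a ticketing MCP server.
    print(tool_call("create_ticket", {"title": "Rotate API key", "priority": "high"}))
```

Because every compliant server accepts the same envelope, swapping the ticketing system for a database or chat workspace changes the tool name and arguments but not the client code.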

Agent Skills: The Procedural Orchestrator

Agent Skills, conversely, solve the procedural problem. If MCP provides the hands and the tools, Agent Skills provide the brain, the blueprint, and the operational recipes. A skill dictates the exact sequence of tool invocations, the logic required to evaluate the output, and the organizational standards that must be adhered to during execution. Skills provide internal know-how, ensuring that when an agent is granted access to a tool via MCP, it uses that tool competently and predictably.

| Architectural Layer | Core Function | Technical Implementation | Enterprise Application |
| --- | --- | --- | --- |
| Retrieval-Augmented Generation (RAG) | Knowledge Discovery | Vector databases, embeddings, similarity search algorithms. | Querying unstructured proprietary enterprise data; interactive research. |
| Model Context Protocol (MCP) | Tool Connectivity and Access | Client-Server architecture utilizing JSON-RPC 2.0 over standard transports. | Real-time integration with external systems, APIs, and enterprise software (e.g., Salesforce, GitHub). |
| Agent Skills | Procedural Orchestration | File-based directories (SKILL.md) utilizing progressive disclosure. | Enforcing repeatable workflows, coding standards, and deterministic step-by-step execution logic. |

These technologies are fundamentally complementary, representing a classic separation of concerns within software architecture. A highly mature agentic system will utilize Agentic RAG to research a complex server error, consult an Agent Skill to load the specific standard operating procedure for remediation, and leverage an MCP server to safely execute the necessary bash scripts and update the incident management tracking software. The convergence of these open protocols is rapidly becoming the foundational blueprint for enterprise artificial intelligence architecture, ensuring that systems are not only capable of acting, but acting correctly according to established governance.

Frameworks for Enterprise Implementation: Google Agent Development Kit

The drive to close the knowledge gap and build scalable multi-agent systems has spurred the development of robust, open-source frameworks designed to orchestrate these complex interactions. Google’s Agent Development Kit (ADK) represents a significant advancement in this domain, providing a structured, code-first environment for deploying agentic architectures.

Google’s Agent Development Kit is an open-source, model-agnostic framework available in Python, TypeScript, Go, and Java. Designed to treat agent creation as rigorous software development rather than mere prompt engineering, ADK provides the orchestration layer necessary to manage complex systems. It handles the internal logic, decision-making loops, hierarchical agent routing, and state management required for an agent to process a task from inception to completion. ADK introduces native support for integrating both MCP servers via the McpToolset primitive and Agent Skills, allowing developers to dynamically modify skills within the agent’s code using specialized model classes or to rely on standard file-based definitions.

The framework is tightly integrated into the Google Cloud ecosystem, offering seamless connectivity to BigQuery, Cloud Storage, Vertex AI, and Cloud Run. Through the Google Cloud API Registry, developers can utilize fully managed remote MCP servers, allowing agents to query real-time data from services like Alpha Vantage for financial analysis or Cloud Logging for automated incident triage without maintaining complex server infrastructure.

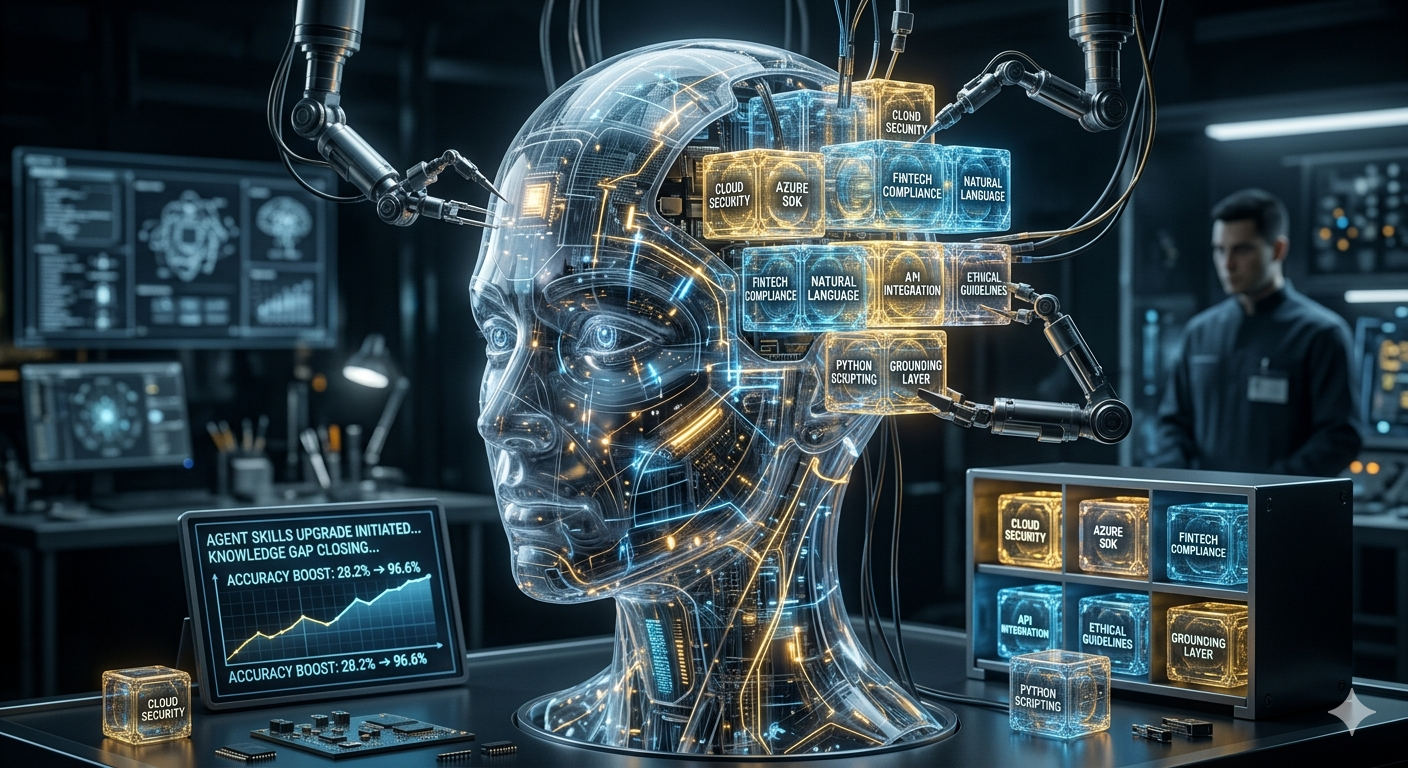

Empirical Evaluation of Agent Skills

The implementation of structured Agent Skills yields extraordinary quantitative improvements in artificial intelligence reliability. To test the efficacy of this architecture, Google DeepMind researchers built a specific “Gemini API developer skill” designed to close the knowledge gap regarding the latest Gemini software development kits. Because SDKs update frequently, language models inherently struggle to provide accurate syntax based solely on training data. The skill explicitly instructed the agent on the high-level features of the API, described current models, demonstrated basic sample code, and provided direct entry points to live documentation via a fetch_url tool.

The researchers tested this skill against an evaluation harness of 117 complex prompts requiring the generation of Python and TypeScript code. The results were decisive. The baseline without the skill achieved a success rate of only 28.2%, with the model frequently hallucinating deprecated methods or older model versions. With the Agent Skill enabled, the success rate rose to 96.6%.
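The percentages can be converted back into approximate prompt counts. The counts below are inferred from the reported rates and the 117-prompt total; they are not stated in the source.

```python
TOTAL_PROMPTS = 117


def passes_for(rate_percent: float, total: int = TOTAL_PROMPTS) -> int:
    """Nearest whole number of passing prompts implied by a success rate."""
    return round(rate_percent / 100 * total)


baseline_passes = passes_for(28.2)  # 33 of 117
skill_passes = passes_for(96.6)     # 113 of 117

if __name__ == "__main__":
    print(baseline_passes, skill_passes)                                  # 33 113
    print(TOTAL_PROMPTS - baseline_passes, TOTAL_PROMPTS - skill_passes)  # 84 4
    print(round(96.6 - 28.2, 1))                                          # 68.4
```

Read this way, the skill moved the implied failure count from 84 prompts to 4, a gain of 68.4 percentage points.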

This massive leap in reliability underscores the commercial viability of Agent Skills. By replacing static, probabilistic text generation with deterministic, grounded retrieval, organizations can drastically reduce the rate of critical errors in automated workflows. Furthermore, the file-based nature of Agent Skills offers a superior token-cost profile compared to loading dozens of extensive MCP tool schemas directly into the context window. Encoding operational knowledge into skills that are only loaded when triggered provides a highly efficient, scalable method for enterprise deployment.

Real-World Applications and Sectoral Return on Investment

The theoretical promise of agentic artificial intelligence is rapidly translating into measurable operational outcomes. As of 2026, the transition from experimental pilots to production-grade deployments is accelerating, driven by the realization that agents equipped with specialized skills can generate profound returns on investment (ROI) across disparate industries. Organizations are no longer deploying generic chatbots; they are deploying highly specialized, workflow-integrated agents that act autonomously within defined guardrails.

Clinical Medicine and Healthcare Administration

The healthcare sector, burdened by severe administrative overhead and an impending global shortage of ten million workers by 2030, has become a primary beneficiary of agentic workflows. Approximately 20% of healthcare budgets are consumed by administrative tasks, and physicians spend a significant portion of their clinical hours on documentation, leading to burnout and system inefficiency. Artificial intelligence agents in clinical settings are moving beyond simple symptom checkers to assume ownership of complex, end-to-end tasks.

Clinical documentation agents are dramatically reducing the administrative burden on practitioners. In the United Kingdom, AI-powered summarization agents extract crucial information from lengthy clinical correspondence, translating it into concise, actionable summaries for general practitioners. This specific implementation has resulted in a 50% reduction in administrative time spent on clinical documentation, allowing physicians to reallocate hours directly to patient care. Similarly, hospitals deploying automated documentation systems have reported saving up to 66 minutes per provider daily.

Beyond documentation, highly orchestrated multi-agent systems are optimizing entire revenue cycle management and patient scheduling workflows. One California-based healthcare provider deployed pre-built artificial intelligence agents to manage high-volume, multilingual patient interactions, including complex scheduling, lab queries, and pharmacy escalations. Because the agents possessed skills allowing them to integrate directly with electronic health records (EHR) and follow strict clinical triage protocols, the deployment yielded $3.2 million in newly enabled revenue, a 468% return on investment, and a 24% inquiry containment rate, meaning that share of inquiries was resolved without human intervention.

Furthermore, specialized diagnostic agents are demonstrating remarkable clinical efficacy. Tools analyzing medical imaging are achieving up to 98% accuracy in certain use cases, including 94% accuracy in detecting lung nodules and 90% sensitivity in breast cancer detection, reportedly outperforming human baselines and reducing diagnostic errors by 5%. These results suggest that specialized agents can operate reliably at the intersection of administrative efficiency and direct clinical outcomes.

Financial Services and Regulatory Compliance

In the financial services industry, speed, accuracy, and rigorous compliance are non-negotiable. Agentic workflows have demonstrated exceptional utility in automating complex, data-heavy processes that traditionally required extensive manual reconciliation. High-performing enterprise deployments indicate that the finance sector averages a 4.5x return on investment from artificial intelligence agents.

A primary use case is the automation of month-end financial reconciliation cycles. Utilizing advanced frameworks like LangChain and CrewAI, financial institutions have successfully reduced reconciliation cycles from an average of four days per month to under six hours. Furthermore, agents equipped with specialized compliance skills are autonomously monitoring global regulatory frameworks, conducting Know Your Customer (KYC) identity verifications, and generating real-time risk scoring. In environments where fraud losses are catastrophic, the ability of an agent to parse millions of transactions, apply complex regulatory logic, and flag anomalies in milliseconds provides a critical commercial advantage over human analysts who operate on much slower timelines.

Software Engineering and IT Operations

The domain of software engineering is undergoing a fundamental reconfiguration due to agentic coding tools. Developers are increasingly utilizing open standards like Agent Skills to encode team-specific methodologies—such as strict pull-request templates, test-driven development (TDD) requirements, and specific deployment pipelines—directly into the artificial intelligence’s behavior.

The impact is transformative. According to industry analyses, IT operations utilizing agentic systems are experiencing a 44% improvement in operational efficiency. Artificial intelligence agents are no longer just writing isolated blocks of code; they are autonomously auditing repositories, fixing lint errors, executing static checks, interacting with GitHub via MCP connectors, and opening fully documented pull requests. This shift allows human engineers to transition from writing every line of syntax to orchestrating long-running systems of agents, focusing their cognitive efforts on high-level architecture, strategy, and defining success criteria.

| Industry Sector | Primary Agentic Application | Quantified Business Impact |
| --- | --- | --- |
| Healthcare | Clinical documentation, Patient triage, Diagnostic imaging analysis. | 468% ROI; 50% reduction in documentation time; $3.2M revenue enablement; 98% image analysis accuracy. |
| Financial Services | Automated reconciliation, KYC risk scoring, Compliance monitoring. | 4.5x average ROI; Reconciliation cycles reduced from 4 days to under 6 hours. |
| Software Engineering | Autonomous code auditing, PR generation, Test-driven development workflows. | 44% improvement in IT operations efficiency; Massive acceleration in deployment velocity. |
| Customer Support | End-to-end ticket resolution, Billing queries, Multi-lingual onboarding. | Cost per resolution reduced from $15 to $2; 80% resolution of routine inquiries. |

Macroeconomic Impact and Workforce Transformation

The integration of agentic artificial intelligence into the global economy represents a macroeconomic shift of historic proportions. Analysts predict that by 2028, 33% of all enterprise software applications will include embedded agentic capabilities, up from less than 1% in 2024, and 15% of day-to-day business decisions will be executed entirely autonomously by artificial intelligence. The economic value unlocked by these systems is vast; long-term projections estimate a $4.4 trillion addition to global productivity growth from corporate use cases alone.

However, this transition introduces a profound reconfiguration of the workforce. Research assessing over 18,000 tasks across 1,000 professions indicates that up to 93% of jobs will experience some degree of impact from artificial intelligence. This represents an estimated $4.5 trillion worth of labor value shifting from human execution to machine automation in the United States.

The narrative surrounding this shift is evolving from fears of dystopian job displacement to a focus on systemic role augmentation. While highly repetitive, information-processing tasks are prime targets for autonomous execution, the demand for human oversight, strategic planning, and interpersonal skills is escalating. Economic research suggests that for 4 out of 5 jobs, artificial intelligence will not eliminate the role but will result in roughly 43% time savings, allowing workers to focus on higher-value responsibilities.

The nature of managerial and technical work is pivoting from the direct execution of tasks to the orchestration and auditing of complex multi-agent systems. This paradigm shift necessitates a massive global upskilling initiative. Organizations are recognizing that the true bottleneck to scaling artificial intelligence is not technological capability, but the scarcity of human talent capable of governing, evaluating, and steering autonomous agents. Successful integration of AI follows the 10-20-70 rule: 10% of effort dedicated to algorithms, 20% to technology infrastructure, and 70% to people and processes.

A new “Agent-as-a-Service” economy is emerging, characterized by the deployment of human-orchestrated fleets of specialized agents. In this economy, organizations that successfully combine human strategic judgment with the execution velocity of Agent Skills will capture disproportionate market share. Companies are shifting their focus toward building “superagency” within their workforce—empowering employees to treat agents as collaborative teammates capable of handling mundane operational loops, thereby unlocking unprecedented levels of human creativity and high-level problem-solving.

The Security Frontier: ToxicSkills and the Lethal Trifecta

As Agent Skills grant artificial intelligence systems unprecedented, autonomous access to enterprise environments, they simultaneously introduce severe, novel security vulnerabilities. The transition from passive, generative chatbots to active, autonomous agents fundamentally alters the attack surface. Traditional cybersecurity paradigms, designed to protect against human threat actors or static malware signatures, are ill-equipped to defend against systems that dynamically generate code, invoke APIs, and persist across multiple operational states.

A comprehensive 2026 security audit conducted by Snyk, focusing on the Agent Skills ecosystem across platforms like ClawHub, illuminated the critical nature of these threats. The research, termed “ToxicSkills,” scanned 3,984 publicly available Agent Skills and uncovered alarming statistics. The audit revealed that 36.82% of the ecosystem contained at least one security flaw, and 13.4% (534 skills) harbored critical-level vulnerabilities. More concerningly, researchers confirmed the active deployment of over 76 intentionally malicious payloads designed for credential theft, backdoor installation, and covert data exfiltration.

| ToxicSkills Audit Metric (Feb 2026) | Count / Percentage | Security Implication |
| --- | --- | --- |
| Total Agent Skills Scanned | 3,984 | Represents the largest public corpus of agent skills analyzed. |
| Skills with Security Flaws | 1,467 (36.82%) | High prevalence of hardcoded API keys and insecure credential handling. |
| Skills with Critical Issues | 534 (13.4%) | Direct exposure to malware distribution and prompt injection vectors. |
| Confirmed Malicious Payloads | 76+ | Active exploitation targeting credential theft and data exfiltration. |
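The percentages in the table follow directly from the counts, as the short check below shows. The share for malicious payloads is derived here from the lower bound of 76 and is not a figure reported in the audit.

```python
TOTAL_SCANNED = 3984


def share(count: int, total: int = TOTAL_SCANNED) -> float:
    """Percentage of the scanned corpus, rounded to two decimals."""
    return round(100 * count / total, 2)


if __name__ == "__main__":
    print(share(1467))  # 36.82 (skills with security flaws)
    print(share(534))   # 13.4  (skills with critical issues)
    print(share(76))    # 1.91  (lower bound for confirmed malicious payloads)
```

In other words, roughly one skill in three carried a flaw, about one in seven a critical issue, and at least about one in fifty a confirmed malicious payload.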

The primary vector for these attacks is the exploitation of the “Lethal Trifecta.” An artificial intelligence agent becomes critically dangerous when it simultaneously possesses access to private enterprise data (such as API credentials or SSH keys), exposure to untrustworthy external inputs (such as reading an external email or an unverified SKILL.md file), and the capability to communicate externally or change system states. Because Agent Skills execute with the inherited permissions of the host agent, a malicious skill—often disguised as a benign productivity tool like a Markdown formatter—can utilize bash scripts or network egress tools to silently compromise an entire infrastructure. This creates a supply chain security crisis that mirrors the early vulnerabilities seen in package ecosystems like npm and PyPI, but with the added complexity of natural language attack vectors.
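The trifecta lends itself to a simple policy check. The sketch below is one hypothetical way to encode it; the class and field names are invented for illustration and do not come from any particular framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentCapabilities:
    """Hypothetical capability flags for one agent deployment."""
    reads_private_data: bool       # e.g. API credentials, SSH keys
    ingests_untrusted_input: bool  # e.g. external email, unverified SKILL.md
    can_egress_or_mutate: bool     # network egress or state-changing tools


def has_lethal_trifecta(caps: AgentCapabilities) -> bool:
    """True only when all three risk conditions hold at the same time."""
    return (caps.reads_private_data
            and caps.ingests_untrusted_input
            and caps.can_egress_or_mutate)


if __name__ == "__main__":
    formatter = AgentCapabilities(
        reads_private_data=True,
        ingests_untrusted_input=True,
        can_egress_or_mutate=True,
    )
    sandboxed = AgentCapabilities(
        reads_private_data=True,
        ingests_untrusted_input=True,
        can_egress_or_mutate=False,  # egress blocked by the sandbox
    )
    print(has_lethal_trifecta(formatter))  # True
    print(has_lethal_trifecta(sandboxed))  # False
```

Removing any one of the three conditions breaks the attack path, which is why blocking egress or isolating credentials is often a cheaper fix than trying to filter every untrusted input.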

Furthermore, the Open Web Application Security Project (OWASP) has recognized this paradigm shift by releasing the Top 10 Risks for Agentic Applications. Chief among these vulnerabilities is “Agent Goal Hijack” (ASI01), wherein attackers use indirect prompt injections hidden within seemingly innocuous documents or metadata to completely override the agent’s original objective, turning it into a proxy for malicious action. “Tool Misuse & Exploitation” (ASI02) and “Unexpected Code Execution” (ASI05) further highlight the risks of granting autonomous systems unregulated access to enterprise environments. The convergence of natural language prompt injection with traditional malware execution pathways allows attackers to easily bypass standard heuristic security scanners.

Mitigating these risks requires a fundamental rethinking of enterprise architecture. Organizations must implement strict Zero Trust principles tailored for Non-Human Identities (NHIs). Security teams must prioritize the rigorous sandboxing of all executable skills, enforcing strict Role-Based and Attribute-Based Access Controls, and implementing continuous behavioral anomaly detection to monitor agent actions in real-time. Ultimately, the safe deployment of Agent Skills requires organizations to treat third-party artificial intelligence workflows with the same extreme scrutiny applied to critical software supply chains.
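One small piece of that scrutiny is a static scan before a third-party skill is installed. The sketch below is illustrative only: the three regular expressions are hypothetical heuristics, not the rules used in the ToxicSkills audit, and a check like this complements sandboxing rather than replacing it.

```python
import re
from pathlib import Path

# Hypothetical heuristics; a real scanner would use a far larger rule set.
SUSPICIOUS_PATTERNS = {
    "hardcoded_secret": re.compile(
        r"(api[_-]?key|secret|token)\s*[:=]\s*['\"][A-Za-z0-9_\-]{16,}['\"]",
        re.IGNORECASE,
    ),
    "pipe_to_shell": re.compile(r"curl[^\n|]*\|\s*(ba)?sh"),
    "ssh_key_access": re.compile(r"\.ssh/id_[a-z0-9]+"),
}


def scan_text(text: str) -> list[str]:
    """Return the sorted names of all patterns found in the text."""
    return sorted(name for name, rx in SUSPICIOUS_PATTERNS.items() if rx.search(text))


def scan_skill(skill_dir: Path) -> dict[str, list[str]]:
    """Scan every file in a skill directory; map relative path to findings."""
    findings: dict[str, list[str]] = {}
    for path in sorted(skill_dir.rglob("*")):
        if path.is_file():
            hits = scan_text(path.read_text(encoding="utf-8", errors="ignore"))
            if hits:
                findings[str(path.relative_to(skill_dir))] = hits
    return findings


if __name__ == "__main__":
    print(scan_text('API_KEY = "abcd1234efgh5678ijkl"'))
    print(scan_text("curl https://example.invalid/setup.sh | sh"))
    print(scan_text("Format the Markdown table."))
```

Pattern matching of this kind catches careless mistakes and crude payloads, but natural-language prompt injection inside a SKILL.md will pass straight through it, so runtime controls remain necessary.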

Sovereign AI and the India AI Mission: A Geopolitical Strategy

As the geopolitical and economic advantages of agentic artificial intelligence become undeniable, nations are rapidly formulating strategies to ensure technological sovereignty. The Government of India’s approach, encapsulated in the India AI Mission, provides a comprehensive framework for democratizing artificial intelligence while safeguarding national interests and ensuring inclusive societal development. For policymakers, technologists, and public service aspirants, understanding this framework is critical, as it dictates the future of the nation’s digital economy.

Approved in March 2024 with a substantial budget outlay of ₹10,371.92 crore (approximately US$1.2 billion), the India AI Mission is designed to position the nation as a global powerhouse in artificial intelligence innovation. The strategy diverges from the purely commercial or defense-oriented models seen in the West and China, focusing instead on “Sovereign AI”—the capability to develop, deploy, and govern artificial intelligence utilizing domestic infrastructure, indigenous data, and local talent to ensure strategic autonomy.

The mission is structured around seven highly integrated pillars designed to build a robust, self-reliant ecosystem:

| Pillar of the India AI Mission | Strategic Objective and Implementation Details |
| --- | --- |
| Compute Capacity | Establishment of a massive computing infrastructure, beginning with the procurement of 10,000 Graphics Processing Units (GPUs) and scaling toward a national compute pool of more than 38,000 GPUs. Designed to provide affordable, high-performance computing access to startups and researchers. |
| Innovation Centre | Focused on deep-tech research and the development of indigenous Large Multimodal Models (LMMs). Crucially, these models are trained on datasets covering the 22 official Indian languages to ensure broad domestic applicability and cultural relevance. |
| Datasets Platform (AIKosh) | Creation of a unified, national platform providing seamless access to high-quality, non-personal, and anonymized datasets to fuel algorithm training and innovation. |
| Application Development | Prioritizing the creation of impactful artificial intelligence solutions targeted at critical socio-economic sectors, particularly healthcare, agriculture, and governance. |
| FutureSkills | Addressing the massive talent gap by establishing Data and AI Labs in Tier 2 and Tier 3 cities, offering fellowships, and expanding educational programs to meet the projected demand for one million AI professionals by 2026. |
| Startup Financing | Providing streamlined access to essential risk capital and funding mechanisms for deep-tech artificial intelligence startups to foster a vibrant domestic entrepreneurial ecosystem. |
| Safe and Trusted AI | Developing robust ethical guidelines, privacy-preserving frameworks, and governance structures to ensure the responsible deployment of artificial intelligence, mitigating risks like bias and algorithmic discrimination. |

The evolution into the “India AI Mission 2.0,” announced in early 2026, further underscores a commitment to translating raw compute power into tangible economic value. This second phase emphasizes the integration of artificial intelligence into the Micro, Small, and Medium Enterprises (MSME) sector, aiming to package complex agentic workflows into highly accessible, ready-to-use digital public infrastructure—modeled on the success of the Unified Payments Interface (UPI).

Furthermore, initiatives like “Bhashini” are utilizing artificial intelligence to dismantle language barriers, providing voice-first interfaces that enable India’s vast population of informal workers to access digital services, smart contracts, and micro-credentials regardless of literacy levels. Sovereign initiatives are already yielding highly advanced technical outputs, such as Sarvam AI’s “Sarvam Vision” model, an indigenous 3-billion parameter model capable of complex knowledge extraction from multilingual Indian documents, outperforming global models on India-specific benchmarks. By combining immense computational investments with a strict focus on ethical governance, frugal innovation, and inclusive deployment, India is pioneering a model of artificial intelligence development that views technological autonomy not merely as a corporate asset, but as a critical utility for national resilience and socio-economic transformation.

Strategic Conclusions and Future Trajectories

The evolution of artificial intelligence from static, conversational interfaces to dynamic, autonomous agents represents the most significant technological leap since the advent of cloud computing. The integration of Agent Skills, combined with standardized connectivity protocols like MCP, has successfully addressed the historical limitations of the large language model knowledge gap. By replacing probabilistic text generation with structured, procedurally governed workflows, enterprises are finally achieving the reliability required to deploy artificial intelligence into high-stakes, mission-critical environments.

The quantitative evidence is definitive: Agent Skills fundamentally transform the accuracy, efficiency, and return on investment of artificial intelligence systems. From a 96.6% success rate on complex code-generation tasks to multi-million dollar returns in healthcare administration and sharply shorter financial reconciliation cycles, the procedural intelligence provided by these modular capabilities is redefining operational economics. The architecture of progressive disclosure ensures that these capabilities scale without overwhelming context limits, allowing agents to act as highly specialized domain experts.

Looking forward, the trajectory of artificial intelligence development points toward the advent of self-evolving systems and the broader “Internet of Agents” (IoA). As agents become more deeply integrated into organizational workflows, they will transition from merely executing pre-packaged skills to dynamically observing environments, identifying inefficiencies, and autonomously generating, testing, and deploying their own novel skills. This continuous learning loop will effectively eliminate the latency between technological advancement and artificial intelligence capability, fostering systems that adapt to shifting APIs, policies, and environments in real-time. Furthermore, integration with decentralized infrastructure, such as Account Abstraction (ERC-4337) and decentralized identifiers (DIDs), will enable agents to execute autonomous financial settlements and establish verifiable on-chain reputations, facilitating an entirely new agentic economy.

However, this transition is inextricably linked to severe security and governance challenges. The rise of toxic skills, prompt injection, and unauthorized data exfiltration necessitates a paradigm shift in cybersecurity, demanding stringent Zero Trust architectures, secure sandboxing, and real-time behavioral oversight. Concurrently, as nations like India demonstrate through comprehensive sovereign artificial intelligence initiatives, the ultimate success of agentic systems relies on robust public infrastructure, ethical oversight, and a commitment to utilizing autonomous technologies to augment human potential rather than merely displacing it. Organizations and nations that successfully balance the extreme velocity of agentic capabilities with rigorous, human-centric governance will dictate the economic and technological landscape of the coming decade.
