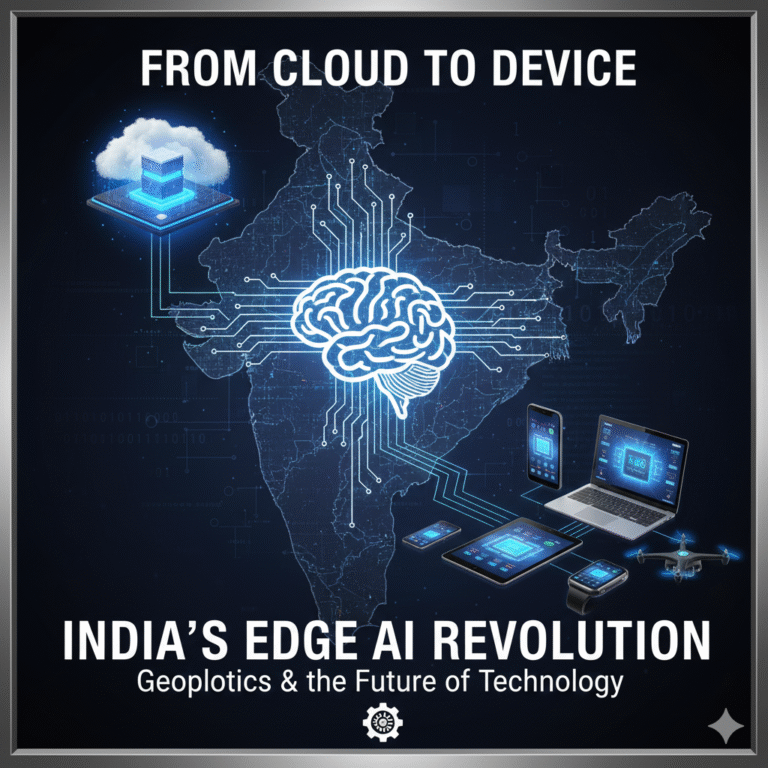

Artificial intelligence (AI) has entered a new phase: massive language models and real-time analytics are straining conventional computer architectures. The solution lies in a hardware revolution: specialized chips and infrastructure built to handle AI’s unique demands. Globally, governments and industry are racing to build computing power. India, for instance, plans to ramp up its AI ‘compute arsenal’ from ~38,000 GPUs today to nearly 200,000, alongside offering tax breaks for data centres (source: Moneycontrol).

This surge reflects a broader trend: legacy CPU-based systems can’t keep up, so GPUs, neuromorphic chips, photonic processors and other accelerators are stepping in. Why it matters: these advanced chips underpin everything from ChatGPT-style AI to smart infrastructure. Investments in AI hardware have strategic, economic and security dimensions – influencing everything from tech competitiveness to national defense. This article breaks down the AI hardware revolution: what’s happening, how it works, who’s involved, and what it means for India and the world.

Key Highlights

- National Compute Push: India’s government is targeting ~200,000 GPUs for its AI mission, up from ~38,000 today, and has granted long-term tax holidays for data centres, aiming to attract $200B in AI infrastructure investment.

- Chipmaking Fund: New policies back domestic chip production. In 2026 India announced an $11B fund for local chip manufacturing, alongside the Semicon India Programme, which offers incentives and has 10 approved semiconductor units.

- Innovative Research: Indian researchers achieved a breakthrough with a brain-inspired “neuromorphic” chip storing 16,500 memory states, potentially enabling complex AI tasks on smaller devices. This aligns with the nation’s Semiconductor Mission 2.0.

- Global Chip Trends: Worldwide, specialized AI chips are booming. Industry giants and startups are building hardware to meet AI’s data deluge: NVIDIA’s GPUs, Google’s TPUs, photonic technology from Lightmatter and PsiQuantum, and edge-AI chips.

- Strategic Importance: One analysis finds that the computing power needed to train advanced AI models has been doubling roughly every six months. Countries see chips as strategic assets: the U.S.-China tech rivalry hinges on chip access, while India uses Make-in-India, AI missions, and global partnerships to stay competitive.

Contextual Understanding:

Over the past decade, AI workloads have grown from simple models to huge deep networks. Training a top AI model now needs exponentially more compute: one study finds requirements have doubled roughly every six months since 2018 (cfr.org). Traditional CPUs (general-purpose processors) cannot handle this load efficiently. GPUs (graphics processing units) turned out to be the key enabler: originally designed for parallel graphics rendering, their architecture excels at the matrix math that deep learning requires. Companies like NVIDIA (GPUs) and Google (TPUs) built specialized chips and software ecosystems around them.
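To get a feel for what a six-month doubling time implies, the short sketch below compounds it over several years. It assumes the doubling time stays constant, which is a simplification of the cited trend; the function name `compute_growth` is illustrative only.

```python
def compute_growth(years: float, doubling_months: float = 6.0) -> float:
    """Multiplicative growth in required compute after `years`,
    assuming a constant doubling time (a simplifying assumption)."""
    doublings = years * 12.0 / doubling_months
    return 2.0 ** doublings


# Compound the ~6-month doubling over a few horizons.
for years in (1, 2, 4, 6):
    print(f"after {years} year(s): about {compute_growth(years):,.0f}x the compute")
```

Under this assumption, compute needs grow 4x per year, so even a few years of the trend outpaces what general-purpose CPU improvements alone can deliver.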

Simultaneously, the global chip supply chain entered the spotlight. Recent years saw chip shortages in smartphones, cars and data centers, partly from surging demand. Major powers responded with industrial policies: e.g., the U.S. CHIPS Act, China’s semiconductor programs, and India’s semiconductor incentives. In India, the Atmanirbhar Bharat (self-reliant India) vision has prioritized semiconductors as foundational. Since 2021 the government has launched the Semicon India Programme and secured ₹1.6 lakh crore of investment, and in 2026 it unveiled the India Semiconductor Mission 2.0. These policies aim to create an end-to-end chip ecosystem (design, fabrication, packaging) to reduce dependence on imports.

Meanwhile, AI itself is spreading across sectors (healthcare, finance, governance) and devices (phones, IoT). Indian initiatives like IndiaAI focus on language-model development, AI startups, and data lake frameworks. But all these need one thing in common: massive computing power. This sets the stage for a hardware arms race. As one analysis notes, the U.S.’s lead in AI rests largely on its unparalleled computing capability. India too wants a seat at that table – using policies, partnerships and indigenous R&D to build its computing base.

Core Issue Explained

What is happening? – The AI Chip Boom

The core phenomenon is the surge in AI-dedicated hardware. Unlike decades past when one CPU could run most tasks, modern AI often trains and runs on specialized accelerators. For example, NVIDIA’s data center GPUs (like H100/H200) now dominate AI training. Other players include: Google’s Tensor Processing Units (TPUs), which accelerate neural nets; Graphcore’s Intelligence Processing Units (IPUs); Habana and Cerebras’ AI chips; and leading smartphone SoCs with Neural Processing Units (NPUs). Additionally, new areas like silicon photonics (using light for data interconnect) and neuromorphic chips (brain-inspired analog processors) are gaining momentum.

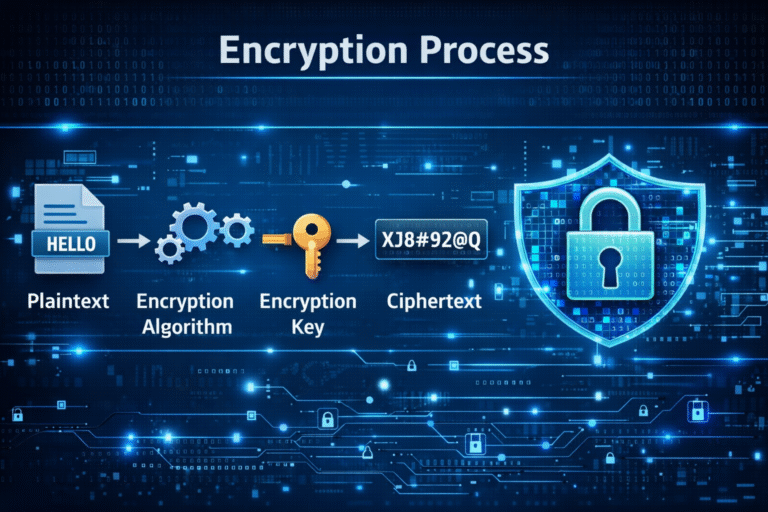

In practice, a model such as a large language model is trained on thousands of GPUs or AI chips running in parallel. Each of these chips performs trillions of multiply-accumulate operations per second. Once trained, the model is deployed in data centers or on edge devices with specialized inference chips. Throughout, high bandwidth and low latency between chips (and between chip and memory) are crucial. In essence, AI has moved from being a single-CPU problem to a compute-cluster problem, with novel hardware architectures needed to keep up.
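The parallel-training idea can be sketched in a few lines. The toy example below simulates data-parallel training in plain Python: each "worker" computes a gradient on its own shard of the data, and the gradients are averaged before the shared weight is updated. The one-parameter model, the data, and the helper names (`shard_gradient`, `all_reduce_mean`, `train`) are all assumptions made for illustration; real clusters do the averaging step with collective-communication libraries over fast interconnects.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (input x, target y)


def shard_gradient(w: float, shard: List[Sample]) -> float:
    """Mean gradient of the squared error (w*x - y)^2 with respect to w on one shard."""
    return sum(2.0 * (w * x - y) * x for x, y in shard) / len(shard)


def all_reduce_mean(values: List[float]) -> float:
    """Stand-in for a cluster all-reduce: average the per-worker gradients."""
    return sum(values) / len(values)


def train(data: List[Sample], num_workers: int, steps: int = 200, lr: float = 0.01) -> float:
    # Equal-sized shards, one per simulated worker (assumes an even split).
    size = len(data) // num_workers
    shards = [data[i * size:(i + 1) * size] for i in range(num_workers)]
    w = 0.0
    for _ in range(steps):
        # On real hardware these gradient computations run at the same time.
        grads = [shard_gradient(w, shard) for shard in shards]
        w -= lr * all_reduce_mean(grads)
    return w


data = [(float(x), 3.0 * x) for x in range(1, 9)]  # toy data, true weight is 3.0
w_single = train(data, num_workers=1)
w_parallel = train(data, num_workers=4)
print(w_single, w_parallel)
```

With equal-sized shards, averaging the per-worker gradients gives the same update as one large batch, which is why adding chips speeds up training without changing the result. The averaging step is also where inter-chip bandwidth and latency start to matter.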

How does it work?

Key Technologies

- GPUs: General-purpose GPUs (e.g., NVIDIA A100, H100) are massively parallel processors. They use thousands of small cores and high-bandwidth memory (HBM) to process AI workloads. For example, NVIDIA’s H100 is rated on the order of 1,000 trillion low-precision tensor operations per second. Many GPUs are networked (via InfiniBand or, increasingly, photonic links) into clusters housed in supercomputers or data centers. This parallelism is critical because AI training scales by spreading the work across more chips.

- TPUs and ASICs: Google’s TPUs are custom ASICs (application-specific integrated circuits) for AI. They further tailor the architecture (e.g., systolic arrays) for tensor math. Similarly, many companies (Intel, AMD, Qualcomm) design AI accelerators (ASICs or FPGAs) optimized for specific tasks. For instance, Apple’s M-series chips include a Neural Engine for on-device AI (image recognition, Siri). These chips work by integrating additional “AI cores” and memory to run ML tasks efficiently, often with lower power.

- Neuromorphic Chips: These mimic brain-like computation. Instead of binary logic, they use analog or spiking architectures that can store many states per synapse. The IISc “brain-on-chip” discovery can hold ~16,500 conductance states in a tiny molecular film. This allows data processing and storage to occur in the same place, radically lowering energy use for certain tasks. Such chips are still experimental but promise to eventually run AI on devices as small as smartphones, reducing reliance on cloud data centers.

- Photonics and Optical Interconnects: AI data centers are hitting a “networking bottleneck,” as wired electrical links struggle to move colossal data. Enter silicon photonics: lasers and waveguides on a chip. Startups like Lightmatter and Celestial AI use light-based links between chips and racks. For example, Lightmatter’s silicon photonic engine connects chips in 3D with light, achieving record interconnect speeds. Broadcom’s new “Thor Ultra” chip and ARM’s DreamBig also push these advances. Photonic interconnects are poised to double or triple inter-chip bandwidth, keeping GPUs and TPUs fed with data.

- Quantum Computing (Emerging): While true quantum AI chips are nascent, some labs (e.g., Quantinuum) are exploring hybrid quantum-classical setups. These could one day accelerate certain optimization or sampling tasks. However, for now quantum is a future angle rather than a key player in today’s AI hardware landscape.
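One way to read the ~16,500-state figure in the neuromorphic item above is to convert it into an equivalent number of bits per storage element. The sketch below does that arithmetic. It is an idealized upper bound that assumes every state can be read back perfectly, which real analog devices do not guarantee. The function names are illustrative and the bit-equivalence is not a claim from the IISc work itself.

```python
import math


def bits_per_cell(num_states: int) -> float:
    """Ideal information capacity of one element with `num_states` distinguishable levels."""
    return math.log2(num_states)


def cells_needed(total_bits: int, num_states: int) -> int:
    """Elements needed to hold `total_bits`, under the noise-free assumption."""
    return math.ceil(total_bits / bits_per_cell(num_states))


print(f"binary cell:       {bits_per_cell(2):.2f} bits")
print(f"16,500-state cell: {bits_per_cell(16_500):.2f} bits")
print(f"cells to store 1,024 bits: {cells_needed(1024, 2)} binary "
      f"vs. {cells_needed(1024, 16_500)} multi-state")
```

Because 2^14 = 16,384, a 16,500-level element carries slightly more than 14 bits in the ideal case, compared with 1 bit for a conventional binary cell.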

Who is involved? – Key Players and Institutions

- Industry Giants: NVIDIA (US) leads in AI GPUs, followed by AMD, Intel, Google (TPUs) and Chinese firms like Huawei (Ascend chips). These companies continuously scale chip performance (smaller process nodes, more cores). For example, a recent analysis shows NVIDIA’s H200 (2025) outperforms all known Chinese chips by a wide margin, reflecting the technology gap driven by global supply controls. Tech giants also drive R&D: Amazon’s Inferentia chips, Apple’s neural cores, Meta’s in-house AI chips (MTIA).

- Startups: Deep-tech startups are innovating. Neuromorphic: BrainChip (Australia), alongside research efforts such as Intel’s Loihi and, in India, the IISc team. Photonic: Lightmatter, PsiQuantum, and Celestial AI (with funding from Intel, AMD). Edge AI: startups building tiny AI modules for IoT. They often partner with big foundries (TSMC, Intel).

- Government & Policy Bodies: In India: MeitY (Ministry of Electronics & IT) leads policy; NITI Aayog (IndiaAI Mission); DST (funds AI R&D). Internationally: U.S. Department of Commerce (CHIPS Act funding and chip export controls), EU Chips Act, China’s MIIT (Made in China 2025). Regulatory bodies and think-tanks also shape strategy.

- Academic and Research: Universities and institutes (IISc, IITs) conduct core AI hardware research. The IISc neuromorphic team with MeitY backing is one example. Defense R&D labs (DRDO) explore AI for battlefield (e.g. AI-enabled drones, secure chips). Collaborative forums (like U.S.-India AI Summits) bring together officials and experts on AI hardware policies.

Conceptual Breakdown:

AI hardware relies on several key concepts and mechanisms:

- Parallelism & Matrix Math: Deep learning reduces to linear algebra. Chips accelerate matrix multiplications (MAC operations). GPUs achieve this by having thousands of cores that work in parallel. GPUs use high-bandwidth memory (HBM) and fast interconnect (NVLink) to feed data to cores. TPUs use systolic array architectures to pass data through a grid of multiply-accumulate units in lockstep.

- Precision and Efficiency: Many AI chips optimize numeric precision. e.g., NVIDIA H100 uses Tensor Cores with FP8 precision for speed. Google TPUs use bfloat16. Lower precision (8-bit, even 4-bit) can dramatically cut memory/transmission needs with minimal accuracy loss, allowing faster computation. Neuromorphic chips take this further, inherently working with analog levels or spikes.

- Memory & Data Movement (“Memory Wall”): A core challenge is moving data to and from compute units. Modern chips incorporate stacked memory (HBM2e/3) on-package, and some integrate small caches or SRAM for local weight storage. Innovations like the chip from Canadian startup Taalas hard-code model weights into the chip’s metal layers, eliminating external memory, but at the cost of flexibility (each chip runs only one model). Overcoming the “memory wall” is a research priority.

- Interconnect and Networking: For multi-chip systems, interconnect tech is crucial. Traditional copper wires hit physical limits at tens of Gbps. Photonic interconnects (using light pulses) promise Tbps links with lower latency/energy per bit. Companies like Broadcom and ARM are developing chips and IP for this. The concept here: imagine linking GPUs or even entire GPU boards via optical fibers embedded on silicon.

- Power and Cooling: High-performance chips generate heat. Data centers invest in liquid cooling, AI-friendly data center design, and even immersion cooling for exascale. Energy efficiency is paramount; it drives interest in analog/neuromorphic chips (less switching) and even memristor-based architectures. Smart power management and specialized deep learning instruction sets also help.
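The precision point in the list above can be illustrated with a minimal sketch of symmetric 8-bit quantization: weights are scaled into the integer range [-127, 127], stored as one byte each, and scaled back when used. This is a generic textbook scheme chosen for illustration, not the specific method used by any chip named above, and the sample weights are made up.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.82, -1.27, 0.05, 0.40, -0.66]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print("max round-trip error:", max_error)
# FP32 uses 4 bytes per weight, INT8 uses 1 byte per weight.
print(f"storage: {4 * len(weights)} bytes -> {len(weights)} bytes")
```

The round-trip error is bounded by half the scale factor, while storage and memory traffic fall by 4x relative to 32-bit floats. That trade-off is what makes low-precision formats attractive for both training and inference hardware.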

                    User Device
                         |
                  [AI Application]
                         |
                    (Inference)
        ┌─────────────┐      ┌─────────────┐
        │ Edge AI Chip│<====>│ Data Center │
        │  (NPU/TPU)  │      │   GPU/TPU   │
        └─────────────┘      └─────────────┘
               \                    /
      [Sensors/Data Flow]  [Training & Storage]

The diagram above shows how edge AI chips (for phones/IoT) connect to larger GPU/TPU clusters for heavy-duty training and data processing.

- Ecosystem Layers: An AI system stack includes hardware, runtime (like CUDA, XLA), frameworks (TensorFlow, PyTorch) and finally applications. Hardware advances (e.g., new GPU architecture) often require updates through this stack. India’s National Digital Communications Policy and electronics policies are starting to include AI stack considerations, aiming to nurture domestic software/hardware synergy.
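The “memory wall” described in the list above is often reasoned about with the roofline model, a standard back-of-the-envelope bound that is brought in here for illustration and is not taken from the article’s sources. Attainable throughput is the lower of peak compute and memory bandwidth multiplied by arithmetic intensity (operations performed per byte moved). The accelerator numbers below are hypothetical and do not describe any vendor’s product.

```python
def attainable_tflops(peak_tflops: float, bandwidth_tb_s: float, flops_per_byte: float) -> float:
    """Roofline bound: throughput is capped by compute or by memory traffic,
    whichever limit is lower. TB/s times FLOP/byte gives TFLOP/s."""
    return min(peak_tflops, bandwidth_tb_s * flops_per_byte)


# Hypothetical accelerator (illustrative numbers only).
PEAK_TFLOPS = 1000.0    # peak compute throughput
BANDWIDTH_TB_S = 3.0    # memory bandwidth in terabytes per second

for intensity in (1, 10, 100, 1000):
    bound = attainable_tflops(PEAK_TFLOPS, BANDWIDTH_TB_S, intensity)
    limit = "memory-bound" if bound < PEAK_TFLOPS else "compute-bound"
    print(f"{intensity:>5} FLOP/byte -> {bound:7.1f} TFLOP/s ({limit})")
```

For this hypothetical chip, any workload below roughly 333 operations per byte leaves compute units idle while they wait for data. This is why on-package HBM, larger on-chip SRAM, and lower-precision formats all target data movement rather than raw arithmetic.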

Policy Implications

The AI hardware trend has broad implications:

- National Security: Advanced chips are dual-use and critical for defense (e.g., satellite imagery analysis, cyber defense, missile systems). In hypersonic defense, for example, India is integrating AI into missile-tracking systems (AI-enabled radars in Project Kusha). More broadly, owning chip technology is seen as strategic. U.S. export controls on AI chips aim to slow Chinese military AI. India’s push for indigenization (Semicon Mission, research consortia) is partly about security of supply and tech autonomy.

- Economic Impact: AI chips and data centers can attract huge investment. India’s policies (₹1.6 lakh crore investment commitments, $11B chip fund) aim to capture part of a global semiconductor market that industry forecasts expect to approach $1 trillion by 2030. A robust chip sector also creates high-tech jobs and spillovers to automotive, telecom, etc. Conversely, failure to develop chips risks dependency: India currently imports nearly all its high-end chips. Scaling local design (105 design houses, 315 universities with EDA tools as per PIB) could foster startups and R&D.

- Geopolitics: Chips are a new frontier in strategic competition. The US–China tech race centers on semiconductor leadership. India is balancing relations: aiming to tap US tech (RisingBharat Summit discussions, multilateral tech agreements) while also maintaining ties with global players (Nvidia, AMD, ARM all seek Indian markets). Collaboration like the US-India AI Compact (security linkages, DPI integration) hints that India’s AI/semiconductor plans are entwined with allies. However, India also must ensure “national interest” (data sovereignty, pricing).

- Governance & Policy: AI chips pose regulatory questions. Governments must update education (AI engineering courses, chip design training), data laws (for training data on local servers), and energy grids (powering data centers). India’s recent Union Budget gave tax incentives for data centers to boost cloud/AI infrastructure, signaling recognition of this need. Similarly, defense testing (iDEX challenges for AI solutions) and cybersecurity frameworks may incorporate AI chips.

- Social Implications: More AI hardware means more AI applications. This raises concerns about job displacement (automation) and privacy (AI surveillance possible with powerful processors). The government acknowledges this with skilling programs and an AI national strategy focusing on inclusivity. Policymakers must balance innovation with safeguards like AI regulation bills under consideration.

Concerns

- Technological Gaps: India lacks high-end fabrication plants (fabs) for cutting-edge chips (current projects target 28nm and older nodes). Most advanced chips (5nm, 3nm) are made by TSMC (Taiwan) and Samsung. Building such fabs is enormously expensive (~$20B), so India starts well behind the leading edge.

- Supply Chain Dependence: Even as design and R&D grow, India must import materials, equipment, and chips. Disruptions (e.g., pandemic, geopolitical tensions) could hamper AI projects.

- Skilled Manpower Shortage: Designing chips requires EDA tool expertise and microelectronics engineers. Despite programs (EDA access to 315 universities), there is still a shortfall in top-tier chip designers. Brain drain to global chip firms is a concern.

- Capital Intensity: Chip plants and AI data centers require massive investment. The ₹1 lakh crore funding and the $11B fund address this, but private investors remain wary of long gestation periods. Continuous subsidies and policy support are needed.

- Ethical/Environmental: High-power AI data centers consume large amounts of electricity, often from carbon-heavy sources. Balancing AI growth with sustainability (e.g., renewable energy in data centers) is a looming issue. Additionally, as AI capabilities spread, issues of bias and misuse (deepfakes, surveillance) persist. These relate more to software than to hardware, but policy oversight must keep pace.

Future Outlook

- Scale-up of Infrastructure: Expect more data center parks and GPU cluster installations in India. Government AI Mission 2.0 will likely involve public-private partnerships to build “AI FabLabs” or supercomputing grids.

- New Chip Technologies: Beyond 2030, India may roll out AI-specific chips (the indigenous neuromorphic chip, possibly 2nm designs from IIT/IISc) and pilot photonic links in HPC networks. Early action in these fields could help it leapfrog current lags.

- Global Collaboration: India will deepen collaborations (as hinted by US-India tech compacts) to import expertise while growing local. For instance, tie-ups for 2nm processes or joint R&D centers may emerge.

- Regulatory Moves: The AI hardware surge will prompt regulatory responses: perhaps stricter export controls on AI chips (like those of the U.S.) and domestic standards for data security. India might also push for international norms on AI supply chains at forums like the G20.

- Market Evolution: The AI chip market may consolidate. Some analysts predict a shakeout (as smaller AI chip startups merge or get acquired). Indian startups in chip design may either partner with big foundries or license foreign IP.

- Defense & Dual-Use: The Indian military may adopt more AI-capable hardware (drones with onboard AI, secure communication chips). AI-driven radar and sensor systems could become common under defense modernization efforts.

Conclusion

In summary, the AI hardware revolution marks a turning point: raw computing muscle (GPUs, specialized chips, photonic links) is becoming as crucial as AI algorithms themselves. For India, this means a twofold challenge: ramping up domestic chip capabilities (from design labs to fabs) and leveraging global advances (importing high-end chips, collaborating internationally). The combined strategy of ambitious targets (like 200,000 GPUs), funding semiconductors, and fostering homegrown research (e.g. IISc’s neuromorphic chip) is aimed at ensuring India doesn’t miss the AI revolution train.
