Key Takeaways

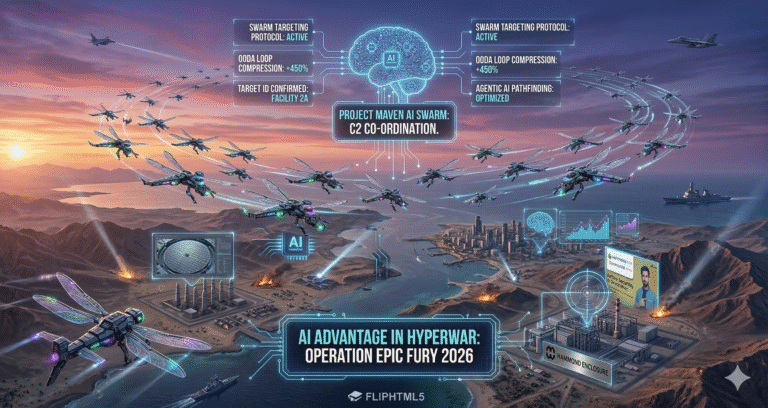

- Speed & Decision Superiority: AI tools have dramatically accelerated US war planning and strikes, enabling “smarter decisions faster than the enemy can react”.

- Comprehensive AI Use: From drone swarms with target-recognition to AI-powered logistics, U.S. forces leverage AI in nearly every facet of the conflict, gaining an asymmetric edge. For example, Anthropic’s Claude model is used to collapse planning timelines.

- Human Oversight Remains Critical: Officially, humans still approve all targeting. However, experts warn of “decision compression” where AI suggestions are so rapid that they risk automatic approval. Maintaining strict ethical controls is a major challenge.

- Iran’s Capabilities Lag: Iran’s AI program is far behind the US/China level, meaning Tehran must rely on older tactics. This technological gap contributes to the U.S. gaining early dominance.

- Global and Indian Context: The conflict is reshaping international norms on autonomous weapons. For India, it underscores the importance of building indigenous defense AI (for strategic autonomy) while upholding humanitarian principles.

The 2026 U.S.–Iran conflict has become a high-tech battleground where artificial intelligence (AI) gives a clear edge to technologically advanced forces. Within days of the campaign, U.S. and allied strikes – nearly 900 in the opening 12 hours – demonstrated a level of speed and precision unseen in past wars. (Source: The Guardian)

AI-driven systems are collapsing the “kill chain” (the steps from target ID to engagement) from days or weeks into mere seconds. As one British defense expert noted, these tools produce strike options so quickly that “the advantage is in the speed of decision-making, the collapsing of planning from days or weeks… to minutes or seconds”. This digital advantage has enabled U.S. forces (and Israeli allies) to make faster, more informed decisions than their adversaries. In effect, AI is giving the U.S. “an asymmetric edge” by automating intelligence analysis, logistics and targeting in real time. (Source: Al Jazeera)

The implications are profound. As U.S. Central Command head Admiral Brad Cooper announced, AI tools are helping America’s warfighters process vast troves of battlefield data in seconds, allowing leaders to “cut through the noise and make smarter decisions faster than the enemy can react”. In short, AI is acting like a force multiplier: turning hours of human analysis into seconds of computation, and enabling an “AI-first” warfighting force across all domains. This article examines exactly how AI is reshaping the 2026 US–Iran conflict – from the front lines to the command center – why it matters today, and what it means for future warfare.

How AI Is Used in This War

AI tools are being applied across the entire spectrum of military operations. According to Georgetown University analyst Lauren Kahn, the U.S. military in 2026 is using AI “from boardroom to battlefield”. (Source: wunc.org)

In practice this means:

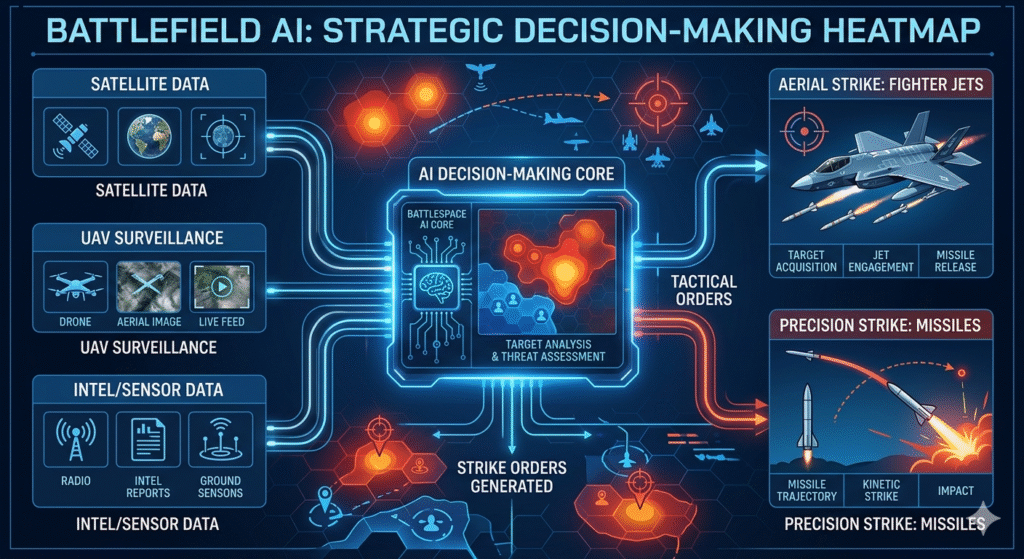

- Intelligence Analysis: Machine-learning models rapidly sift satellite and drone imagery, signals intelligence and communications intercepts to identify threats. For example, systems built by Palantir (in partnership with AI labs like Anthropic) can ingest terabytes of sensor data, flag possible targets, and even suggest optimal weaponry – shortening the kill chain dramatically.

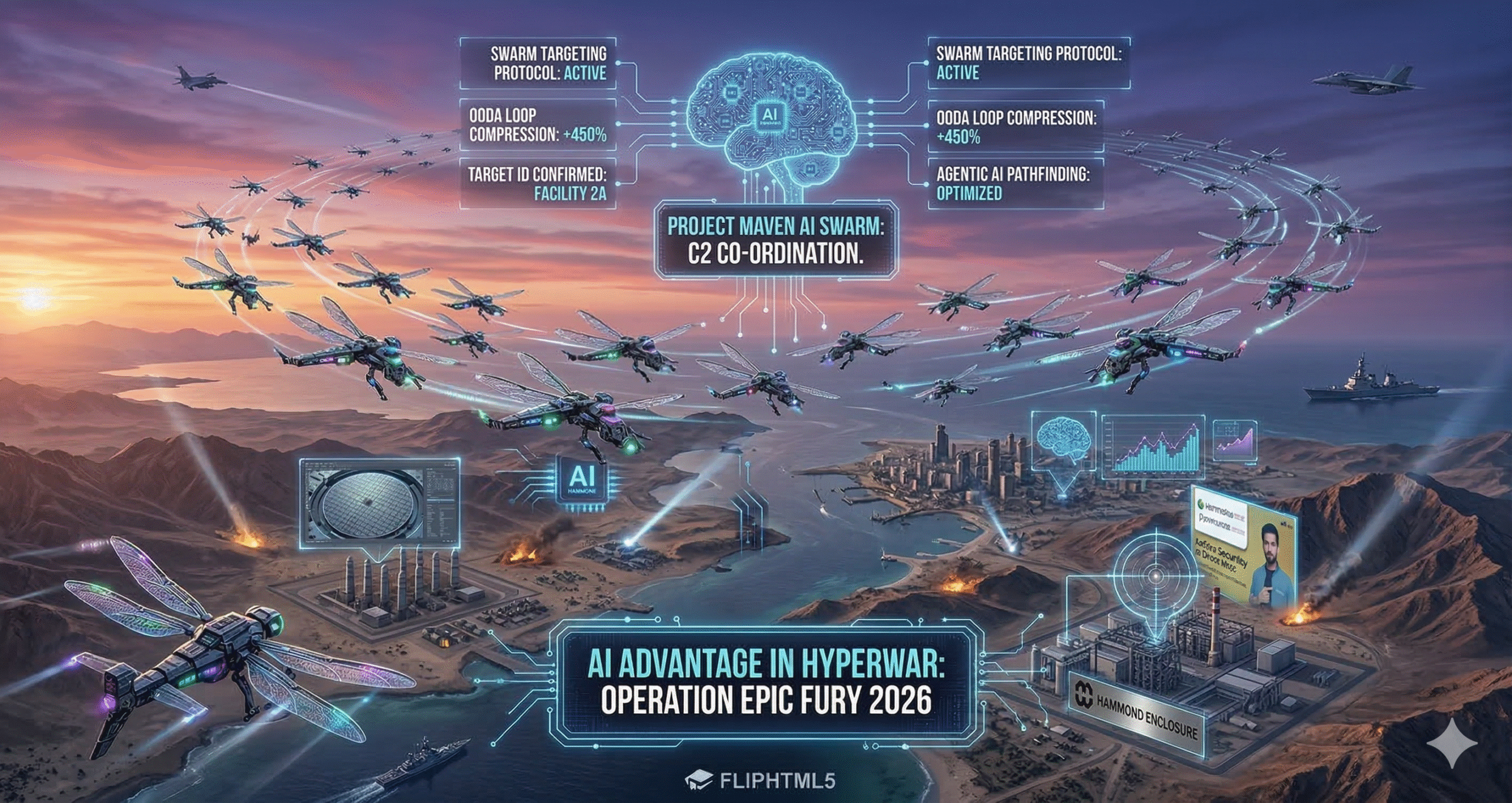

- Autonomous Drones & Swarms: AI algorithms guide fleets of drones in “swarm” formations. Israel (working with U.S. forces) has even deployed a “mother ship” concept: large aircraft or UAVs release hundreds of attack drones that autonomously navigate and strike designated targets. These drones use onboard AI (computer vision and target-recognition) to identify individuals or vehicles, even using facial recognition to execute precise strikes. Such swarms make defense extremely difficult: they can overwhelm air defenses with sheer numbers and coordination.

- Decision-Support & Planning: High-end generative AI models (similar to ChatGPT or Google’s Gemini) have been incorporated into war planning rooms. These systems help planners quickly simulate “what-if” scenarios – e.g. predicting enemy movements or logistic bottlenecks – and recommend courses of action. The Pentagon’s new “GenAI.mil” initiative even provides frontline units with generative models for mission briefings and intelligence summaries. This kind of AI acts like an ultra-fast aide, pulling together information from diverse sources (maps, intelligence reports, historical data) so commanders can make timely choices.

- Cyber and Electronic Warfare: On another front, AI is being used to conduct cyber attacks and electronic warfare autonomously. Advanced algorithms try to hack or jam Iranian communications, air defenses and missile guidance systems, often in real time. Reports also indicate the U.S. is using AI to scan Iranian networks for vulnerabilities and deploy countermeasures with minimal human intervention (similar to how AI was used in Ukraine). This cyber domain use of AI extends America’s reach without putting troops in harm’s way.

- Logistics & Maintenance: Even behind the scenes, AI is streamlining warfighting. AI-driven logistics platforms dynamically route supplies, drones, and even repair crews where they are needed. Predictive maintenance uses AI to forecast when jets, tanks or missiles will fail and schedules upkeep before breakdowns occur. One U.S. official noted that by automating routine workflows, AI accelerates resupply and keeps weapons flying more consistently.

Expert Tip: Think of AI in this war as an ultra-intelligent assistant. It crunches data and runs simulations in minutes, helping human commanders decide what to do. But humans still decide whether to strike.

Why AI Matters Now

The timing of AI’s role in the US–Iran war is no accident. The past decade of conflict (from drone campaigns in the Middle East to Russia’s 2022 invasion of Ukraine) has been a live testbed. Planners learned that whoever harnesses data and AI effectively can react far more quickly. This is especially important in 2026:

- Pace of Modern Warfare: Conflicts now unfold much faster. Missiles travel at supersonic speeds; drones swarm in seconds. If analyses or orders lag, opportunities are lost and lives are at risk. AI ensures U.S. commanders are not working “days or weeks” behind reality. Every second counts – as the Guardian notes, AI could soon “fire faster, or respond to incoming fire more quickly, than humans can”.

- Data Overload: Modern battlefields produce more data than humans can parse. Reconnaissance satellites, drones, signals intercepts, social media chatter – it’s overwhelming. AI tools filter these “mountains of information” and present only critical insights.

- Shaping Outcomes: Early in the war, U.S.-led strikes quickly degraded Iran’s capabilities (e.g. destroying naval assets and missile sites). AI played a key role in selecting those high-value targets and coordinating simultaneous strikes. By rapidly decapitating Iran’s command and air defenses, U.S. forces effectively achieved de facto air supremacy without a traditional ground invasion. In wars, early dominance often decides the outcome; AI has amplified that effect.

- Psychological Impact: The shock of being attacked by near-autonomous systems can demoralize defenders. When Iran witnessed missile strikes hitting precision-chosen targets (like leadership bunkers or mobile missile batteries) in real time, it reinforced the U.S. message of invincibility. Moreover, publicizing clips of AI-controlled drones or commanders using holographic maps can project power to domestic and global audiences.

For example, Admiral Brad Cooper emphasized that US forces are making “smarter decisions faster than the enemy can react”. In effect, the U.S. has turned the technological realm into a force multiplier. AI isn’t just an experimental tool here; it’s central to strategy – what one Pentagon memo called a full “AI Acceleration Strategy” aiming for an “AI-first” military force. In this war, speed truly is victory.

Background: From Drones to AI Wars

To understand today’s shift, we must trace recent history. In the 2010s and 2020s, militaries gradually adopted unmanned drones, robotic systems and basic AI aids. Key milestones include:

- Drone Warfare (2000s–2020s): The U.S. pioneered armed drones (like the MQ-1 Predator and MQ-9 Reaper) in Afghanistan and Iraq. By the 2010s, drones conducted precision strikes, but always directed by human controllers. In the 2020s Ukraine war, drones proliferated further – not only American but also Turkish and locally-made systems, some with rudimentary autonomy (e.g., swarming Bayraktar TB2s).

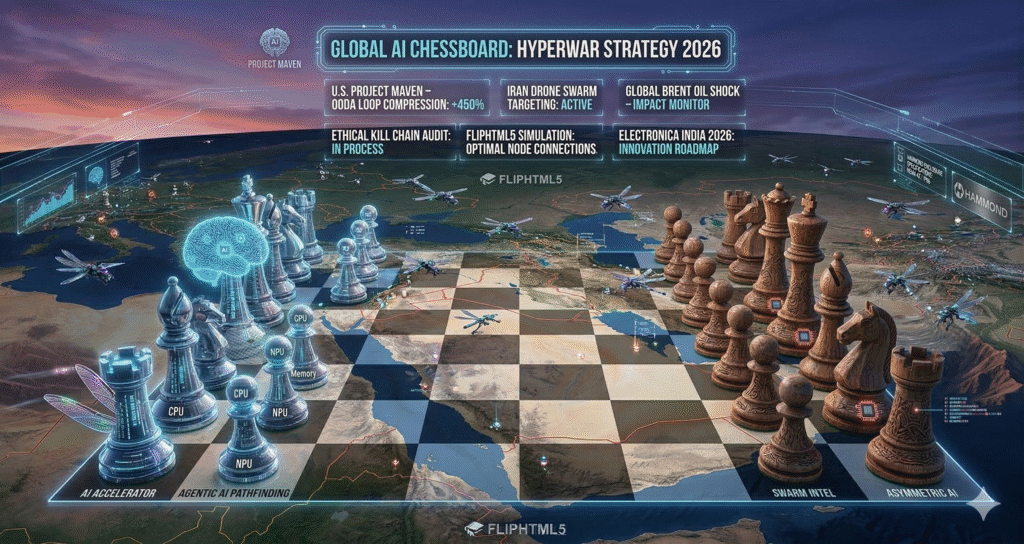

- Big Data & AI (2010s): Parallel to drones, big data analytics and machine learning began to enter military intelligence. Systems like the U.S. DoD’s Project Maven used computer vision to scan video feeds. These efforts remained largely experimental until 2022.

- AI in Ukraine (2022–2025): The conflict in Ukraine proved a turning point. Russia and Ukraine both used AI tools – for targeting, logistics (open-source maps, predictive maintenance), and even autopiloting drones. The U.S. tracked these innovations. Post-Ukraine, key Pentagon thinkers realized that future wars would be “AI wars”. By 2024, the U.S. commissioned new strategies (e.g. the “Department of War” AI Acceleration Strategy, and formal Department of Defense AI strategy memos) to integrate AI widely.

- Anthropic & Palantir Partnership: Notably, in 2024 Palantir (a defense tech firm) integrated Anthropic’s large language model Claude into Pentagon systems. This allowed military analysts to query an LLM for intelligence reports and battle-planning. Although controversial, it foreshadowed this year’s use of generative AI in wartime decisions.

- International Debate: Meanwhile, global discussions on autonomous weapons have accelerated. Nations at the UN convened on AI and warfare, with many urging a pause or treaty. But no binding agreement materialized. Instead, tech-capable nations raced ahead. Thus by 2026, the tools were in place just as conflict flared.

By the time missiles flew in February 2026, the stage was set. The U.S. war machine had learned from past conflicts and built an AI-empowered workflow. Iran, constrained by sanctions and slower tech development, could not match this head-start. The result: a demonstrable AI asymmetry in favor of the U.S. and its partners.

AI vs. Human Decision-Making

A recurring theme is the interplay between AI recommendations and human control. All open sources emphasize that humans are ultimately in charge of lethal decisions. For instance, CENTCOM’s Brad Cooper stressed, “humans will always make final decisions on what to shoot”. Similarly, Georgetown’s Lauren Kahn notes, AI can assist but not fully decide who lives or dies – that line is not crossed… yet.

However, AI compresses the decision timeline so tightly that critics worry about “rubber-stamping” automated plans. The Guardian quotes experts saying that AI could produce legally vetted strike plans so quickly that human officers might merely approve them with little scrutiny. In practice, a human general might get a list of recommended targets every few minutes instead of hours. The ethical imperative then is to ensure oversight: laws of armed conflict (Geneva Conventions) require combatants to distinguish civilians and use proportional force. Whether AI helps or hinders that is debated.

From the U.S. perspective, doctrine still mandates human “eyes on the key button”. But the line is blurry. As Lauren Kahn points out, it’s getting “hard to tell where AI starts and stops” in the system. This has fueled calls (even by India’s own defense chief) for international rules on weapons autonomy. For now, the U.S. insists on a “human in the loop” policy, but critics note that error-prone AI could make mistakes – as possibly seen in the tragic school strike.

Did You Know? In this war, U.S. military leaders have warned domestic tech firms not to refuse AI contracts again. The company Anthropic, for example, sued the Pentagon earlier in 2026 after objecting to its tech being used in weapons. Yet the administration responded that “warriors will never be held hostage by unelected tech executives” – highlighting how pivotal AI has become to national security.

Key Developments & Examples

Lightning-Fast Strike Planning

One of the most eye-opening aspects has been the scale and speed of coordinated strikes. In the first day of major operations, U.S. and Israeli forces launched nearly 900 precision strikes on Iranian targets. Many of these strikes were reportedly planned with the aid of Anthropic’s AI model Claude, integrated into command systems. This AI analyzed satellite images, drone feeds and intercepted communications to prioritize which missile sites, radars, and leadership bunkers to hit first.

Defense experts describe this as “decision compression” – AI collapses planning time dramatically. For example, an automated system might flag a convoy of Iranian missiles trying to relocate, and within minutes calculate the optimal air strike location, weapon type, and legal justification. In past wars, compiling all that intel and approval could take hours or days. Now it can happen in seconds or minutes. The Guardian noted that systems like Palantir’s can “identify and prioritise targets and recommend weaponry, accounting for stockpiles and performance”. This has undoubtedly enabled U.S. forces to stay several steps ahead of Iranian responses.

AI-Enabled Drones & Autonomous Warfare

Another stark example is the use of AI-enabled drone swarms. Iranian media have reported (and Israeli sources confirmed) that Israeli forces, often with U.S. drone support, used a new “mother ship” method. In this approach, large transport drones release dozens of smaller attack drones mid-air. Each of these smaller drones is equipped with AI and a target database. They can independently scan an area, use facial recognition to single out targets (for instance, known IRGC officers), and strike with high precision. This was seen in the surprise strikes on Tehran militia checkpoints. The advantage: multiple drones can coordinate (via networked AI) to blanket an area quickly, overwhelming defences and minimizing collateral damage.

Notably, the news reports emphasize that American drones are also in play. U.S. MQ-9 Reapers and other UAVs have been active, some possibly using AI pilot assistance to optimize flight paths and evade defenses. While exact details remain classified, it is clear that the alliance’s drone operations have outpaced Iran’s largely Soviet-era air defence systems. With air superiority secured, U.S./Israeli drones can operate with little interference – multiplying the strategic impact of each strike.

Data and Cyber Warfare

Beyond kinetic effects, AI has bolstered the cyber and information fronts. The U.S. reportedly used AI to scan Iranian cyber defenses for vulnerabilities even before hostilities began. Some unverified reports suggest that AI-driven malware has attempted to disable Iranian missile networks. On the information side, AI is being used for psychological operations as well: deepfakes and AI-generated propaganda attempt to sway Iranian public opinion and demoralize troops.

In another domain, the administration-backed tech push means the U.S. can deploy cutting-edge AI cyber tools that Iran’s sanctions-constrained defense sector cannot match. Chinese analysts have already noted that overreliance on AI could be dangerous, and, as Reuters reported, China has publicly warned that giving algorithms “power to determine life and death” risks runaway scenarios. These global reactions highlight how the US-Iran war has become a flashpoint in the broader debate over autonomous warfare.

Expert Insight: David Leslie, an AI-and-ethics professor, observed that “the AI machine is making recommendations… much quicker than the speed of thought”. This epitomizes the current edge: not that AI replaces generals, but that it multiplies their cognitive reach. Meanwhile, Prerana Joshi of RUSI stresses that AI “improves productivity and efficiency” for decision-makers – a real boon in a fast-moving conflict.

Stakeholders and Global Implications

The key stakeholders in this AI arms race are obvious: the U.S. military and its close allies (Israel, NATO), Iran’s military, and the global tech firms that supply AI models. India also watches closely. As a regional power with its own security concerns, India must assess how AI warfare affects its strategic calculations. For UPSC readers, the India angle is important: India has its own AI roadmap (the Manav vision, defense AI initiatives) but also upholds democratic values and legal norms. The conflict raises questions for India about balancing strategic autonomy (if China or Pakistan adopt similar tech) with humanitarian principles.

Internationally, this war is straining norms. Human rights groups and some nations (like China and Russia) are calling for limits on autonomous weapons, fearing “algorithms gaining power over life and death”. Meanwhile, U.S. officials argue for unrestrained use: a Pentagon spokesperson bluntly said “we will decide, we will dominate, and we will win” with these tools. For the United Nations and Geneva Conventions, the situation is urgent: existing laws did not anticipate AI’s pace. In practice, U.S. commanders assure compliance by keeping final decision power with humans, but Nature’s editorial warns that “these warnings need to be heard”. The editorial notes that the U.S. and Israel’s strikes using AI have brought such debates into the open; even top Indian defense officials have publicly stressed that “AI and automated systems are transforming warfare”.

For stakeholders, the war’s outcome will influence military procurement, defense alliances, and even domestic policy (privacy, tech export controls). India, for example, will likely double down on its own AI capabilities (see Aquartia Blog: India’s Defence Tech Revolution for more on that topic). It also must consider ethics: Indian laws on unmanned systems and privacy may need revision if AI in conflict escalates. Globally, allies like NATO are monitoring U.S. AI use to decide whether to follow suit, while adversaries (Russia, China, Iran) accelerate their own AI weapons programs in response.

Benefits and Advantages of AI in Warfare

In purely military terms, AI provides the following advantages to the U.S. side:

- Decision Superiority: AI compresses the observe-orient-decide-act (OODA) loop. Commanders receive near-instant analysis of battlefield data, giving them better situational awareness. This decision advantage can be decisive when battles hinge on small timing differences.

- Precision and Reduced Casualties: By analyzing targeting data more accurately, AI can enable more precise strikes. For example, using facial recognition or behavior-pattern analysis, AI drones can target specific insurgent leaders, reducing collateral damage to civilians. (Tragic incidents have nonetheless occurred, and U.S. officials are investigating whether those strikes followed legal protocol.)

- Force Multiplier: Human forces are limited in number and attention. AI systems can take on routine monitoring and analysis tasks, freeing soldiers to focus on creative strategy. Autonomy in logistics also allows smaller forces to project power at scale.

- Adapting Quickly: AI algorithms can learn from each engagement. If Iranian air defenses change tactics, AI systems can rapidly re-train (with new data) to counter them. This agility is hard to match without AI.

- Allied Interoperability: With standardized AI tools (like common databases and models), U.S. and Israeli forces can seamlessly share intelligence. The Syria and Gaza conflicts already saw combined US-Israeli AI usage; in this war, that synergy gives them a unified front.

Example: Collapsing a Command-and-Control Node

In one reported incident, U.S. forces detected an Iranian mobile command bunker relocating. Traditionally, tracking a mobile target takes hours (involving many sensors and analysts). Here, AI fused signals intelligence and drone imagery to pinpoint the bunker’s route. In a window of just minutes, it suggested an airstrike plan to commanders – which was executed swiftly. The result: the bunker was destroyed before it could issue orders, isolating Iranian units. This was hailed as a textbook “AI-enabled decapitation strike.”

Challenges and Risks

Despite advantages, the AI approach carries serious risks:

- Civilian Harm: Rapid, automated targeting raises the chances of mistakes. If an algorithm misidentifies a building, the strike could hit civilians. The southern school bombing (killing children) has raised alarms. Critics argue such incidents show the need for human judgment. Even U.S. officials have faced calls for investigations into how the strike happened.

- Ethical Concerns: Scholars warn of “cognitive offloading” – when humans feel detached from killing because a machine did the analysis. There’s a danger of eroding accountability. Humans in the loop might simply approve AI’s plan without deep review, as Nature’s editorial cautions.

- Overreliance: Dependence on AI could be catastrophic if systems fail or are spoofed. Iran (reportedly) uses its own drones and possibly jamming to try to mislead U.S. sensors. If AI misreads a situation (for example, civilian movement as military retreat), the result could be disaster.

- Arms Race: The open demonstration of AI’s power could prompt global proliferation. If every future war involved armies of AI drones, the barrier to entering a war would fall. Nations may feel compelled to strike preemptively if they know the other side has AI advantages, potentially making conflicts more likely.

- Escalation of Violence: AI’s speed may outpace diplomacy. A misinterpreted action could spiral faster than humans can broker a ceasefire. Cyber-AI attacks could trigger uncontrollable feedback loops.

Did You Know? Within hours of the war’s start, social media was flooded with AI-generated “war updates” (some false) as actors used large language models to influence opinion. This information warfare front adds another layer: citizens on all sides now face not just missiles, but “deepfake” content and AI-driven propaganda.

Future Outlook

Looking ahead, AI’s role will only grow. Potential developments:

- Fully Autonomous Systems: Research by DARPA and others aims to field drones that can operate semi-independently for surveillance or combat. In future conflicts, we may see armed drones execute missions with minimal human input, provided strict oversight is in place.

- Lethal AI Agents: Some fear lethal autonomous weapons systems (LAWS). While current policy forbids fully autonomous kill decisions, technology is advancing so fast that policymakers worldwide are scrambling to legislate or ban them.

- AI Defense Countermeasures: Iran and other states will invest in AI themselves – not only for offense but to defend. This includes AI radar, anti-drone AI, and electronic warfare systems that can jam or deceive incoming AI-guided weapons.

- International Regulation: If the war’s civilian toll rises due to AI, pressure will mount for an international treaty on autonomous weapons (akin to chemical weapons bans). India will likely support balanced norms, given its ethics stance (Manav AI principles).

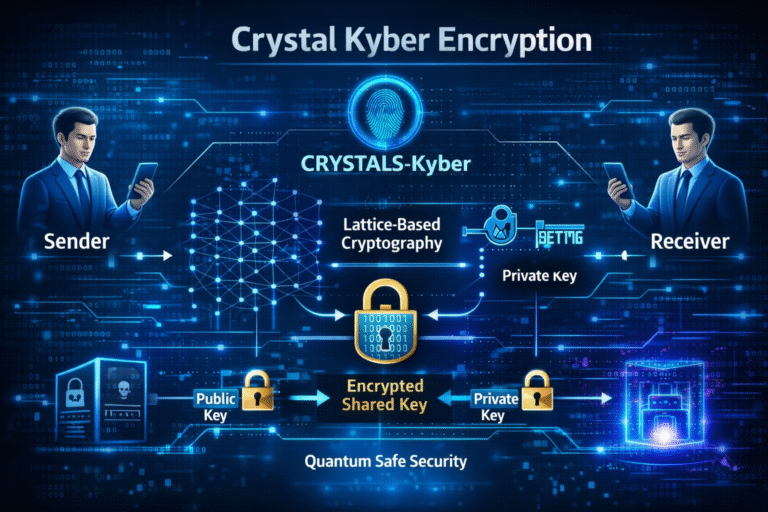

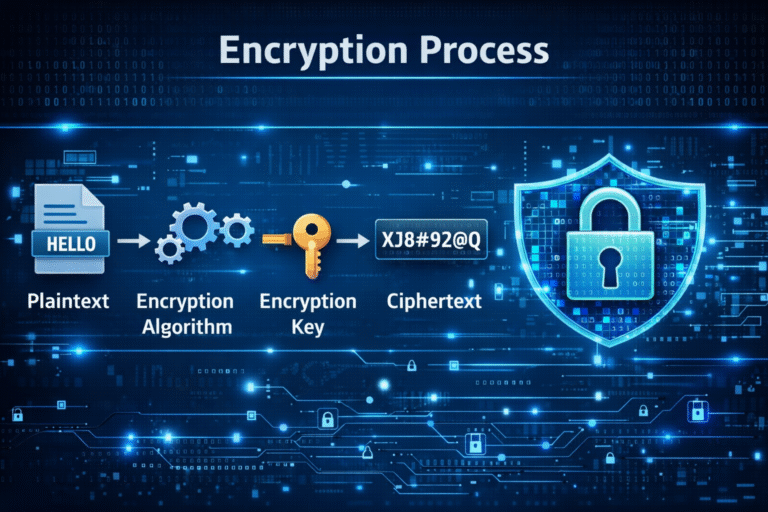

- Wider AI Arsenal: Beyond drones and data, expect AI in space and cyber to be integrated. Satellites with AI might autonomously track and target anything on Earth below. Quantum computing on the horizon could break encryption, giving further advantages to the technologically advanced side.

Quick Comparison Table: US-led Coalition vs Iran (AI & Tech Capabilities)

| Aspect | US & Allies | Iran |
|---|---|---|
| AI R&D & Industry | Cutting-edge (US tech firms, Pentagon AI strategy) | Restricted by sanctions; limited indigenous AI development |
| Drones & Robotics | Advanced UAVs (Predator, Reaper, MQ-25, Israeli HERON etc) with AI navigational aids | Smaller domestic drones; some purchases (e.g. from China), but lacking AI autonomy |
| Targeting & Sensors | Networked sensors, satellite constellations, AI analytics (Palantir, commercial AI) | Traditional radar, limited satellite (some Chinese support), less integrated AI analysis |
| Battlefield Data | Real-time data fusion from multiple sources (IMINT, SIGINT, HUMINT) via AI | Fragmented intel; reliant on human spy networks (IRGC, proxies) |
| Cyber/EW | Offensive cyber-AI units, electronic warfare jamming AI | Defensive jammers, attempts to counterfeit or hack drones, less sophisticated AI tools |
| Leadership & Doctrine | Clear AI-first doctrine (e.g. “AI dominates, speed defines victory”) | Official statements hostile to AI use (prefers mullah-commanded decisions); no formal AI war doctrine |
| International Constraints | Respected (until proven otherwise) by allies; accused by some neighbors of “AI aggression” | Denounces AI strikes as war crimes (calls for UN probes) |

India and Global Order

For India and other major powers, this war is a watershed. India must watch carefully: while it seeks technological self-reliance, it also champions international law. The conflict shows India’s interests in two ways:

- Self-Reliant Defense: India has its own AI initiatives (AIKosh data platform, maritime drones, etc.). As noted in Aquartia’s India’s Defence Tech Revolution: Aatmanirbhar Bharat, Delhi wants a strong domestic defense R&D ecosystem. The US-Iran war underscores why: to avoid dependence on others’ tech (especially if China objects), India needs its own AI for surveillance (e.g. Himalayas), intelligence, and logistics.

- Diplomacy & Ethics: India’s rise on the world stage includes pushing for ethical tech use. New Delhi has been active in international forums (G20 AI Principles, UN initiatives). The speed of this war might push India to advocate a diplomatic solution or restraint on fully autonomous weapons. India’s constitution and traditions emphasize restraint, which could guide its global stance on AI arms control.

Regionally, an AI-heavy outcome could destabilize the Middle East balance of power. If Iran is severely weakened, countries like Saudi Arabia or Pakistan may see a security vacuum – possibly triggering an AI arms race in South Asia. India’s policy-makers will factor this into strategy (including military modernization and alliances).
