As of spring 2026, the landscape of generative artificial intelligence has undergone a seismic transformation. Image generation is no longer characterized by disjointed visual artifacts, blurry background elements, or robotic abstractions.

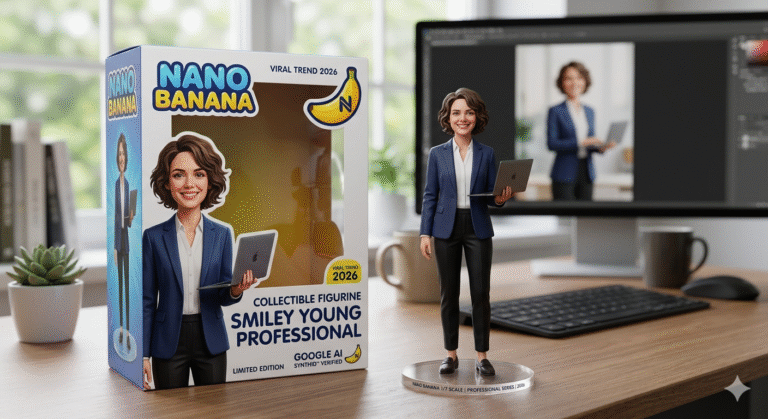

Instead, the introduction of Google’s Gemini 3.1 Flash Image, internally known by its viral codename “Nano Banana 2”, has entirely redefined the intersection of photorealism, reasoning speed, and semantic accuracy.

Initially tested under an anonymous pseudonym on the LM Arena in August 2025, the Nano Banana architecture quickly gained viral traction for its hyper-realistic aesthetic and unparalleled text rendering capabilities.

With the official global rollout of Nano Banana 2 on February 26, 2026, creators and enterprise developers gained access to a production-scale visual creation engine. This new architecture merges the deep reasoning intelligence of the Gemini 3 series with lightning-fast generation speeds.

This comprehensive report serves as an exhaustive analysis of the Google Gemini AI photo ecosystem in 2026. It details technical mechanisms, strategic policy implications, and practical implementation frameworks for serious knowledge-seekers.

Why This Topic Matters Today

The democratization of high-fidelity visual generation represents a fundamental shift in digital media, commerce, and national security.

Historically, achieving character consistency across multiple AI-generated images or rendering highly legible typography required disjointed workflows, manual masking, and expensive post-production software.

Today, models like Gemini 3 Pro Image (Nano Banana Pro) can execute complex multi-turn editing and maintain strict identity locks using up to 14 visual references within a single conversational prompt.

However, this unprecedented generative capability also introduces profound socio-political challenges. The ability to create stunning, indistinguishable digital realities in seconds has necessitated sweeping global regulatory frameworks.

For professionals, researchers, and candidates preparing for policy-centric examinations such as the UPSC, understanding both the technical utility of Gemini and the legislative guardrails surrounding it is indispensable.

Familiarity with frameworks like India’s Information Technology Amendment Rules of 2026 and the M.A.N.A.V. vision is now a prerequisite for operating in the digital economy. Understanding these shifts ensures professionals can leverage AI innovation without running afoul of stringent compliance mandates.

Key Highlights

- Architectural Overhaul: The deprecation of Imagen 4 endpoints by June 2026, funneling all enterprise traffic into the unified Gemini 2.5 and 3.1 Flash Image infrastructure.

- Advanced Reference Allocation: The strict utilization of the 14-reference image allocation limit (10 object fidelity plus 4 character consistency inputs) for brand and character synchronization.

- Thinking Mode Integrations: The application of deep multimodal reasoning budgets to process massive contextual datasets prior to pixel generation.

- Regulatory Compliance Mandates: The impact of India’s M.A.N.A.V. vision and the mandatory 3-hour takedown windows for synthetic content under the 2026 IT Rules.

- Troubleshooting Workflows: Resolving persistent 429 RESOURCE_EXHAUSTED errors and navigating the hidden 0 IPM (Images Per Minute) limits on free API tiers.

Background / Context

To fully comprehend the current state of Google’s generative media suite, one must trace the rapid technological deprecation cycles characteristic of the 2025–2026 timeline.

For years, the Imagen series served as the primary workhorse for Google Cloud’s Vertex AI and consumer ecosystems. The Imagen 4 models introduced critical enterprise features such as digital watermarking, C2PA content credentials, and granular output resolution manipulation.

Models like imagen-4.0-fast-generate-001 offered high throughput, while imagen-4.0-ultra-generate-001 prioritized maximum visual fidelity. However, the underlying diffusion architecture was fundamentally constrained.

Imagen 4 lacked native support for complex subject customization using few-shot learning, mask-based inpainting, outpainting, or negative prompting. Recognizing these critical bottlenecks, Google initiated a massive infrastructure migration strategy.

Official documentation now mandates that developers update their model endpoints before June 30, 2026. This requires transitioning legacy endpoints, from imagegeneration@002 through 006, directly to the unified gemini-2.5-flash-image and subsequently to the gemini-3.1-flash-image pipelines.

This migration is not merely a rebranding exercise; it represents a hard transition from isolated diffusion models to natively multimodal systems. In these new systems, image generation is inextricably linked with a 131,072-token context window and real-time Image Search Grounding.
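A migration of this kind can be tracked with a small lookup helper. The sketch below is illustrative only: the model identifiers are the ones named in this article, while `migrate_model_id`, `LEGACY_MODEL_IDS`, and the choice of default target are hypothetical and do not represent an official mapping.

```python
# Sketch: rewrite legacy image model IDs to a Gemini image model ID.

# Default target is an assumption for illustration; use the model your project has validated.
DEFAULT_TARGET = "gemini-2.5-flash-image"

# Legacy identifiers mentioned in the article (imagegeneration@002 through @006).
LEGACY_MODEL_IDS = {f"imagegeneration@{n:03d}" for n in range(2, 7)}


def migrate_model_id(model_id: str, target: str = DEFAULT_TARGET) -> str:
    """Return the replacement ID for a legacy model, or the input unchanged."""
    if model_id in LEGACY_MODEL_IDS or model_id.startswith("imagen-4.0-"):
        return target
    return model_id
```

Running a helper like this over configuration files before the deadline makes it easy to list which services still point at a retiring endpoint.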

Core Explanation

The Gemini image generation ecosystem is currently bifurcated into two primary operational branches: models optimized for rapid throughput and models optimized for profound contextual reasoning.

What is Nano Banana 2 (Gemini 3.1 Flash Image)?

Released in late February 2026, Nano Banana 2 acts as the commercial standard for high-efficiency visual creation. It combines the deep reasoning power previously reserved for Pro models with the speed of Google’s Flash architecture.

It accepts both text and multimodal inputs (Image/PDF) and can output multiple resolutions ranging from 0.5K to 4K across vast aspect ratios, including extreme formats like 1:8 and 8:1.
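A request can be sanity-checked against these limits before it is sent. The following is a minimal sketch: it assumes the supported sizes are the four tiers listed in this article and treats the 1:8 to 8:1 range as continuous, which may not match the discrete ratios the service actually accepts. The function name `is_supported_request` is hypothetical.

```python
from fractions import Fraction

# Size tiers and ratio bounds as described in the article (assumed, not verified against the API).
SUPPORTED_SIZES = {"0.5K", "1K", "2K", "4K"}
MIN_RATIO = Fraction(1, 8)
MAX_RATIO = Fraction(8, 1)


def is_supported_request(size: str, width: int, height: int) -> bool:
    """Check a size tier and a width:height aspect ratio against the stated limits."""
    if width <= 0 or height <= 0:
        return False
    ratio = Fraction(width, height)
    return size in SUPPORTED_SIZES and MIN_RATIO <= ratio <= MAX_RATIO
```

Using `Fraction` keeps the boundary comparisons exact, so 8:1 passes and 9:1 fails without floating-point surprises.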

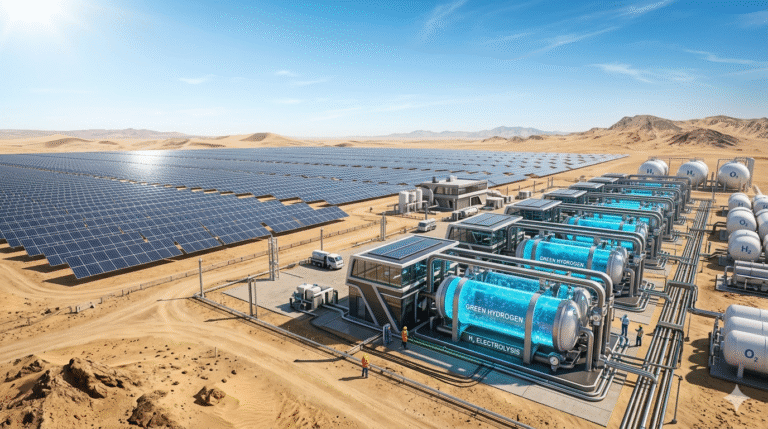

The model uniquely features a native “Thinking Mode” and real-time Image Search Grounding. This allows the AI to autonomously cross-reference global knowledge bases to ensure that architectural, anatomical, and geographical elements in the generated photo are perfectly accurate.

What is Nano Banana Pro (Gemini 3 Pro Image)?

For studio-quality workflows requiring absolute technical precision, the Gemini 3 Pro Image model serves as a professional design engine.

It features a robust reasoning core specifically designed for complex multi-turn editing and highly legible text rendering. Crucially, it provides stable identity locking for consistent character generation.

This means that facial features, core anatomical structures, and specific product branding remain identical even when poses, lighting, and backgrounds are entirely reimagined across dozens of different prompts.

How “Thinking Mode” Transforms Prompting

One of the most defining upgrades in 2026 is the integration of “Thinking Mode” into the image generation pipeline. When activated via the Google Vertex AI platform, the model does not immediately generate pixels.

Instead, it utilizes a configurable “Thinking budget” to deeply interpret complex user goals, analyze multi-page reference documents (such as corporate brand guidelines), and logically plan the visual composition before rendering.

However, this feature introduces new operational bottlenecks. Users processing heavy inputs—such as combining a 30-page PDF with detailed style instructions while Thinking Mode is active—frequently encounter session timeouts.

To mitigate this, developers recommend isolating relevant text sections, splitting tasks into distinct analysis and generation phases, or manually adjusting the thinking budget slider via the Google AI Studio console.
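The "isolate, then split into two phases" advice can be sketched as follows. This is an assumption-laden outline rather than a real client: `summarize` and `generate_image` are hypothetical callables standing in for whatever text and image calls a project uses, and the keyword filter is a deliberately simple stand-in for section selection.

```python
from typing import Callable, Iterable, List


def select_relevant_sections(sections: Iterable[str], keywords: Iterable[str]) -> List[str]:
    """Keep only sections that mention at least one keyword (case-insensitive)."""
    lowered = [k.lower() for k in keywords]
    return [s for s in sections if any(k in s.lower() for k in lowered)]


def two_phase_generate(
    sections: Iterable[str],
    keywords: Iterable[str],
    summarize: Callable[[str], str],
    generate_image: Callable[[str], object],
    style: str,
):
    """Phase 1 condenses the relevant text; phase 2 sends only the short brief onward."""
    relevant = select_relevant_sections(sections, keywords)
    brief = summarize("\n\n".join(relevant))  # analysis phase
    return generate_image(f"{brief}\n\nStyle instructions: {style}")  # generation phase
```

Because the image call only ever sees the condensed brief, the heavy document never shares a request with pixel generation.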

Key Stakeholders and The 14-Reference Architecture

A persistent challenge in early AI photography was the inability to keep a subject looking identical across different frames. Gemini addresses this through a structured reference image architecture supporting up to 14 visual inputs.

A critical operational nuance, often misunderstood by enterprise stakeholders, is the strict systemic allocation of these 14 slots. In the Gemini 3.1 Flash Image Preview, the system hardcodes specific category limits.

The system permits a maximum of 10 images dedicated to “Object Fidelity” (ensuring products, merchandise, or props remain identical). Simultaneously, it permits 4 images dedicated to “Character Consistency” (maintaining human facial and bodily structures).

A prompt will immediately fail to achieve the desired outcome if a user attempts to upload 14 character faces and zero object references, as this violates the backend categorical quotas. Proper allocation is the key to mastering commercial AI photography.
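The 10 + 4 split described above is easy to check before uploading anything. The sketch below encodes the limits exactly as this article states them; the function name and the error messages are hypothetical.

```python
MAX_OBJECT_REFS = 10     # "Object Fidelity" slots, per the article
MAX_CHARACTER_REFS = 4   # "Character Consistency" slots, per the article


def check_reference_allocation(object_refs: int, character_refs: int) -> list:
    """Return a list of problems; an empty list means the split fits the quotas."""
    problems = []
    if object_refs < 0 or character_refs < 0:
        problems.append("reference counts cannot be negative")
    if object_refs > MAX_OBJECT_REFS:
        problems.append(
            f"{object_refs} object references exceed the limit of {MAX_OBJECT_REFS}"
        )
    if character_refs > MAX_CHARACTER_REFS:
        problems.append(
            f"{character_refs} character references exceed the limit of {MAX_CHARACTER_REFS}"
        )
    return problems
```

For example, `check_reference_allocation(0, 14)` reports one problem even though the total is 14, because all 14 images fall into the character category.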

Conceptual Breakdown

To effectively deploy these modern generative models, a deep understanding of their precise technical parameters and API constraints is required.

Model Specifications and Rate Limits

| Specification / Property | Gemini 3.1 Flash Image (Nano Banana 2) | Gemini 3 Pro Image (Nano Banana Pro) | Legacy Imagen 4 (Deprecated mid-2026) |
| --- | --- | --- | --- |
| Input Modality | Text, Image, PDF | Text, Image, PDF | Text only |
| Max Context Window | 131,072 tokens | 1 Million+ tokens | Not Applicable |
| Output Resolutions | 0.5K, 1K, 2K, 4K | Up to 4K Studio Quality | Max 2816×1536 |
| Aspect Ratios | Extreme variations (1:8 to 8:1) | Extreme variations (1:8 to 8:1) | Standard (1:1, 16:9, 9:16, 4:3, 3:4) |
| Character Consistency | Supported (14 reference split) | Supported (Strict Identity Lock) | Not Supported |
| Text Rendering | High precision typography | Flawless, precise typography | Basic / Often garbled |
| API Cost Per Image | $0.067 | $0.134 | Varies by throughput type |
| Free Tier API Quota | 0 IPM (Requires Billing for Tier 1) | 0 IPM (Requires Billing) | Available on Fast models |

Data sourced from Google Vertex AI documentation and official pricing tables as of March 2026.
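The per-image prices quoted in the table translate directly into a budget estimate. In this sketch the dictionary keys are labels chosen for illustration, not API model IDs, and the prices are simply the figures quoted in this article.

```python
from decimal import Decimal

# Per-image prices in USD as quoted in the article; keys are illustrative labels only.
PRICE_PER_IMAGE_USD = {
    "nano-banana-2": Decimal("0.067"),
    "nano-banana-pro": Decimal("0.134"),
}


def estimate_cost_usd(model_label: str, image_count: int) -> Decimal:
    """Multiply the quoted per-image price by the number of images."""
    if image_count < 0:
        raise ValueError("image_count must be non-negative")
    return PRICE_PER_IMAGE_USD[model_label] * image_count
```

At these rates, 1,000 Flash images and 500 Pro images cost the same, since the Pro price is exactly double. `Decimal` is used so the totals do not pick up binary floating-point rounding.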

Expert Tip: When building automated pipelines, developers must note that the free tier for Gemini image APIs effectively instituted a 0 Images Per Minute (IPM) limit in late 2025. To avoid instantaneous 429 errors, ensure that billing is enabled to activate Tier 1 access, even if your usage is purely experimental.

Real-World Examples / Case Studies

The shift from basic diffusion models to reasoning-based engines like Nano Banana 2 requires a fundamentally different approach to prompt engineering. The most effective outputs in 2026 rely on structured, formulaic logic.

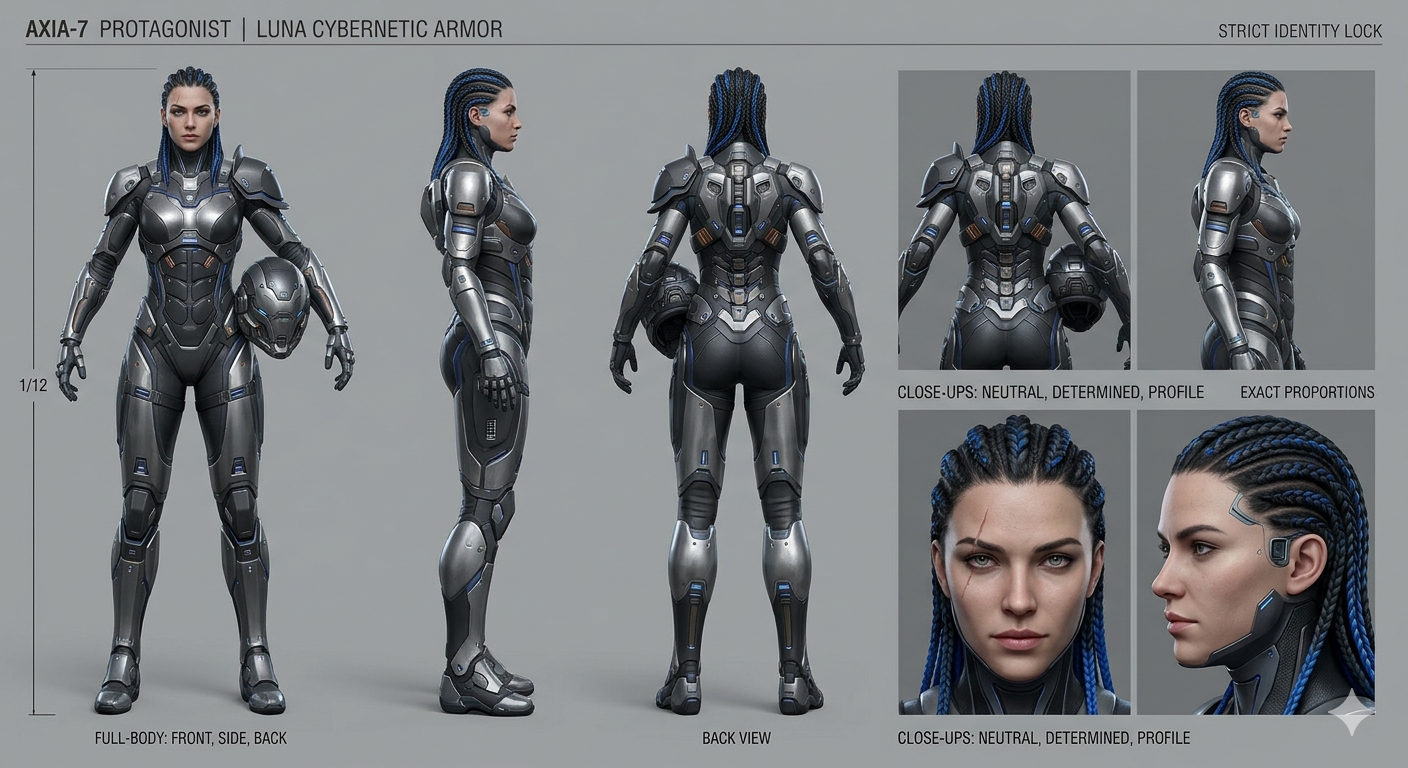

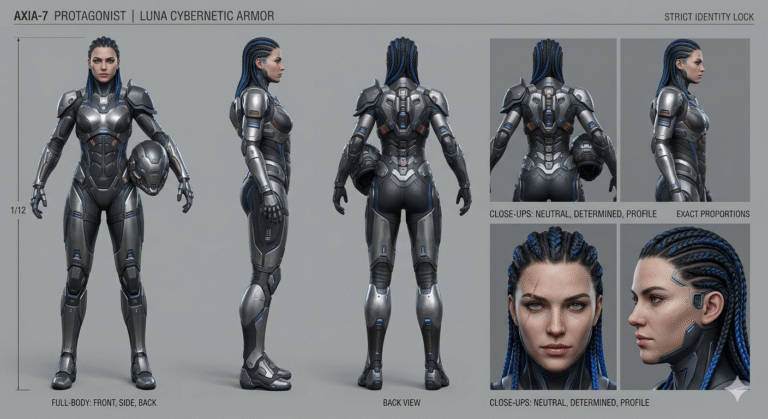

Case Study 1: The Character Sheet Generation

For digital creators requiring uniform assets for 3D modeling or graphic novels, generating a character turnaround sheet is essential. An optimal prompt utilizes clear spatial instructions and camera logic to guide the AI’s internal reasoning.

- Prompt Architecture: “DSLR Character Sheet on a grey backdrop. With three face profiles (front, 45°, and side) and four full body portrait profiles (front, 45°, side, and back). Photographed with a Sony Alpha 7IV with 24-70mm lens. Maintain consistency. The model depicted is Emma. Emma has emerald eyes, bronze shoulder-length wavy hair with a reddish hue, and a rose tattoo on her left collarbone.”

- Result: The model natively understands the spatial layout required for a turnaround sheet and locks the specified visual traits across all seven distinct profiles without structural degradation.

Case Study 2: E-Commerce Product Integration

When integrating specific physical products into lifestyle environments, commercial users must leverage the Object Fidelity quotas effectively.

- Prompt Architecture: + [Environmental Context] + +.

- Execution: “Integrate the uploaded coffee packaging into a cozy independent bookshop interior. Golden afternoon light streaming through dust-flecked windows, highlighting the texture of the packaging on a wooden counter. A striped cat napping in the background out of focus. Cinematic, warm, photorealistic, shot on an 85mm lens with shallow depth of field.”

Case Study 3: Professional Corporate Headshots

Using the 4-slot Character Consistency limit, users can transform casual selfies into studio-grade professional photography.

- Execution: “Professional corporate headshot of the subject in the reference image, mid-30s, wearing a charcoal tailored blazer over a white shirt. Soft studio lighting with a subtle gradient grey background. Neutral confident expression, slight smile, direct eye contact. Shot on an 85mm lens, magazine-quality sharpness.”

- Result: The 85mm lens instruction simulates flattering portrait compression, while the specific lighting instructions override the ambient lighting of the original reference selfie.
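The structured prompts in these case studies can be assembled programmatically. The sketch below is one possible decomposition; the four fields (subject, environment, lighting, camera) are an assumption made for illustration, not a formula taken from Google's documentation.

```python
def build_photo_prompt(subject: str, environment: str, lighting: str, camera: str) -> str:
    """Join the non-empty components into one prompt, one sentence per component."""
    parts = [subject, environment, lighting, camera]
    # Drop blank fields and trailing periods so the joiner controls punctuation.
    cleaned = [p.strip().rstrip(".") for p in parts if p and p.strip()]
    if not cleaned:
        raise ValueError("at least one prompt component is required")
    return ". ".join(cleaned) + "."
```

Keeping the components separate makes it straightforward to hold the subject fixed while varying only the lighting or lens instruction between generations.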

Advantages

The benefits of the Gemini generative suite extend well beyond mere visual aesthetics. Deep integration into the broader Google ecosystem transforms these tools from novelty generators into true enterprise solutions.

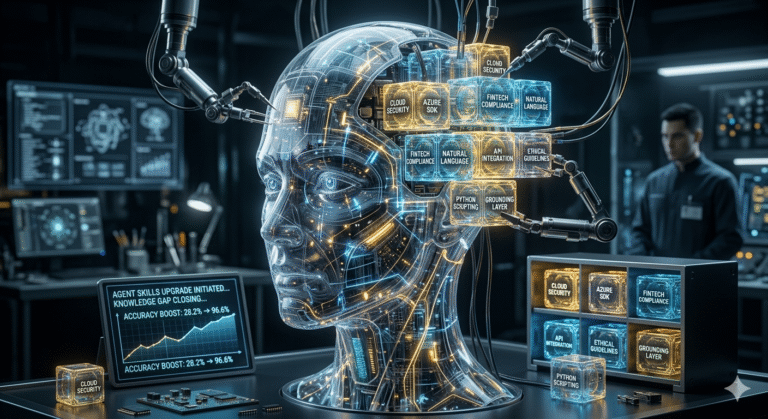

The Rise of Agentic Workflows

The integration of Project Mariner—Google’s advanced computer-use AI agent system—allows for the automation of complex, multi-step creative workflows.

These sophisticated AI agents can visually observe a user’s web browser, reason through a stated goal, and independently source reference images from Google Drive. Furthermore, they can autonomously write prompts and generate assets directly into Google Workspace applications like Docs and Slides.

For a deeper understanding of how these agentic systems are reshaping professional environments, read our extensive analysis at https://blog.aquartia.in.

Enterprise Scaling via AI Ultra

The Google AI Ultra subscription tier grants enterprise and advanced users access to a 1-million token context window.

This allows massive archives of corporate brand assets, typography guidelines, and historical marketing data to be analyzed simultaneously by the model.

This synergy of Flash speed, expansive multimodal memory, and automated reasoning enables marketing agencies to scale image and video production seamlessly into a daily, hyper-personalized content engine.

Did You Know: Through the AI Ultra Access add-on, teams can now scale video production using Veo 3.1 directly within Google Vids, generating professional-grade videos and AI avatars that seamlessly match the static images created by Nano Banana Pro.

Criticism

Despite its extraordinary capabilities, the Gemini 2026 ecosystem is fraught with technical, operational, and ethical hurdles that users must navigate carefully.

The “Ghost 429” Rate Limit Crisis

Developers and API users frequently battle an opaque and confusing rate-limiting system. The most notorious issue is the HTTP 429 RESOURCE_EXHAUSTED error. Analytical deep-dives in early 2026 reveal that this singular error code actually masks four distinct operational failures.

- Zero IPM Free Tier: As previously noted, the free tier for Gemini 3.1 Flash Image operates at zero Images Per Minute.

- RPM Burst Limits: Exceeding standard per-minute request allowances during high-volume generation scripts.

- Daily RPD Quotas: Exhausting overall daily usage limits set by the billing tier.

- The Ghost 429 Bug: A known infrastructure synchronization error affecting recently upgraded accounts, where the system fails to recognize the new tier status for several hours.
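Of the four causes listed above, only RPM bursts are likely to clear on a short retry; a zero-IPM free tier or an exhausted daily quota will keep failing however long a script waits. For the burst case, a common pattern is exponential backoff with jitter, sketched below. `RateLimitError` is a hypothetical stand-in for whatever exception a given client raises on HTTP 429, and the delay values are assumptions.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical stand-in for an HTTP 429 RESOURCE_EXHAUSTED response."""


def call_with_backoff(request, max_attempts=5, base_delay=1.0, max_delay=60.0, sleep=time.sleep):
    """Retry `request` on RateLimitError using exponential backoff with full jitter."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error to the caller
            # The delay cap doubles each attempt; the actual wait is random below the cap.
            cap = min(max_delay, base_delay * 2 ** attempt)
            sleep(random.uniform(0, cap))
```

If the error persists after the final attempt, the remaining causes (billing not enabled, daily quota, or tier synchronization) are worth checking before retrying further.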

Unpredictable Model Regression

Early adopters of Nano Banana 2 noted that the model, while highly capable of creative style transfers, occasionally struggled with rigid, exact tasks like flat 2D photo restoration.

When questioned about the digital artifacts it produced during simple image restoration tasks, the Gemini model itself admitted that generative AI development is often a game of “statistical trade-offs”: a frustrating “two steps forward, one step back” reality for end-users who rely on absolute consistency.

Furthermore, strict and often unpredictable over-censorship triggered by the IMAGE_SAFETY block-reasons frequently halts legitimate creative workflows, requiring users to constantly rewrite prompts to bypass hyper-sensitive safety filters.

Global Implications

The hyper-realistic capabilities of models like Gemini 3.1 Flash Image have profound geopolitical and regulatory implications. The ease with which synthetic media can be generated poses severe risks to electoral integrity, financial markets, and personal privacy.

For students of public policy and UPSC aspirants, the governance mechanisms established globally in 2026 are critical areas of study.

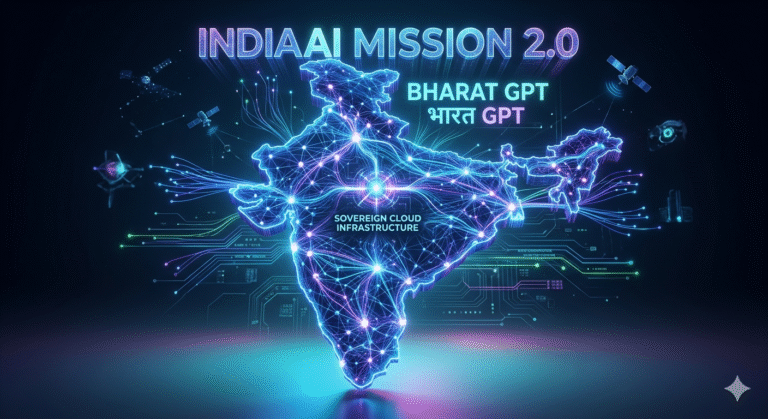

The India AI Impact Summit 2026 and the M.A.N.A.V. Vision

In February 2026, the India AI Impact Summit held at Bharat Mandapam fundamentally redefined global technology governance.

Shifting the prevailing narrative from a Western-centric focus on “existential risk” to a Global South focus on “AI for inclusive development,” the summit laid out the core Three Sutras: People (human-centricity), Planet (sustainability), and Progress (equitable economic growth).

Central to this geopolitical stance is the M.A.N.A.V. vision, articulated by the Prime Minister. This comprehensive roadmap stands for:

- M – Moral and Ethical Systems: Emphasizing human oversight and integrating computational thinking directly into the National Education Policy 2020.

- A – Accountable Governance: Powered by the ₹10,300 crore IndiaAI Mission, which actively expands compute infrastructure from 38,000 to 58,000 GPUs within weeks, providing subsidized access to local creators.

- N – National Sovereignty: Developing indigenous models and resilient digital public infrastructure to ensure strategic autonomy. For deeper insights into silicon sovereignty, explore https://blog.aquartia.in.

- A – Accessible and Inclusive AI: Democratizing access to computing power via the MeghRaj GI Cloud and AI Data Labs.

- V – Valid and Legitimate Systems: Ensuring that AI systems remain lawful and verifiable through strict regulatory frameworks and algorithmic auditing.

The summit’s massive scale—convening over 900,000 virtual viewers, delegations from over 100 countries, and setting a Guinness World Record with 250,946 validated pledges to an AI responsibility campaign—cemented India as a global “bridge power” in technology governance.

The IT Rules Amendment of 2026

Falling under the “Valid and Legitimate Systems” pillar of M.A.N.A.V., the Ministry of Electronics and Information Technology (MeitY, https://www.meity.gov.in/) formally notified the Information Technology (Intermediary Guidelines and Digital Media Ethics Code) Amendment Rules, 2026, on February 10, 2026.

This aggressive legislative update is specifically designed to combat the rising threat of deepfakes and mandates strict compliance protocols for all AI generators:

- Swift Takedown Windows: Digital platforms must remove content deemed illegal within an unprecedented 3 hours. For severe violations, such as non-consensual deepfake nudity, the window is tightened to just 2 hours to mitigate viral trauma.

- Mandatory Labeling and Metadata: Significant Social Media Intermediaries (SSMIs) must ensure that Synthetically Generated Information (SGI) carries visible visual watermarks or audio disclaimers. Crucially, digital fingerprints and provenance markers must be embedded into the file’s metadata to trace the origin of a deepfake back to specific models like Gemini.

- User Declarations and Technical Verification: Users uploading content must legally declare if it was AI-generated. Platforms can no longer rely solely on user honesty; they are legally bound to deploy automated technical tools to verify these declarations prior to public display.

- Grievance Redressal Acceleration: Platforms must now acknowledge user complaints regarding synthetic content within 7 days, down from the previous 15-day window, forcing companies to dramatically scale their moderation teams.

These regulations shift the burden of transparency directly into the backend architecture of tools like Google Gemini. They necessitate the integration of invisible watermarking technologies, such as Google’s SynthID, for those tools to remain legally compliant under the new statutes.
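For a moderation team, the windows described in this section reduce to simple date arithmetic. The sketch below uses only the figures quoted in this article (3 hours, 2 hours for severe cases, 7 days to acknowledge a grievance); the function names are hypothetical, and the notified rules themselves should be consulted for the precise trigger events.

```python
from datetime import datetime, timedelta, timezone

# Windows as described in the article; treat these as assumptions, not legal advice.
TAKEDOWN_WINDOW = timedelta(hours=3)
SEVERE_TAKEDOWN_WINDOW = timedelta(hours=2)
GRIEVANCE_ACK_WINDOW = timedelta(days=7)


def takedown_deadline(flagged_at: datetime, severe: bool = False) -> datetime:
    """Latest removal time for content flagged at `flagged_at`."""
    window = SEVERE_TAKEDOWN_WINDOW if severe else TAKEDOWN_WINDOW
    return flagged_at + window


def grievance_ack_deadline(received_at: datetime) -> datetime:
    """Latest time by which a user complaint should be acknowledged."""
    return received_at + GRIEVANCE_ACK_WINDOW
```

Passing timezone-aware datetimes avoids ambiguity when a report arrives close to midnight or across regions.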

Future Trends

Looking beyond the immediate horizon of 2026, image generation is rapidly evolving from static text-to-pixel processing toward entirely autonomous agentic workflows.

Google’s Project Mariner exemplifies this trajectory. As a multimodal reasoning agent, Mariner can visually observe a user’s desktop, interpret goals via natural language, and take multi-step actions across various applications.

In the context of visual design, future iterations of Mariner integrated directly with the Gemini API could autonomously scour competitor websites, identify emerging visual trends, and instruct Nano Banana 2 to generate thousands of optimized, culturally relevant marketing assets overnight—all without human intervention.

Additionally, the integration of static image models with state-of-the-art cinematic video generation will deepen. Tools like Veo 3.1 and Veo 3.1 Lite will allow users to transition seamlessly from generating a static character turnaround sheet in Gemini to producing fully synchronized, natively audio-backed cinematic films featuring that exact character in a matter of minutes.

Conclusion

Mastering Google Gemini AI photo generation in 2026 requires significantly more than a passing familiarity with basic prompt engineering. It demands a holistic understanding of how deep reasoning budgets operate, how strict reference image quotas are mathematically structured, and how national regulations govern the final digital output.

As powerful models like Nano Banana Pro and autonomous AI agents like Project Mariner continue to rapidly dissolve the traditional barriers between ideation and final execution, the ultimate competitive advantage will belong to those who can strategically deploy these tools.

Success relies on navigating the complex ethical, technical, and legal guardrails of the modern digital economy. Readers are highly encouraged to test the advanced prompting formulas provided in this guide and actively engage with the ongoing, rapid evolution of visual AI technology.

What has been your experience using the new Nano Banana 2 models? Drop a comment below or share your best generated images with us!

FAQ Section

Q: Is Google Gemini AI photo generation free to use in 2026? A: Yes, Gemini offers a highly generous free tier powered by the Gemini 3 Flash model, allowing everyday users access to chat and robust image generation via the web interface. However, developers and enterprise users utilizing the API must note that the free tier for Gemini 3.1 Flash Image currently operates at a strict 0 IPM (Images Per Minute) limit. You must enable billing to reach Tier 1 and generate images programmatically.

Q: What exactly is Nano Banana 2 and how does it differ from Imagen 4? A: Nano Banana 2 is the popular public codename for Gemini 3.1 Flash Image, a highly advanced model released in February 2026. Unlike the deprecated Imagen 4 models (which are scheduled to sunset completely on June 30, 2026), Nano Banana 2 utilizes a massive 131,072-token context window. It also supports deep multimodal reasoning via “Thinking Mode” and allows for strict character consistency using up to 14 visual reference images.

Q: How do I fix the Gemini Thinking Mode taking forever to generate an image? A: If the generation process hangs endlessly or for over 30 minutes, it is likely due to a high-volume data source combined with the deep Thinking Mode. Users should immediately reduce the workload by isolating relevant text, separating the document summarization and image generation tasks into two distinct prompts, or manually adjusting the thinking budget limit slider directly within Google AI Studio.

Q: How does the 14-reference image limit actually work for character consistency? A: Gemini allows up to 14 visual references, but they are strictly, systemically categorized. A user can upload a maximum of 10 “Object Fidelity” images (specifically for products or props) and 4 “Character Consistency” images (specifically for maintaining faces and identities). Exceeding the hardcoded quota for either specific category will result in an immediate prompt failure.

Q: What are the new deepfake disclosure requirements under India’s IT Rules 2026? A: Enacted in February 2026, the amended IT Rules require platforms to ensure that all Synthetically Generated Information (SGI) contains highly visible watermarks or clear audio disclaimers, alongside embedded metadata provenance. Furthermore, users must legally declare AI-generated content upon upload, and platforms face incredibly strict 2-to-3-hour takedown windows for illegal synthetic media.

Q: Can I completely turn off Thinking Mode in Gemini Pro? A: No, Thinking Mode cannot be completely disabled for advanced models like Gemini 2.5 Pro and Gemini 3 Pro. The reasoning architecture is natively integrated into exactly how the model processes complex data. However, users can adjust the “Thinking budget” via the drop-down selector in the Vertex AI console to minimize excessive processing time for simpler tasks.

Q: Why am I getting a persistent 429 RESOURCE_EXHAUSTED error in Google AI Studio? A: In 2026, the frustrating 429 error can stem from four distinctly different backend issues: a complete lack of enabled billing (triggering the 0 IPM limit on the free tier), exceeding the RPM (Requests Per Minute) burst limit, hitting the daily RPD (Requests Per Day) quota, or a known “ghost bug” that temporarily affects account synchronization shortly after upgrading billing tiers.

Q: How does Gemini compare to Midjourney V7 for professional use cases? A: Midjourney V7 remains the industry gold standard for purely artistic, abstract, and cinematic stylization. However, Gemini 3.1 Flash Image excels significantly in hyper-photorealism, precise text rendering, and overall workflow speed. Furthermore, Gemini offers robust native API access and integrates directly with Google Workspace, making it vastly superior for automated enterprise marketing tasks.

Key Takeaways

| Category | Strategic Takeaway |
| --- | --- |
| Model Transition | Migrate all legacy workflows from the Imagen 4 ecosystem to gemini-3.1-flash-image endpoints strictly before the June 30, 2026, deprecation deadline to avoid total service disruption. |
| Prompting Mastery | Utilize strict camera terminology (e.g., 85mm lens, shallow depth of field) and leverage the exact 10+4 reference image structure to guarantee perfect character and product consistency across multiple generations. |
| Troubleshooting Error 429 | Address persistent 429 errors by verifying active billing status and systematically isolating text-heavy reference documents from direct image generation commands to prevent Thinking Mode session timeouts. |
| Legal & Policy Compliance | Ensure all synthetic outputs retain their native SynthID metadata and carry appropriate visual SGI labels to comply seamlessly with the rapid 3-hour takedown mandates of the India IT Rules Amendment 2026. |
