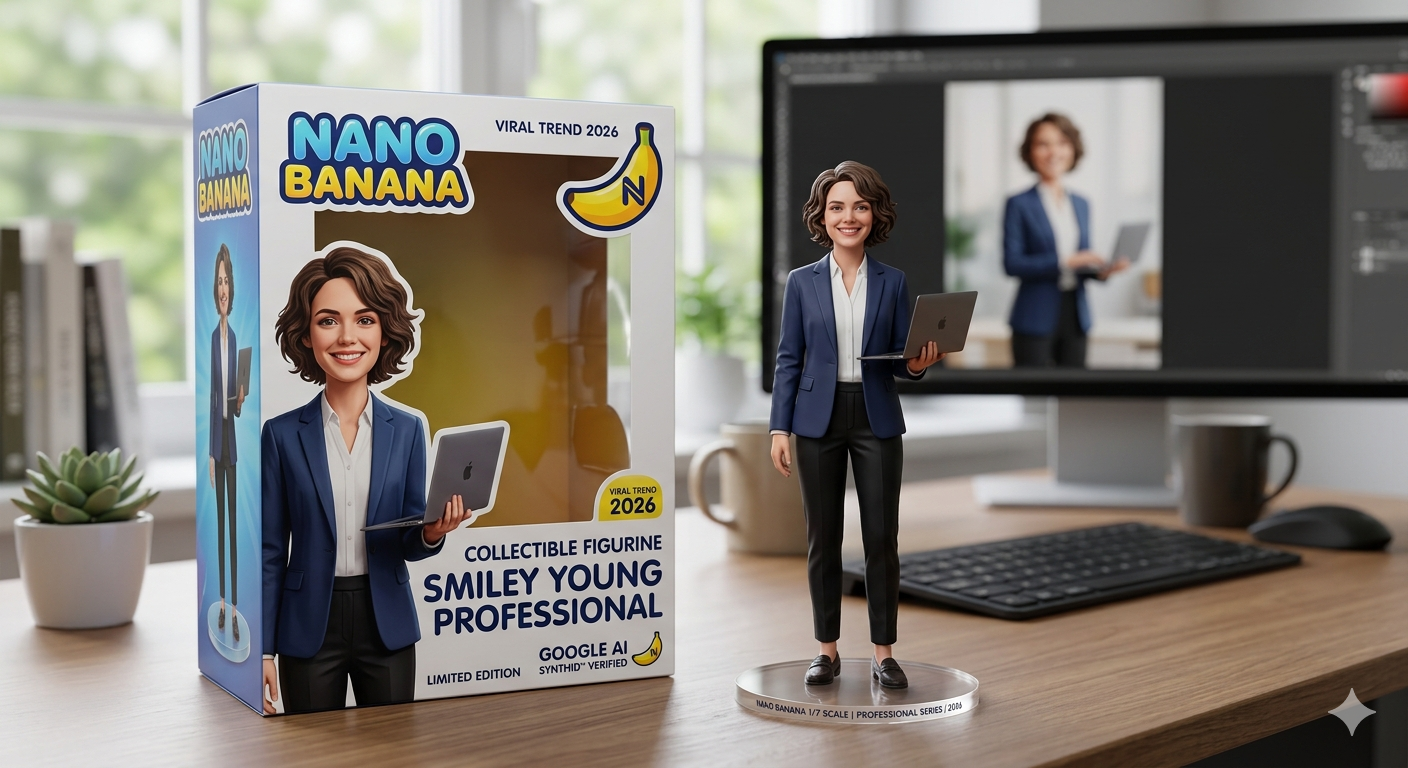

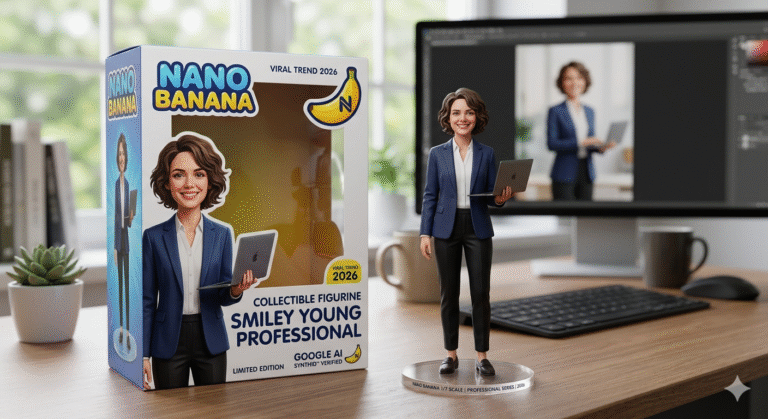

A seemingly lighthearted internet craze has fundamentally rewritten the rules of generative artificial intelligence in 2026. Across social media platforms, millions of digital avatars, resembling highly detailed Pixar characters and Funko Pop figurines, have flooded timelines.

Behind these hyper-realistic digital toys is a sophisticated machine-learning engine codenamed “Nano Banana.” The name began as a quirky placeholder from a late-night development scramble at Google DeepMind; the model behind it has emerged as the defining visual AI model of the year.

Nano Banana, officially known as Gemini 3.1 Flash Image, is more than an image generator. It is a natively multimodal model that reasons over text and images together. It lets users turn ordinary photographs into stunning 3D collectibles, execute pixel-perfect surgical edits, and render complex typography with unprecedented accuracy.

For casual users, it is a viral meme engine that makes content creation effortless. For enterprise designers, it is an indispensable production tool that cuts commercial photography budgets. For policymakers worldwide, it is a technological leap that has permanently altered the digital landscape and demands regulatory frameworks now.

Why This Topic Matters Today

The transition of artificial intelligence from experimental novelty to embedded daily utility has reached a critical inflection point. The Nano Banana phenomenon demonstrates that algorithmic consistency (an AI's ability to preserve a character's exact facial features, clothing, and proportions across repeated generations) has, for practical purposes, been solved.

This milestone removes long-standing limitations of AI imagery: hallucinated details, assets that do not match one another, and distorted anatomy. Brands can now rely on AI for consistent digital campaigns without manual touch-ups.

Furthermore, the scale of this adoption has made policy questions urgent worldwide. As AI models become capable of mimicking established artistic styles and generating photorealistic media in seconds, questions of data sovereignty, copyright erosion, and synthetic media regulation now dominate geopolitical discussions.

Understanding Nano Banana is essential not only for mastering modern digital content creation but also for grasping the economic and legal paradigms defining the late 2020s. Civil service candidates and policy analysts must track these trends closely to understand the future of digital governance.

Key Highlights

| Highlight Area | Core Insight |
|---|---|
| Viral Catalyst | The 3D Figurine phenomenon enabled the transformation of standard portraits into premium, toy-like collectibles, driving massive consumer adoption. |
| Architectural Leap | Powered by Gemini 3.1 Flash Image, the model utilizes a “Thinking Phase” to reason through prompts and real-world physics before pixel diffusion begins. |
| Unmatched Consistency | Solves the historic challenge of identity coherence, allowing characters to be reused seamlessly across varied environments without feature distortion. |
| Geopolitical Impact | The rapid deployment of such models catalyzed the New Delhi Declaration on AI Impact 2026, establishing global guardrails and democratic tech access. |
| Market Disruption | With generation speeds under five seconds and low token costs, it directly challenges premium subscriptions like Midjourney v8. |

Background and Context

The trajectory of the Nano Banana model illustrates the rapid, almost aggressive evolution of multimodal artificial intelligence over a very short period. In mid-2025, Google introduced its Gemini 2.5 Flash Image model, which quickly gained traction for its speed and integration into the broader Google ecosystem.

However, it was the subsequent leaps, first Nano Banana Pro and ultimately Nano Banana 2 (Gemini 3.1 Flash Image), that triggered global viral adoption. The technology matured from a novelty into a commercial juggernaut almost overnight.

The peculiar name “Nano Banana” originated internally within Google DeepMind. During a late-night engineering sprint, a pair of personal developer nicknames merged into a placeholder title. This internal joke unexpectedly resonated with early beta testers, prompting the company to embrace the unconventional branding for its consumer-facing campaigns to make the highly complex technology feel approachable.

By early 2026, the technology had matured beyond simple text-to-image capabilities. It became a multimodal powerhouse capable of processing interleaved text, video, and image references simultaneously.

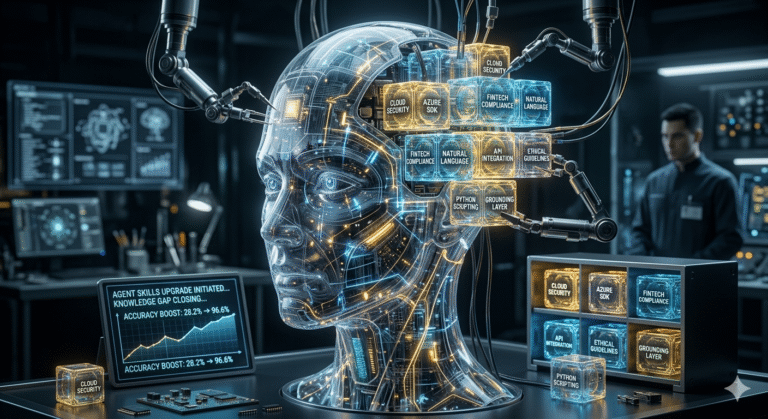

This evolution reflects a broader industry shift, one that moves from generative to agentic AI, where systems no longer just generate media but understand context, adhere to strict visual constraints, and perform complex analytical reasoning before executing creative tasks.

Core Explanation

What Exactly is Nano Banana?

At its core, Nano Banana is the colloquial and commercial moniker for Google’s Gemini 3.1 Flash Image and Gemini 3 Pro Image models. It operates as a highly advanced generative AI system accessible via Google AI Studio, Vertex AI, and the consumer-facing Gemini application.

The system acts as a digital translator: it combines natural language instructions with visual reference material to produce high-fidelity original images or heavily modified photos. Unlike earlier AI art generators, it does not simply guess what an image should look like; it plans the composition before rendering it.

How the Model Works

Older diffusion models relied on statistical guesswork to place pixels based on text associations, which often resulted in floating objects, missing limbs, or garbled text. The Nano Banana architecture introduces a dedicated “Thinking Phase” to solve this.

When a prompt is submitted, the model functions similarly to a reasoning Large Language Model (LLM). It calculates spatial relationships, verifies real-world physics (such as light interaction and gravity), checks text spelling constraints, and plans the composition before the image generation actually begins.

This deep semantic understanding prevents common AI failures, ensuring that a requested coffee cup sits firmly on a table rather than floating slightly above it.
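The coffee-cup example can be made concrete with a toy "plan, verify, then render" step. This is only an illustration of checking a physical constraint before drawing; it is not how Gemini works internally, and the `Box`, `rests_on`, and `snap_to_surface` names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """A 2D bounding box in a toy scene plan; y grows upward and y is the bottom edge."""
    name: str
    x: float
    y: float
    width: float
    height: float

    @property
    def top(self) -> float:
        return self.y + self.height


def rests_on(obj: Box, surface: Box, tol: float = 1e-6) -> bool:
    """True if obj's bottom edge touches surface's top edge and they overlap horizontally."""
    touching = abs(obj.y - surface.top) <= tol
    overlaps = obj.x < surface.x + surface.width and surface.x < obj.x + obj.width
    return touching and overlaps


def snap_to_surface(obj: Box, surface: Box) -> Box:
    """Planning-stage correction: move obj vertically so it sits exactly on surface."""
    return Box(obj.name, obj.x, surface.top, obj.width, obj.height)


# Toy plan: the cup was placed 3 units above the table top (a "floating" object).
table = Box("table", x=0.0, y=0.0, width=100.0, height=75.0)
cup = Box("cup", x=40.0, y=78.0, width=8.0, height=10.0)

# Verify the constraint before "rendering" and fix the plan if it fails.
planned_cup = cup if rests_on(cup, table) else snap_to_surface(cup, table)
print(rests_on(cup, table), rests_on(planned_cup, table), planned_cup.y)  # False True 75.0
```

The point of the sketch is ordering: the constraint is checked and repaired on the plan, so no pixels are ever produced for the floating version.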

Key Components and Stakeholders

| Stakeholder Group | Primary Use Case and Impact |

| Casual Creators | Utilizing the model for viral social media content, particularly the 3D figurine and pet transformation trends, driving organic growth. |

| Enterprise Developers | Leveraging the Gemini API for high-volume, low-latency tasks, such as automated product photography and dynamic e-commerce wireframing. |

| Governance Bodies | Organizations monitoring the deployment of digital watermarking technologies like SynthID to trace synthetic media origins. |

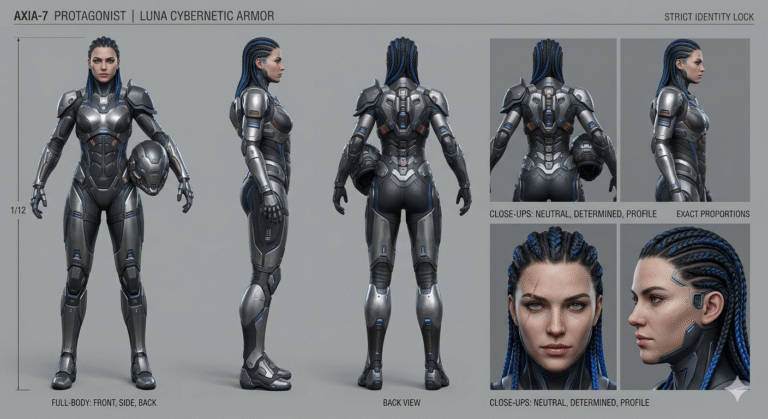

| Professional Artists | Adapting to the technology as a collaborative tool for concept art, storyboarding, and rapid prototyping, while navigating copyright concerns. |

Technical and Conceptual Breakdown

To fully grasp the superiority of the Nano Banana architecture, a technical examination of its capabilities is required. The model represents a paradigm shift in how machines process visual data.

The Gemini 3.1 Flash Image model accepts up to 131,072 input tokens. This large context window lets the system ingest substantial reference material in a single request, including design documents, brand guidelines, and up to 14 reference photos.
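A client application might check a request against these two limits before sending it. The sketch below is a hypothetical pre-flight check: the limits are the figures stated in this article (not values read from any official API), and the per-image token counts must be supplied by the caller because they depend on the model and image resolution.

```python
# Limits as stated in this article; not read from any official API.
MAX_INPUT_TOKENS = 131_072
MAX_REFERENCE_IMAGES = 14


def check_request(text_tokens: int, image_tokens: list[int]) -> list[str]:
    """Return a list of limit violations (an empty list means the request fits).

    text_tokens: token count of the prompt and any attached documents.
    image_tokens: token count of each reference image, supplied by the caller.
    """
    problems = []
    if len(image_tokens) > MAX_REFERENCE_IMAGES:
        problems.append(
            f"{len(image_tokens)} reference images exceed the limit of {MAX_REFERENCE_IMAGES}"
        )
    total = text_tokens + sum(image_tokens)
    if total > MAX_INPUT_TOKENS:
        problems.append(f"{total} input tokens exceed the limit of {MAX_INPUT_TOKENS}")
    return problems


# 1,000 tokens per image is a placeholder for illustration, not an official figure.
print(check_request(100_000, [1_000] * 14))  # []
print(check_request(120_000, [1_000] * 15))  # two violations: image count and total tokens
```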

The architecture is natively multimodal. Image generation is not bolted onto a text engine as an afterthought; images, code, and text are processed by the same underlying network. This lets users upload a complex spreadsheet and request an infographic visualization in a single step.

Furthermore, Nano Banana allows for conversational editing through advanced semantic masking. Users can upload a photograph and provide a text command such as, “Change the background to a sunset beach but keep the ambient lighting on the subject realistic.” The AI automatically infers the boundaries of the subject without requiring manual lassoing tools.
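Conversational editing maps naturally onto a multi-turn request: the first turn carries the photo and the instruction, and each follow-up appends the model's previous reply plus a new instruction. The sketch below only builds the JSON body and makes no network call. It assumes the `contents` / `parts` / `inline_data` layout used by the public Gemini REST `generateContent` endpoint; the helper names are hypothetical, and field names and model IDs should be checked against Google's current API reference.

```python
import base64
import json


def build_edit_request(image_bytes: bytes, mime_type: str, instruction: str) -> dict:
    """First turn of an edit: one reference image followed by a text instruction."""
    return {
        "contents": [
            {
                "role": "user",
                "parts": [
                    {
                        "inline_data": {
                            "mime_type": mime_type,
                            # Binary image data travels as base64 text inside JSON.
                            "data": base64.b64encode(image_bytes).decode("ascii"),
                        }
                    },
                    {"text": instruction},
                ],
            }
        ]
    }


def add_follow_up(request: dict, model_parts: list, instruction: str) -> dict:
    """Return a new request with the model's previous reply and a new user instruction appended."""
    return {
        "contents": request["contents"]
        + [
            {"role": "model", "parts": model_parts},
            {"role": "user", "parts": [{"text": instruction}]},
        ]
    }


first = build_edit_request(
    b"<raw image bytes>",
    "image/jpeg",
    "Change the background to a sunset beach but keep the ambient lighting "
    "on the subject realistic.",
)
# In a real session, model_parts would be copied from the previous API response.
second = add_follow_up(first, [{"text": "(previous model output)"}], "Make the sky a deeper orange.")
body = json.dumps(second)  # JSON request body for the next turn
```

Note that the user never supplies a mask: the instruction alone names what to change and what to keep, and the subject boundaries are left for the model to infer.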

Think of it as a digital assembly line. Instead of a painter immediately throwing paint on a canvas, Nano Banana acts as director, architect, and set designer, building a 3D blueprint in its “mind” before a single pixel is rendered.

Real-World Examples and Case Studies

The 3D Figurine Phenomenon

The most visible application of this technology in 2026 has been the 3D figurine trend. Creators start with a standard portrait or selfie and apply specific, director-style prompts to generate digital collectibles.

A standard successful workflow involves prompts dictating the scale (e.g., “1/7 scale hyper-realistic collectible”), the environment (“modern computer desk with acrylic base”), and the packaging style (“retro 80s collector’s edition”).
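The three slots (scale, environment, packaging) can be kept separate and filled in per request. The template wording below is a hypothetical example, not an official Google prompt; the closing sentence restates what must not change, which supports the identity coherence described next.

```python
def figurine_prompt(scale: str, environment: str, packaging: str,
                    subject: str = "the person in the reference photo") -> str:
    """Assemble a director-style figurine prompt from its three main slots."""
    return (
        f"Turn {subject} into a {scale}, "
        f"displayed on a {environment}, "
        f"with {packaging} packaging visible beside it. "
        # Explicitly restate what must stay unchanged from the reference photo.
        "Keep the face, hairstyle, and clothing identical to the reference photo."
    )


prompt = figurine_prompt(
    scale="1/7 scale hyper-realistic collectible",
    environment="modern computer desk with acrylic base",
    packaging="retro 80s collector's edition",
)
print(prompt)
```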

Because the model maintains perfect identity coherence, the resulting digital toy retains the exact facial proportions, hairstyle, and even specific clothing items of the original human subject. This has spawned entire micro-economies of users offering bespoke digital toy creation on platforms like TikTok and Instagram.

Commercial Product Photography

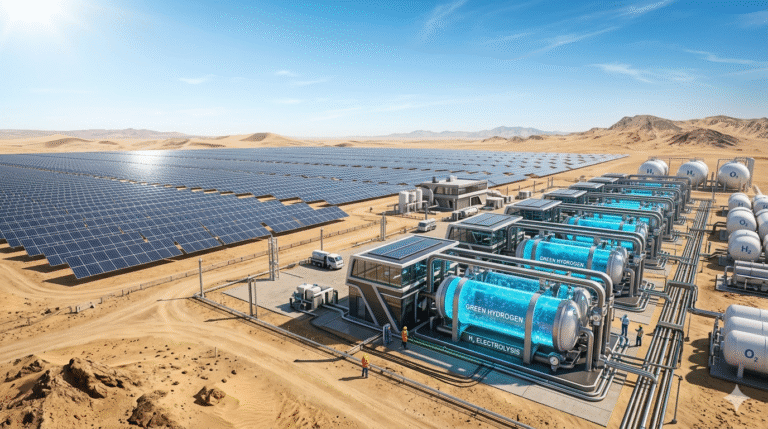

Marketing agencies have drastically reduced overhead by adopting Nano Banana for product visualization. Instead of organizing extensive physical photoshoots, brands upload a basic image of a product—such as a wristwatch—and instruct the AI to build “object mythology” around it.

A prompt placing the watch on a “worn wooden table inside a dimly lit room with cinematic mystery lighting” instantly generates high-end commercial assets that rival traditional studio photography. This capability has effectively democratized high-tier commercial branding for small businesses.

Benefits and Advantages

The adoption of the Nano Banana model offers distinct operational and creative advantages over legacy systems.

Speed and cost-efficiency are unparalleled. Nano Banana 2 generates hyper-realistic images in approximately three to five seconds. Furthermore, the Flash-Lite variant operates at a highly economical $0.25 per one million input tokens, making enterprise-scale generation financially viable for the first time.
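The pricing claim reduces to simple arithmetic. The sketch below uses the $0.25 per one million input tokens quoted above and a hypothetical workload; it covers input tokens only, since output (image) tokens are billed separately and their price is not given here. `Decimal` avoids floating-point rounding in money calculations.

```python
from decimal import Decimal

# Price quoted in this article for the Flash-Lite variant (USD per 1M input tokens).
PRICE_PER_MILLION_INPUT_TOKENS = Decimal("0.25")


def input_cost(input_tokens: int,
               price_per_million: Decimal = PRICE_PER_MILLION_INPUT_TOKENS) -> Decimal:
    """Input-token cost in USD; output tokens are billed separately and not included."""
    return price_per_million * input_tokens / 1_000_000


# Hypothetical workload: 10,000 requests of about 2,000 input tokens each.
total_tokens = 10_000 * 2_000             # 20,000,000 tokens
print(f"${input_cost(total_tokens):.2f}")  # $5.00
```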

Identity coherence is another massive leap. The absolute preservation of character features across different prompts eliminates the need for complex, manual post-production touch-ups. A brand mascot can be placed in a beach scene, a snowy mountain, and a futuristic city without losing its core identity.
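In practice, this workflow means holding the identity description fixed and varying only the scene. The sketch below generates one prompt per scene; the identity clause wording is a hypothetical example, and each prompt would be sent together with the same reference image of the mascot.

```python
# Hypothetical identity clause, repeated verbatim in every request.
IDENTITY_LOCK = (
    "Use the mascot from the reference image exactly as shown: "
    "same face, proportions, colors, and outfit."
)


def scene_prompts(scenes: list[str]) -> list[str]:
    """One prompt per scene; only the environment varies, the identity clause is fixed."""
    return [f"{IDENTITY_LOCK} Place it {scene}." for scene in scenes]


for p in scene_prompts(["on a sunny beach", "on a snowy mountain", "in a futuristic city"]):
    print(p)
```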

Granular camera control is built into the system’s DNA. The AI understands deep photographic terminology. Prompts dictating specific hardware (e.g., “Fujifilm color science,” “macro lens”) or lighting setups (“Chiaroscuro,” “three-point softbox”) yield technically accurate results, giving professional photographers precise control over the output.

Challenges, Risks, and Criticism

Despite technological triumphs, the widespread deployment of advanced generative models carries significant, often existential, risks for specific industries.

The “Ghibli Paradox” and Copyright Erosion

Legal scholars and policy analysts frequently cite the “Ghibli Paradox” when discussing models of this caliber. In 2025, users flooded platforms with AI artwork mimicking the exact aesthetic of Studio Ghibli.

While these images were not direct copies of existing copyrighted frames, they replicated the studio's distinctive style almost perfectly, severely diluting its market value. This reveals a major flaw in traditional intellectual property protection: copyright covers specific works, not artistic styles.

If an AI can ingest a lifetime of human artistic development in days and replicate it flawlessly, the statutory “protection period” designed to reward human creators is economically circumvented. The concept of originality is fundamentally challenged.

Ethical and Synthetic Media Risks

The flawless realism of Nano Banana raises substantial ethical concerns about deepfakes, misinformation, and political manipulation. Google mitigates this by embedding SynthID, an imperceptible digital watermark, in generated images, but malicious actors continually try to evade watermark and metadata-based tracking.

The democratization of such powerful tools also accelerates workforce displacement, particularly for freelance illustrators and commercial photographers whose primary services can now be executed via a single API call. The ease of use lowers the barrier to entry, but it simultaneously devalues human craftsmanship.

Strategic, Policy, and Global Implications

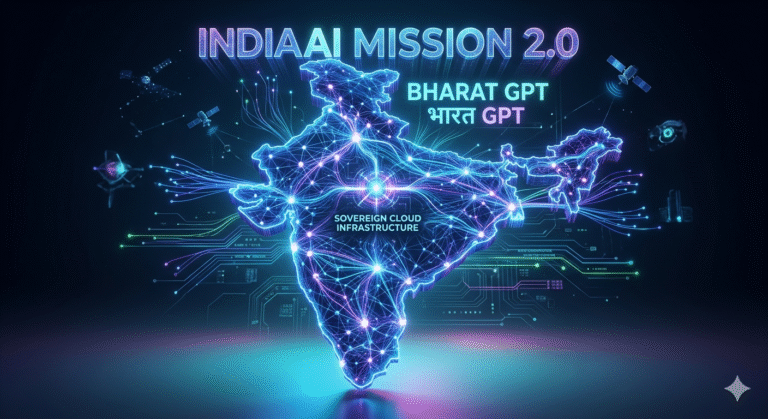

The socio-economic ramifications of AI capabilities demonstrated by Nano Banana prompted a massive regulatory response in 2026, culminating in the India AI Impact Summit. This section is critical for understanding the geopolitical shift in technology governance.

The New Delhi Declaration on AI Impact 2026

Signed by 88 countries, the New Delhi Declaration represents a monumental shift from focusing purely on AI existential safety toward utilizing AI for developmental equity. Grounded in the Indian philosophy of Sarvajan Hitaya, Sarvajan Sukhaya (Welfare for all, Happiness for all), the framework introduced a completely new paradigm for global tech regulation.

The summit established the Three Sutras of AI governance: People, Planet, and Progress. These pillars dictate that AI must empower citizens, drive sustainable climate resilience, and catalyze economic growth in developing nations.

To operationalize this, the declaration outlined the Seven Chakras of AI implementation. These working groups focus on specific domains such as democratizing AI resources, developing safe and trusted systems, and advancing human capital through rapid reskilling programs.

The IndiaAI Mission and Sovereign AI

In response to Western monopolies over Large Language Models (LLMs) and compute hardware, the IndiaAI Mission successfully shifted the global paradigm toward “Sovereign AI”. By developing indigenous compute infrastructure, India aims to ensure its data remains within its own borders, governed by its own laws.

Furthermore, India formally joined the U.S.-led Pax Silica initiative to co-secure the entire AI technology stack, ranging from critical minerals to advanced semiconductor fabrication. This move marks a strategic departure from traditional non-alignment to a “Trusted Trade” paradigm.

This “Third Way” of AI governance balances rapid innovation with strategic autonomy and public safeguards, offering a distinct alternative to the heavily deregulated models of the West or the state-controlled models of authoritarian regimes. For nations in the Global South, the establishment of the Global AI Impact Commons—a repository for sharing successful AI solutions—ensures that tools like Nano Banana benefit agriculture, healthcare, and education as much as they benefit digital marketing.

Did You Know? The 2026 India AI Summit secured over $250 billion in global investment commitments, firmly establishing the subcontinent as the leading hub for AI infrastructure outside of Silicon Valley.

Future Trends and Outlook

As 2026 progresses, the isolated use of image generators will decline as AI models are integrated into broader, autonomous systems. As AI tools go mainstream in education and creativity, future systems will act as collaborative digital coworkers rather than simple tools.

Rather than a human writing a prompt to create an image, an AI agent will identify a marketing gap, write the promotional copy, interface with Nano Banana to generate the supporting graphics, and publish the campaign autonomously.
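The four-step loop described above can be sketched as a small pipeline in which each stage is a pluggable function. Everything here is hypothetical: the stage functions are trivial stand-ins for a market-analysis step, a copywriting model, a call to an image model such as Nano Banana, and a publishing API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Campaign:
    gap: str
    copy: str = ""
    image_prompts: list[str] = field(default_factory=list)
    published: bool = False


def run_campaign(
    find_gap: Callable[[], str],
    write_copy: Callable[[str], str],
    image_prompt_for: Callable[[str], str],
    publish: Callable[[Campaign], bool],
) -> Campaign:
    """Chain the four stages in order; each stage is an injected callable."""
    campaign = Campaign(gap=find_gap())
    campaign.copy = write_copy(campaign.gap)
    # In a real system this prompt would be sent to an image model.
    campaign.image_prompts.append(image_prompt_for(campaign.copy))
    campaign.published = publish(campaign)
    return campaign


result = run_campaign(
    find_gap=lambda: "no winter campaign for the wristwatch line",
    write_copy=lambda gap: f"Timeless through every season ({gap}).",
    image_prompt_for=lambda copy: f"Product shot of the wristwatch in fresh snow, matching: {copy}",
    publish=lambda c: bool(c.copy and c.image_prompts),
)
print(result.published)
```

Injecting the stages as callables keeps the orchestration logic separate from any particular model or API, so each stage can be swapped or tested on its own.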

This shift to semantic, agentic logic means search algorithms will also evolve. SEO strategies are moving away from outdated Latent Semantic Indexing (LSI) toward deep semantic entity mapping. Content creators will need to ensure their images contain visually clear context that AI visibility platforms can interpret and index accurately.

Comparison Table: Leading AI Image Generators (2026)

| Feature / Metric | Nano Banana 2 (Gemini 3.1 Flash Image) | Nano Banana Pro | Midjourney v8 Alpha | DALL-E 3 |
|---|---|---|---|---|
| Primary Strength | Lightning speed, cost-efficiency, simple edits | Unmatched precision, text rendering, logic | Pure artistic flair, cinematic depth, surrealism | Seamless text overlays, basic ad creatives |
| Generation Speed | 3–5 seconds | 2–5 minutes | Moderate (~60 seconds) | Moderate (~30 seconds) |
| Text Rendering | Good | Flawless (long sentences, accurate layout) | Weak (short words only) | Strong (short-to-medium phrases) |
| Character Consistency | High | Exceptional (locks identity permanently) | Variable (struggles across multi-edits) | Moderate |
| Pricing / Cost | Free tiers available, API at $0.25/1M tokens | Requires Gemini Advanced subscription | $10–$120/month subscription | Included with ChatGPT Plus |
| Best Use Case | Social media, rapid prototyping, viral trends | Commercial photography, infographics, UI/UX | Concept art, fantasy illustrations, storytelling | Paid marketing creatives, lifestyle ads |

FAQ Section

1. What is the Nano Banana 3D figurine trend? The Nano Banana 3D figurine trend is a viral social media craze where users utilize Google’s Gemini 3.1 Flash Image AI to transform their personal selfies into hyper-realistic, toy-like 3D collectibles. The AI maintains exact facial features and clothing, rendering the subject as a premium action figure or Funko Pop-style character housed in realistic digital packaging or dioramas.

2. Is Nano Banana the official name of the AI? No. “Nano Banana” originated as an internal developer nickname and placeholder that gained viral popularity online. Officially, the underlying models are Gemini 3.1 Flash Image (Nano Banana 2) and Gemini 3 Pro Image (Nano Banana Pro). Google leaned into the viral name when marketing its consumer-facing tools to make them more approachable.

3. How much does Nano Banana cost to use? For casual users, basic access to Nano Banana features is available for free through the standard Gemini application, subject to daily credit limits. For developers and enterprise environments utilizing APIs, the Gemini 3.1 Flash-Lite model is highly cost-efficient, priced at approximately $0.25 per one million input tokens. Advanced professional tiers require a Gemini Advanced subscription.

4. What makes Nano Banana different from older AI image generators? Older diffusion models generated images through statistical guesswork, often failing at complex logic, spatial awareness, or text spelling. Nano Banana incorporates a “Thinking Phase” similar to reasoning language models. It calculates physics, plans composition, and verifies constraints before generating pixels, resulting in superior character consistency, legible text, and highly accurate surgical edits.

5. Can Nano Banana generate text inside images? Yes. Unlike many earlier models, Nano Banana excels at typographical rendering. It accurately produces complex sentences, supports multiple font styles, and can render text correctly in over ten different languages. This capability makes it a preferred tool for generating infographics, UI wireframes, educational diagrams, and commercial posters.

6. What is the “Ghibli Paradox” associated with generative AI? The Ghibli Paradox refers to the economic and legal threat generative AI poses to human artists. It describes how AI models can ingest copyrighted material and perfectly learn an artist’s or studio’s proprietary aesthetic in days. While the generated outputs are not direct copies, they flood the market with indistinguishable mimics, effectively rendering copyright protection periods economically meaningless.

7. How does the New Delhi Declaration on AI impact this technology? Signed in 2026 by 88 countries, the New Delhi Declaration provides a voluntary global framework for responsible AI governance. Guided by the Indian principle of “Sarvajan Hitaya” (welfare for all), it promotes democratic access to AI resources for the Global South. It encourages the establishment of “Trusted AI Commons” to ensure the safe, secure, and ethical deployment of models like Nano Banana.

8. What is Sovereign AI and how does it relate to Nano Banana? Sovereign AI is the strategic push by nations, notably India via the IndiaAI Mission, to build indigenous computing infrastructure and AI models. Rather than relying entirely on Western tech giants (like Google’s Nano Banana), nations aim to process data locally to protect national sovereignty, reflect local linguistic diversity, and secure supply chains through partnerships like the Pax Silica initiative.

9. What is the difference between Nano Banana 2 and Nano Banana Pro? Nano Banana 2 is built for extreme speed and cost-efficiency, generating images in seconds, which makes it ideal for rapid ideation and social media trends. Nano Banana Pro is slower but offers deep reasoning capabilities, exceptional text rendering, and precise adherence to the complex, multi-tiered prompts required for high-end professional and commercial use.

10. How do I write an effective prompt for Nano Banana? Experts recommend a structured, “director-style” approach. Start with a strong action verb, explicitly describe the subject, and dictate the composition and environment. Crucially, define the technical elements using photographic terms like “macro lens,” “golden hour lighting,” or “Fujifilm color science” to guide the AI toward high-quality, non-generic outputs instead of basic descriptions.

Key Takeaways

| Key Insight Area | Summary of Takeaway |
|---|---|
| Technological Shift | Generative AI has successfully evolved from random pixel diffusion into deep multimodal reasoning, enabling models to “think” before they draw. |
| Consistency Mastered | The ability of Nano Banana to lock character identities across various prompts fundamentally redefines digital content creation workflows for brands and creators. |
| Prompt Engineering | Success no longer relies on keyword stuffing; it relies on technical, director-style vocabulary and conversational iteration. |
| Global Governance | The rapid scale of tools like Nano Banana has necessitated robust frameworks like the IndiaAI Mission and New Delhi Declaration to secure digital sovereignty and ethical use. |

Conclusion

The discourse surrounding Nano Banana in 2026 extends far beyond the production of viral digital figurines and social media memes. It represents a watershed moment in artificial intelligence, where deep reasoning, multimodal processing, and algorithmic consistency converge to create a utility of unparalleled power.

While it radically democratizes high-end visual creation and streamlines enterprise workflows, it simultaneously demands rigorous global governance to navigate the complex challenges of intellectual property erosion, workforce displacement, and synthetic media authenticity.

For technologists, policymakers, and digital creators, mastering the mechanics and implications of Gemini 3.1 Flash Image is not merely advantageous; it is essential for navigating the future digital economy. As AI evolves from generative novelty to collaborative agentic worker, adapting to this technology is the only path forward.
