KEY TAKEAWAYS

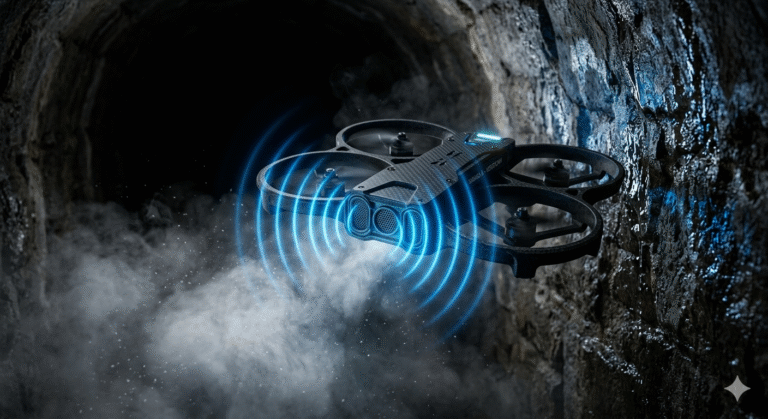

- Non-Visual Navigation: Camera-less drones successfully use bat-inspired ultrasonic echolocation to fly autonomously through total darkness, thick smoke, and dense fog, conditions that blind traditional cameras and, in smoke and fog, severely degrade LiDAR.

- Extreme Efficiency: The Saranga drone’s acoustic sensing payload operates on a mere 1.2 milliwatts, representing a 1000× power reduction and 100× cost reduction compared to standard optical arrays and leaving more of the battery for flight.

- AI Noise Filtration: By utilizing physical acoustic shields and advanced CNN-LSTM deep learning networks, these drones can actively filter out the deafening noise of their own spinning propellers to hear faint obstacle echoes.

- Privacy by Design: Operating without cameras greatly simplifies compliance with strict data privacy laws (like GDPR), because the drone has no way to record faces, license plates, or private property.

- Disaster Ready: Camera-less micro-drones are the ultimate tool for hazardous, GPS-denied emergency environments, such as collapsed urban infrastructure, foggy mountain borders, and underground coal mine search and rescues.

Imagine a rescue drone flying through a collapsed, pitch-black mining tunnel filled with thick, choking dust. Traditional optical sensors would instantly fail, leaving the drone entirely blind and useless.

However, a groundbreaking technological shift is happening in aerial robotics. Engineers have successfully developed drones that navigate without a single camera or light-based sensor.

By mimicking the natural echolocation abilities of bats, these revolutionary aerial vehicles use high-frequency ultrasound and deep learning to “see” their surroundings. This is the dawn of camera-less drone navigation.

It is a paradigm shift that solves some of the most complex challenges in autonomous flight. From operating in severe weather conditions to preserving human privacy in urban environments, non-visual sensing is rewriting the rules of the sky.

Why This Topic Matters Today

The urgency for reliable, non-visual drone navigation has never been greater. Climate change and rapid urbanization are triggering a sharp rise in complex disasters, from massive structural collapses to widespread forest fires.

During these critical emergencies, first responders need immediate aerial intelligence. Unfortunately, traditional helicopter search and rescue missions can cost up to $100,000 per deployment and are easily grounded by poor visibility.

Furthermore, as commercial drones become a daily reality in our cities, public concern regarding mass surveillance is skyrocketing. People do not want flying cameras constantly recording their neighborhoods.

Camera-less drones provide an elegant solution to both crises. They can fly through visually degraded environments to save lives while strongly protecting privacy, because they lack the physical hardware to record human faces.

Key Highlights

- Acoustic Echolocation: Next-generation drones use high-frequency ultrasound to navigate through dense fog, thick smoke, and 100% darkness without optical cameras.

- Milliwatt Efficiency: Saranga’s ultrasonic perception stack consumes only 1.2 milliwatts of power, offering massive energy savings over traditional LiDAR.

- Deep Learning Filtration: Custom neural networks actively filter out the extreme acoustic noise generated by the drone’s own spinning propellers.

- Privacy by Design: Because they operate without cameras, these drones never capture the imagery that most often triggers obligations under data protection laws like GDPR, preserving civilian privacy.

- Disaster Resilience: Ultrasound drones are uniquely equipped to handle subterranean emergencies, such as collapsed tunnels or underground coal mine rescues.

Background

To appreciate the genius of camera-less navigation, we must first understand how conventional autonomous drones fly. When GPS is unavailable or unreliable, most autonomous commercial and industrial drones rely on Simultaneous Localization and Mapping (SLAM).

Visual SLAM utilizes continuous high-definition camera feeds to identify environmental features like edges, corners, and textures. The drone tracks these points frame-by-frame to deduce its movement and build a 3D map.

However, visual SLAM possesses a fatal flaw. When a drone enters a visually degraded environment—such as a dark room, a smoke-filled corridor, or a featureless snowy landscape—the algorithm loses its visual reference points and fails.

To counter this, the industry turned to LiDAR (Light Detection and Ranging). LiDAR fires up to millions of laser pulses per second and times their reflections to measure distance. Yet LiDAR struggles in dense fog because water droplets scatter the light beams in all directions.

Furthermore, high-end LiDAR arrays are heavy, expensive, and consume tremendous amounts of battery power. This makes them highly impractical for tiny, palm-sized micro-drones designed to squeeze through tight spaces.

Nature, however, bypassed visual limitations millions of years ago. Microbats thrive in dark, damp, and dusty caves using echolocation. Translating this biological marvel into a mechanical quadrotor has been the ultimate goal for modern roboticists.

Core Explanation

The most prominent breakthrough in this field comes from researchers at Worcester Polytechnic Institute (WPI). Led by Dr. Nitin J. Sanket, the team developed “Saranga,” a palm-sized aerial robot that navigates entirely via ultrasound.

What It Is

Saranga is a low-power, ultrasound-based perception stack integrated into a micro-quadrotor. The project derives its name from Hindu mythology; Saranga is the celestial bow of Vishnu, symbolizing the system’s ability to pierce through impenetrable visual conditions.

How It Works

The system operates on the principles of acoustic time-of-flight. The drone continuously emits ultrasonic sound waves into the environment.

When these high-frequency waves strike a physical obstacle, they bounce back to the drone’s dual sonar array. Because the sound travels to the obstacle and back, the drone estimates the distance as half the round-trip delay multiplied by the speed of sound (about 343 m/s in air at 20 °C).
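To make the time-of-flight principle concrete, here is a minimal Python sketch that turns a sampled echo into a distance. It is not Saranga’s code: the 100 kHz sample rate, the blanking window, the detection threshold, and the temperature-based speed-of-sound approximation are all illustrative assumptions.

```python
import math

def speed_of_sound(temp_c: float) -> float:
    """Approximate speed of sound in dry air (m/s) at temp_c degrees Celsius."""
    return 331.3 * math.sqrt(1.0 + temp_c / 273.15)

def first_echo_index(samples, threshold, blanking):
    """Naive detector: index of the first sample after the blanking window
    whose magnitude exceeds threshold, or None if nothing does.
    The blanking window skips the transmitter's own ringing."""
    for i in range(blanking, len(samples)):
        if abs(samples[i]) > threshold:
            return i
    return None

def echo_distance(round_trip_s: float, temp_c: float = 20.0) -> float:
    """The pulse travels to the obstacle and back, so halve the total path."""
    return speed_of_sound(temp_c) * round_trip_s / 2.0

# Hypothetical capture: 100 kHz sampling, transmit ringing at sample 10,
# and a clean echo at sample 580 (5.8 ms after emission).
FS = 100_000
samples = [0.0] * 1000
samples[10] = 1.0
samples[580] = 0.8

idx = first_echo_index(samples, threshold=0.5, blanking=100)
distance = echo_distance(idx / FS)
print(f"echo at sample {idx}, distance {distance:.3f} m")
# prints: echo at sample 580, distance 0.995 m
```

The fixed-threshold detector only works on a clean signal like this one. With propeller noise louder than the echo, it would trigger on noise almost immediately, which is exactly the difficulty the following paragraphs describe.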

However, making this work on a drone is notoriously difficult. Quadrotor propellers spin at incredibly high speeds, generating deafening acoustic noise that completely buries the faint returning echoes.

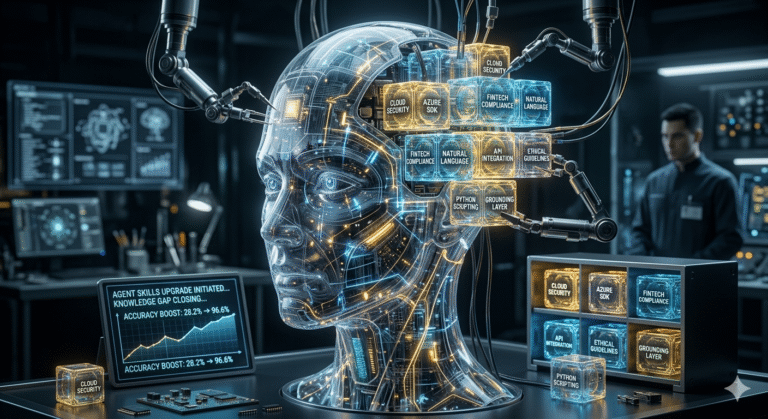

Key Components / Stakeholders

To solve this physics problem, the WPI research team implemented two revolutionary components. First, they engineered a physical acoustic shield.

Inspired by the cartilage structure of a bat’s ear, this metamaterial shield sits directly between the drone’s sonar sensors and its noisy propellers. It physically blocks a massive amount of motor interference.

Second, they deployed an advanced AI brain. The onboard computer runs a specialized deep learning network trained to hunt for legitimate echo patterns hidden deep within the remaining propeller noise.

💡 Did You Know? Bats are nature’s ultimate navigators. Despite weighing as little as two paper clips, they can detect obstacles as thin as a single human hair in pitch-black caves using only sound waves. This biological efficiency directly inspired the Saranga drone’s low-power acoustic shield!

Conceptual Breakdown

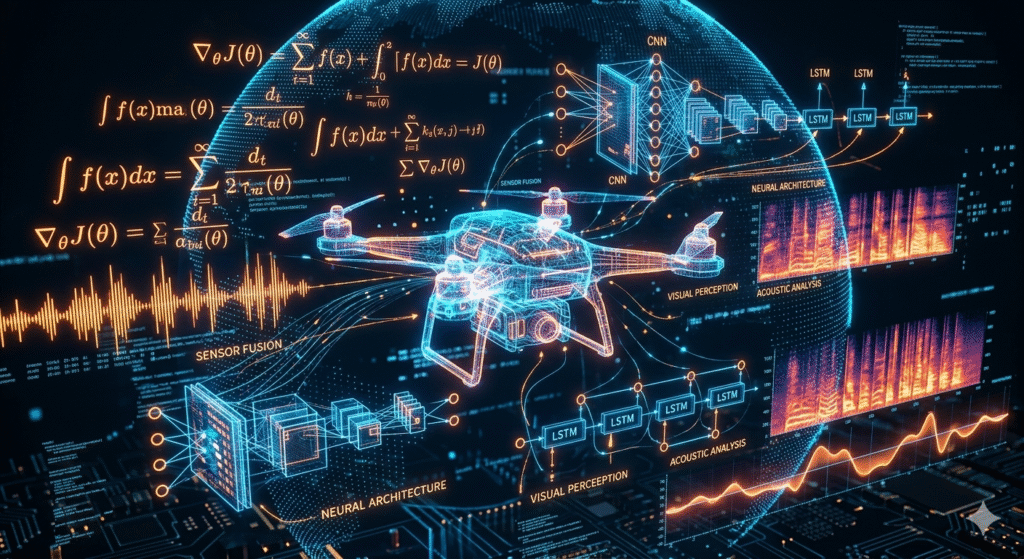

Understanding the artificial intelligence behind this system is crucial for engineering professionals and computer science researchers.

The raw acoustic data captured by the drone has a very poor Peak Signal-to-Noise Ratio (PSNR) of -4.9 decibels. A negative value means the average noise power exceeds even the peak power of the echo, and classical signal processing algorithms fail at this level of interference.
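The sketch below shows what a PSNR near -4.9 dB looks like numerically. It assumes the common definition PSNR = 10·log10(peak² / mean squared noise) and models the echo as a hypothetical 40 kHz tone burst sampled at 400 kHz and buried in white Gaussian noise. Real propeller noise is not white, and the article does not state which PSNR definition the Saranga team used.

```python
import math
import random

def tone_burst(n, fs, f0, start, cycles, amplitude=1.0):
    """Hypothetical synthetic echo: `cycles` periods of a sine at f0 Hz,
    starting at sample `start`, silence everywhere else."""
    out = [0.0] * n
    burst_len = int(round(cycles * fs / f0))
    for k in range(burst_len):
        if start + k < n:
            out[start + k] = amplitude * math.sin(2 * math.pi * f0 * k / fs)
    return out

def add_noise_for_psnr(clean, target_db, rng):
    """Add white Gaussian noise whose standard deviation makes the expected
    PSNR equal target_db (a simplifying assumption; rotor noise is not white)."""
    peak = max(abs(x) for x in clean)
    sigma = peak / 10 ** (target_db / 20)
    return [x + rng.gauss(0.0, sigma) for x in clean]

def psnr_db(clean, noisy):
    """PSNR = 10*log10(peak^2 / mean squared noise), where noise = noisy - clean."""
    peak = max(abs(x) for x in clean)
    mse = sum((y - x) ** 2 for x, y in zip(clean, noisy)) / len(clean)
    return 10 * math.log10(peak ** 2 / mse)

rng = random.Random(42)
# Assumed parameters: 40 kHz burst, 400 kHz sampling, 50 ms capture window.
clean = tone_burst(n=20_000, fs=400_000, f0=40_000, start=8_000, cycles=10)
noisy = add_noise_for_psnr(clean, target_db=-4.9, rng=rng)
print(f"measured PSNR: {psnr_db(clean, noisy):.2f} dB")  # close to -4.9
```

At this PSNR the noise power is about three times the echo’s peak power, so any amplitude threshold set above the noise floor would also sit above the echo itself.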

To overcome this, the drone utilizes deep neural networks to process time-series acoustic data.

- Time-Series Analysis: Unlike static images, acoustic echoes are continuous streams of temporal data. The drone must analyze a “long horizon” of sound to find hidden patterns.

- CNN-LSTM Architectures: Many advanced robotic systems rely on hybrid CNN-LSTM models (https://www.mdpi.com/2072-4292/15/14/3626). The CNN layers extract local features from short stretches of the sound wave, while the LSTM layers retain memory of previous acoustic states to help predict moving obstacles.

- Transformer Models: Modern iterations are moving toward Transformer architectures. These use self-attention to weigh every acoustic time step against every other one and process the whole window in parallel rather than step by step, which can reduce processing latency on parallel hardware.

- Synthetic Training: Gathering large real-world datasets, especially of collisions, is expensive. The Saranga network was trained primarily on a synthetic data generation pipeline, augmented with only limited real noise data, which suggests that simulation-to-reality training can be highly effective for this problem.
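For readers who want to see how the pieces of a CNN-LSTM fit together, the following dependency-free Python sketch runs one forward pass over a window of acoustic samples. The weights are random and untrained, the layer sizes are arbitrary, and the article does not specify Saranga’s actual architecture, so treat this purely as an illustration of data flow. A practical model would be built and trained in a framework such as PyTorch (`torch.nn.Conv1d`, `torch.nn.LSTM`).

```python
import math
import random

def conv1d_relu(signal, kernels, stride):
    """Valid 1-D convolution followed by ReLU.
    Returns one feature vector (one value per kernel) per output frame."""
    k = len(kernels[0])
    frames = []
    for start in range(0, len(signal) - k + 1, stride):
        window = signal[start:start + k]
        frames.append([max(0.0, sum(w * x for w, x in zip(kern, window)))
                       for kern in kernels])
    return frames

def sigmoid(x):
    # Numerically stable logistic function
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)

class LSTMCell:
    """Single LSTM cell with input, forget, candidate, and output gates."""

    def __init__(self, n_in, n_hidden, rng):
        self.n_hidden = n_hidden
        # One weight row per gate unit, applied to the concatenation [x, h].
        self.W = [[rng.gauss(0.0, 0.1) for _ in range(n_in + n_hidden)]
                  for _ in range(4 * n_hidden)]
        self.b = [0.0] * (4 * n_hidden)

    def step(self, x, h, c):
        z = x + h  # list concatenation [x, h]
        pre = [sum(w * v for w, v in zip(row, z)) + b
               for row, b in zip(self.W, self.b)]
        H = self.n_hidden
        i = [sigmoid(p) for p in pre[:H]]             # input gate
        f = [sigmoid(p) for p in pre[H:2 * H]]        # forget gate
        g = [math.tanh(p) for p in pre[2 * H:3 * H]]  # candidate cell state
        o = [sigmoid(p) for p in pre[3 * H:]]         # output gate
        c = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c, i, g)]
        h = [oj * math.tanh(cj) for oj, cj in zip(o, c)]
        return h, c

def cnn_lstm_forward(signal, kernels, stride, cell, head):
    """CNN extracts local features per frame; the LSTM carries state across
    frames; a linear head maps the final hidden state to one output value."""
    h = [0.0] * cell.n_hidden
    c = [0.0] * cell.n_hidden
    for frame in conv1d_relu(signal, kernels, stride):
        h, c = cell.step(frame, h, c)
    return sum(w * v for w, v in zip(head, h)), h

rng = random.Random(0)
signal = [rng.gauss(0.0, 1.0) for _ in range(256)]  # stand-in acoustic window
kernels = [[rng.gauss(0.0, 0.3) for _ in range(16)] for _ in range(8)]
cell = LSTMCell(n_in=8, n_hidden=12, rng=rng)
head = [rng.gauss(0.0, 0.1) for _ in range(12)]
output, hidden = cnn_lstm_forward(signal, kernels, stride=8, cell=cell, head=head)
print(len(conv1d_relu(signal, kernels, 8)), "frames ->", len(hidden), "hidden units")
# prints: 31 frames -> 12 hidden units
```

The convolution turns 256 raw samples into 31 frames of 8 features each; the LSTM then folds those frames, in order, into a single hidden state, which is how such a model carries information across a long horizon of sound.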

Real-World Examples

The theoretical genius of ultrasound navigation translates into massive operational capabilities for real-world disaster management.

The Silkyara Tunnel Rescue

In November 2023, the Silkyara tunnel in Uttarakhand, India, suffered a catastrophic collapse, trapping 41 workers in a roughly two-kilometer stretch of tunnel behind a wall of unstable debris.

During the exhaustive rescue, agencies like the National Disaster Response Force (NDRF) relied heavily on drones to monitor the site and attempt ingress. However, collapsed tunnels are GPS-denied, pitch-black, and filled with blinding construction dust.

Traditional visual drones struggle immensely in these jagged, featureless voids. An ultrasound-guided micro-drone, however, could autonomously map the rubble piles and find a safe ingress route using sound waves, completely unbothered by the darkness.

Underground Coal Mine Inspections

Subterranean coal mining is arguably the most hazardous industrial sector. Following a seismic event, human rescue teams face the immediate threat of toxic gas pockets and secondary collapses.

Mining engineers desperately need robotic assistance for initial site assessments. Yet optical drones cannot see through dense coal dust, and their power-hungry electronics can become ignition sources for volatile mine gases.

A camera-less drone with a 1.2-milliwatt ultrasound payload provides a lightweight, lower-risk alternative, since its sensing hardware adds almost nothing to the drone’s electrical load. It can map the structural integrity of the mine shaft entirely via sound, keeping human responders out of harm’s way.

Advantages

The transition toward camera-less acoustic perception brings a multitude of operational advantages:

- Extreme Power Efficiency: The Saranga ultrasound system operates on just 1.2 milliwatts of power. This represents a 1000× power reduction compared to bulky LiDAR systems, leaving more of the battery for propulsion and extending flight time.

- Unrestricted Environmental Flight: Supply chain and medical delivery drones are frequently grounded by bad weather. Because ultrasonic obstacle sensing does not depend on light, it can keep autonomous flight going through thick fog, heavy snowfall, and nightfall.

- Cost Democratization: Precision LiDAR arrays cost thousands of dollars. Ultrasonic sensors cost a fraction of that, offering a 100× cost reduction that makes autonomous safety tech accessible to developing nations.

- Weight Reduction: Shedding heavy camera gimbals and laser spinners allows manufacturers to build much smaller drones. These micro-drones can easily navigate through tight disaster rubble or dense industrial piping.
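How much does the sensor’s power budget matter for endurance? The back-of-the-envelope Python sketch below compares hover time with a 1.2 mW ultrasonic payload against a LiDAR-class payload drawing 1000× more (1.2 W, inferred from the ratio quoted for Saranga). The 1.0 Wh battery and 8 W hover power are hypothetical figures for a palm-sized quadrotor, and the model ignores weight savings, which would widen the gap further.

```python
def hover_minutes(battery_wh: float, hover_w: float, sensor_w: float) -> float:
    """Idealized hover endurance: usable battery energy / total electrical power."""
    return battery_wh * 60.0 / (hover_w + sensor_w)

# Hypothetical palm-sized quadrotor: 1.0 Wh usable battery, 8 W to hover.
BATTERY_WH = 1.0
HOVER_W = 8.0
ULTRASOUND_W = 1.2e-3          # Saranga figure quoted in the article
LIDAR_W = ULTRASOUND_W * 1000  # assumed baseline implied by the 1000x ratio

t_ultra = hover_minutes(BATTERY_WH, HOVER_W, ULTRASOUND_W)
t_lidar = hover_minutes(BATTERY_WH, HOVER_W, LIDAR_W)
print(f"ultrasound: {t_ultra:.2f} min, LiDAR-class: {t_lidar:.2f} min, "
      f"gain: {100 * (t_ultra / t_lidar - 1):.0f}%")
# prints: ultrasound: 7.50 min, LiDAR-class: 6.52 min, gain: 15%
```

Under these assumptions the ultrasonic payload buys roughly 15% more hover time. Propulsion still dominates the power budget, so the smaller the drone and its motors, the larger the sensor’s share and the more the savings matter.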

Criticism

Despite its revolutionary potential, acoustic drone navigation faces several distinct engineering challenges.

First, ultrasonic sensors have a relatively short detection range. While heavy LiDAR can map terrain from hundreds of meters away, micro-ultrasound sensors are typically limited to shorter proximities (often under 10 meters).

Second, sound waves are susceptible to physical absorption. Hard surfaces like concrete reflect sound beautifully, but soft, porous materials—like thick fabric or deep snowbanks—can absorb the ultrasound waves, weakening the return echo and causing blind spots.

Finally, the computational load required for onboard AI denoising is significant. Running advanced CNN-LSTM networks in real-time requires powerful, lightweight flight controllers, which drives up the complexity of the internal software architecture.

Global Implications

For policymakers and administrators—particularly those studying for the UPSC GS Paper III (Science & Technology and Disaster Management)—this technology represents a crucial paradigm shift in governance.

India has cultivated a rapidly expanding drone ecosystem, boasting over 38,500 registered UAVs. This growth is fueled by progressive regulations like the Drone Rules of 2021, which made the Digital Sky platform the single-window online system for drone approvals, followed by an airspace map that classified nearly 90% of Indian airspace as accessible green zones.

Camera-less navigation aligns well with major Indian policy initiatives. For instance, the SVAMITVA scheme currently relies on visual drones for rural land mapping. Adding ultrasonic obstacle sensing could help these survey drones fly safely at low altitude in cluttered or poorly lit areas, such as beneath dense tree canopies.

Furthermore, the Namo Drone Didi scheme deploys agricultural drones for crop management. Ultrasonic altitude sensing could help these drones hold a precise, consistent height over uneven, dusty farmland, preventing costly crashes.

Crucially, camera-less drones address one of the biggest civilian privacy concerns. Drones equipped with high-resolution telephoto lenses can easily capture personal data, which creates compliance problems under the General Data Protection Regulation (GDPR).

By adopting a “Privacy by Design” approach, camera-less drones physically eliminate the risk of facial recognition and camera-based surveillance. The drone simply registers a human as a spatial acoustic obstacle, completely ignoring their identity.

Outlook

The next decade of autonomous flight will be defined by sensor fusion and next-generation connectivity.

The rollout of 6G networks will introduce Integrated Sensing and Communication (ISAC). ISAC allows the same radio frequencies used for telecom to double as a radar system, mapping the environment. By combining 6G ISAC data with onboard ultrasound, drones will achieve hyper-accurate spatial awareness without a single camera.

Furthermore, the shift from monolithic chips to chiplets (https://blog.aquartia.in/index.php/2026/04/01/end-of-monolithic-chips-chiplets-are-rewriting-future-of-computing/) will allow engineers to embed dedicated AI accelerators directly onto the drone’s motherboard. This edge-computing power will process acoustic echoes onboard in real time, removing the need for high-latency cloud connections.

Finally, camera-less tech is ideal for drone swarms. As analyzed in the article on data-driven warfare and AI (https://blog.aquartia.in/index.php/2026/03/24/data-driven-warfare-and-ai-are-rewriting-rules-of-global-conflict/), coordinating hundreds of optical drones requires massive video bandwidth. Acoustic telemetry requires tiny data packets, allowing large military or rescue swarms to coordinate flight paths seamlessly, just like a flock of birds.

🛠 Expert Tip:

When designing automated systems for industrial inspections, always employ multi-modal sensor fusion. Combining short-range ultrasound with long-range radar creates a robust perception net that keeps working even when optical cameras fail in heavy dust.
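One simple way to combine ranges from several sensors is inverse-variance weighting, sketched below in Python. It assumes each sensor’s error is independent, unbiased, and roughly Gaussian with a known standard deviation. The readings and error figures are hypothetical, and real perception stacks usually run a Kalman filter or a similar estimator over time rather than fusing single snapshots.

```python
from typing import Optional, Sequence, Tuple

# (range in metres, or None if the sensor has no valid reading; 1-sigma error in metres)
Reading = Tuple[Optional[float], float]

def fuse_ranges(readings: Sequence[Reading]) -> Optional[Tuple[float, float]]:
    """Inverse-variance weighted fusion of independent range readings.
    Sensors that returned None (e.g. a camera blinded by dust) are skipped.
    Returns (fused range, fused 1-sigma error), or None if no sensor is valid."""
    valid = [(r, s) for r, s in readings if r is not None]
    if not valid:
        return None
    weights = [1.0 / s ** 2 for _, s in valid]
    total = sum(weights)
    fused = sum(w * r for w, (r, _) in zip(weights, valid)) / total
    return fused, (1.0 / total) ** 0.5

# Hypothetical readings in heavy dust: camera depth is unavailable.
readings = [
    (2.00, 0.02),   # short-range ultrasound: precise up close
    (2.10, 0.10),   # radar: coarser, but longer range
    (None, 0.05),   # camera depth: no valid estimate
]
print(fuse_ranges(readings))
```

The fused estimate leans toward the more precise ultrasonic reading, and the blinded camera simply drops out instead of corrupting the result.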

Comparison Table: Drone Perception Sensors

| Feature | Ultrasonic Sensing (e.g., Saranga) | LiDAR (Light Detection and Ranging) | Radar (Radio Waves) |
| --- | --- | --- | --- |
| Operating Principle | High-frequency sound waves | Laser light pulses | Electromagnetic radio waves |
| Power Consumption | Ultra-Low (~1.2 milliwatts for Saranga) | Extremely High | Moderate to High |
| Performance in Smoke/Fog | Excellent (Penetrates particles) | Poor (Severe scattering) | Excellent |
| Performance on Transparent Glass | Excellent (Reflects sound) | Poor (Light passes through) | Good |
| Hardware Weight | Negligible (Micro-sensors) | Heavy (Spinning or scanning units) | Moderate |
| Data Privacy | Very High (No optical data) | High (Structural data) | High |

Conclusion

The implications of camera-less drone navigation stretch far beyond laboratory demonstrations. For emergency responders digging through the terrifying rubble of a collapsed mine, it offers a lifeline.

For privacy advocates battling the endless expansion of urban surveillance, it offers a highly ethical technological alternative. And for nations seeking to scale their commercial and military drone ecosystems, it provides unmatched, all-weather resilience.

As 6G networks and edge-AI chips mature, the skies of tomorrow will not be filled with flying cameras, but with highly intelligent, self-contained agents navigating the unseen world with the effortless precision of a bat in the night.

FAQ SECTION

1. How do camera-less drones navigate in total darkness?

Camera-less drones use alternative perception technologies, primarily ultrasonic sensors (echolocation) or radar. By continuously emitting high-frequency sound waves or radio pulses and measuring the precise time it takes for those echoes to return, the drone’s onboard computer constructs an accurate spatial map. This allows the drone to autonomously dodge obstacles and chart flight paths without needing any ambient light or optical cameras.

2. What is the Saranga drone and who developed it?

Saranga is a revolutionary, palm-sized experimental quadrotor developed by a research team at Worcester Polytechnic Institute (WPI), led by Dr. Nitin J. Sanket. It navigates visually degraded environments entirely through ultrasound. It utilizes a bat-inspired physical acoustic shield to block its own propeller noise and a specialized deep learning neural network to filter out remaining interference, operating on just 1.2 milliwatts of power.

3. Why is ultrasound better than LiDAR in foggy conditions?

LiDAR uses millions of laser light pulses to measure distance. However, in dense fog, smoke, or heavy snow, airborne water droplets and particulates scatter the light beams in all directions, severely degrading LiDAR’s range measurements. Ultrasound relies on sound waves whose wavelengths (millimeters) are far larger than typical fog droplets (micrometers), so they pass through suspended particles with little scattering, allowing the drone to maintain its spatial awareness in the harshest visual environments.

4. How do drone propellers affect ultrasound sensors?

Quadrotor propellers spin at exceptionally high speeds, generating massive amounts of acoustic interference and wind noise. This deafening noise easily drowns out the incredibly faint ultrasonic echoes bouncing back from obstacles (resulting in a negative Peak Signal-to-Noise Ratio). To fix this, drones like Saranga use a physical metamaterial shield placed between the sensors and propellers, coupled with aggressive AI denoising software.

5. What are the privacy benefits of camera-less drones under GDPR?

Drones equipped with high-resolution optical cameras frequently capture Personally Identifiable Information (PII), such as faces, window interiors, and license plates, which can create serious GDPR compliance problems. Camera-less drones use sound waves, meaning they only detect physical geometry (a solid object to avoid). Because they physically lack the hardware to record optical data, they eliminate the most privacy-sensitive data source and make legal compliance far simpler.

6. How are drones utilized in disaster management in India?

The Indian government and the National Disaster Response Force (NDRF) actively deploy drones for real-time terrain mapping, locating trapped victims, and delivering emergency supplies. During events like the Silkyara tunnel collapse, drones proved vital for monitoring. Adopting non-visual, ultrasonic drones could substantially enhance India’s disaster capabilities, allowing rescue operations to continue despite heavy monsoon fogs or deep, dark subterranean collapses.

7. What is the role of AI and deep learning in drone echolocation?

Because drone motors create overwhelming background noise, raw acoustic data is too chaotic for standard navigation software. Drones use deep learning—specifically Convolutional Neural Network-Long Short-Term Memory (CNN-LSTM) and Transformer architectures—to process the sound in real-time. The AI is trained to recognize the hidden, specific patterns of legitimate obstacle echoes buried deep within the drone’s own mechanical noise.

8. Can ultrasound drones be used in underground coal mines?

Absolutely. Traditional visual drones fail in underground mines due to total darkness, thick airborne coal dust, and a lack of GPS signals. Furthermore, their power-hungry electronics can become ignition sources for combustible mine gases. A micro-drone equipped with a low-power ultrasound payload reduces that risk and is highly effective. It can map the structural integrity of a collapsed shaft entirely via sound, protecting human rescue teams.

9. How will 6G technology impact future drone navigation?

The upcoming 6G telecommunications network will introduce Integrated Sensing and Communication (ISAC). This means the exact same radio waves used to transmit internet data will double as an environmental radar system. By combining 6G ISAC data with onboard ultrasonic sensors, future drones will achieve hyper-accurate, multi-layered spatial awareness, allowing massive swarms of drones to coordinate seamlessly without ever activating a camera.

10. What are the limitations of ultrasonic drone sensors?

While incredibly power-efficient, ultrasonic sensors have limitations. They possess a relatively short detection range (usually under 10 meters) compared to the long-range capabilities of heavy LiDAR. Additionally, while sound waves reflect strongly off hard surfaces like concrete, soft or porous materials (like thick fabric or deep snow) can absorb the sound waves, weakening the return echo and potentially causing navigational blind spots.
