Key Takeaways Box

- Physical Limits Reached: Monolithic chips are no longer economically viable for cutting-edge computing due to massive defect rates and reticle size limitations.

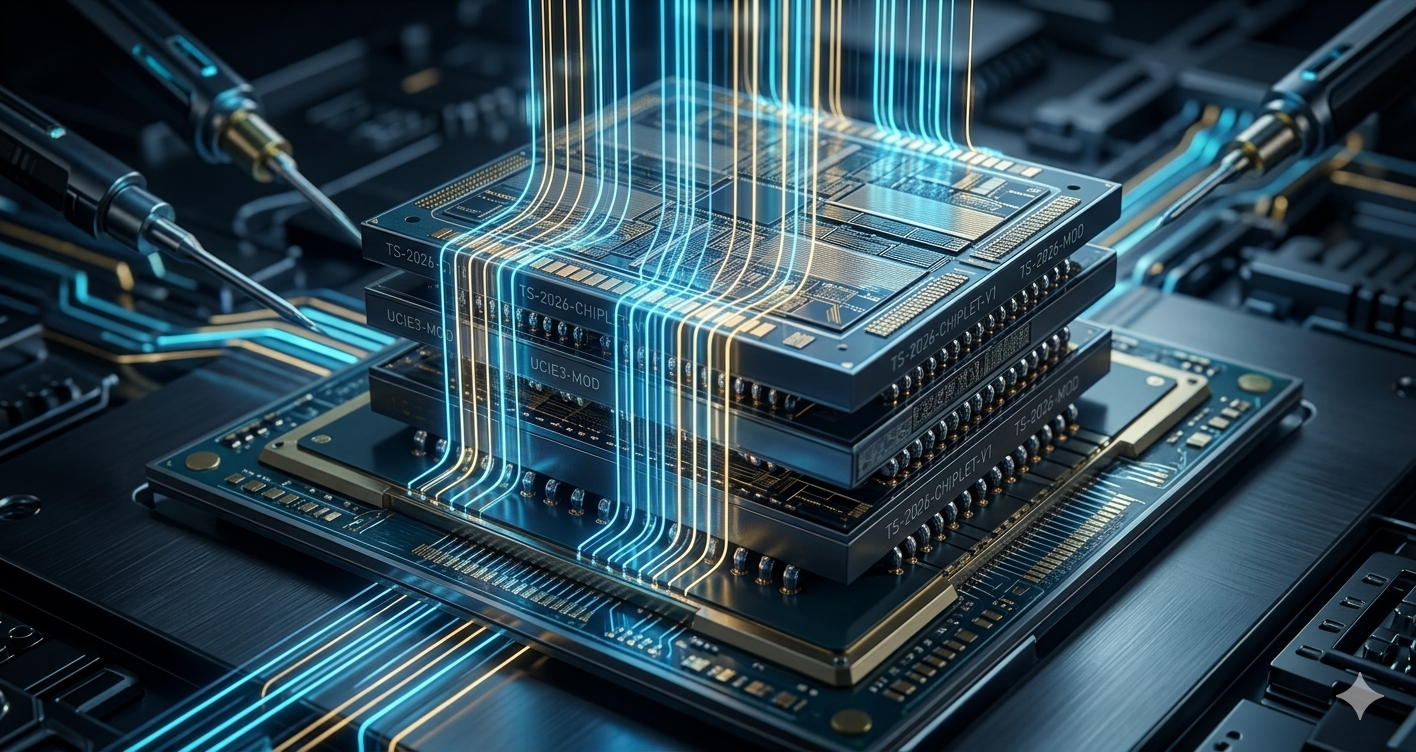

- The Power of Modularity: Chiplets allow the combination of logic, memory, and networking from different manufacturers and process nodes into a single, high-yield package.

- Standardization is Key: The UCIe 3.0 standard guarantees 64 GT/s interoperability, paving the way for an open, vendor-agnostic hardware ecosystem.

- Economic Shift: AI hardware, driven by advanced packaging, is projected to push the semiconductor industry to a $1.6 trillion valuation by 2030.

- Geopolitical Impact: The complexity of assembling multi-die systems creates new supply chain chokepoints, prompting aggressive national infrastructure programs like India’s ISM 2.0.

For decades, the technology industry operated on a simple, unspoken rule: to make computers faster, you pack more microscopic transistors onto a single, solid piece of silicon. This approach, known as monolithic chip design, gave us the smartphone revolution, the internet age, and the dawn of artificial intelligence.

But in 2026, the laws of physics have finally caught up with us. The era of the giant, monolithic chip is effectively over.

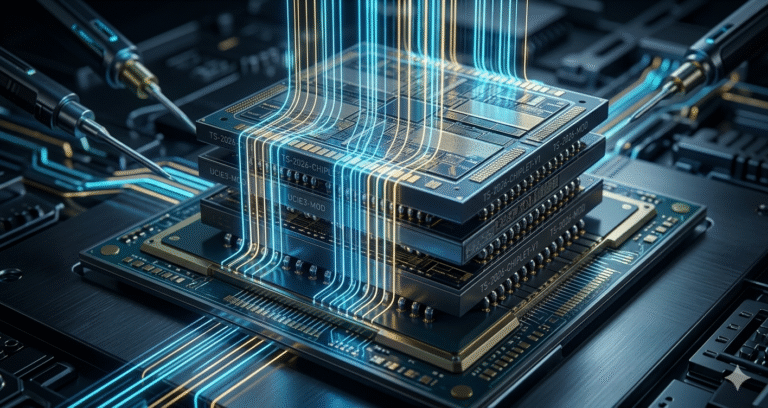

We have reached a hard physical barrier where shrinking transistors further on a single die is becoming economically impossible and prone to massive defect rates. The solution rewriting the future of hardware is “Chiplet Architecture.” Instead of relying on one massive brain, engineers are now splitting processors into smaller, highly specialized “tiles” or “chiplets” that are stitched together with molecular precision. This modular approach is not just a passing trend; it is the definitive foundation for the next century of computing.

Why This Topic Matters Today

The transition toward modular silicon is driven by an unprecedented convergence of technological necessity and geopolitical strategy. The exponential growth of computing workloads requires massive datasets and complex neural networks. These applications demand compute capabilities that traditional monolithic dies can no longer physically or economically provide.

At the same time, global supply chains are experiencing intense fragmentation. Control over advanced semiconductor manufacturing is now viewed as a critical pillar of national security. Nations are scrambling to secure domestic supply chains because relying on a single overseas factory for monolithic chips is a strategic vulnerability.

In 2026, chiplet architecture allows manufacturers to bypass advanced process bottlenecks, mitigate the financial risks of multi-million-dollar manufacturing masks, and establish resilient, localized supply chains. Understanding this paradigm shift is essential for engineers designing tomorrow’s hardware, policymakers safeguarding digital sovereignty, and anyone invested in the future of the digital economy.

Key Highlights

- Manufacturing Efficiency: Disaggregating large monolithic designs into smaller chiplets drastically improves manufacturing yields, reducing overall development costs by 30-40% for complex systems.

- Standardization Breakthroughs: The Universal Chiplet Interconnect Express (UCIe) 3.0 specification now delivers 64 GT/s speeds, establishing an open ecosystem that ends proprietary vendor lock-in.

- AI Infrastructure Demand: The AI chip market is projected to reach $500 billion in 2026, making advanced packaging and High-Bandwidth Memory (HBM) integration absolutely critical.

- Geopolitical Powerplays: The India Semiconductor Mission (ISM) 2.0 and the ₹33,600 crore BHAVYA scheme highlight aggressive national strategies to build robust, indigenous semiconductor ecosystems based on modular packaging.

- Advanced Packaging Dominance: 2.5D silicon interposers and 3D heterogeneous integration using hybrid bonding are replacing traditional circuit boards, achieving terabyte-per-second communication speeds.

Background

The historical trajectory of semiconductor design has been governed by Moore’s Law, an observation predicting the predictable shrinking of transistors every two years. For fifty years, this scaling led to simultaneous improvements in performance, power reduction, and cost efficiency. Manufacturers capitalized on this by designing massive monolithic chips that integrated logic, memory, and networking onto a single silicon die.

However, scaling below the 5-nanometer (nm) process node introduced severe technical friction.

The primary barrier is the “reticle limit.” This is the maximum physical area—typically around 858 square millimeters—that a photolithography scanner can expose onto a silicon wafer. Modern AI accelerators require transistor counts exceeding 100 billion, pushing designs hard against this physical boundary.

Furthermore, as die sizes increase, the probability of a microscopic manufacturing defect ruining the entire chip rises exponentially. Following the Bose-Einstein yield model, large monolithic dies suffer from prohibitively low yields, rendering them economically unviable. Compounding this issue is the stagnation of certain silicon components. While computational logic continues to scale down to 3nm and 2nm, Static Random-Access Memory (SRAM) and analog input/output (I/O) components effectively stopped scaling at the 5nm node.

Producing an entire monolithic chip on a cutting-edge 3nm node—when half the die consists of non-scaling SRAM—represents a massive misallocation of capital. The industry required a solution that decoupled these components.

Did You Know? Leading-edge mask sets for large designs near the reticle limit can cost between $30 million and $50 million just to set up the manufacturing line. A single flaw in a monolithic design means that entire investment is wasted.

Core Explanation

What is Chiplet Architecture?

Chiplet architecture is a design methodology where a system is constructed from multiple smaller, specialized, and unpackaged integrated circuits, rather than a single large piece of silicon. These individual components are known as chiplets.

Each chiplet is optimized for a specific function. One chiplet might handle processing power, another might handle memory storage, and another might route internet traffic. Once manufactured, these modular blocks are assembled closely together within a single advanced package. To your computer’s operating system, the packaged unit functions seamlessly, acting identically to a traditional monolithic processor.

How It Works: The Art of Assembly

The viability of a modular system relies entirely on the speed and efficiency with which the individual dies communicate. If the data transfer between chiplets is too slow, or if it consumes too much power, the advantages of modularity are completely lost.

To overcome this, engineers utilize advanced die-to-die (D2D) interconnects. These interfaces route data across micro-bumps and specialized wiring embedded in the packaging substrate beneath the chips. By bringing the dies physically closer together—often separated by fractions of a millimeter—the electrical signals travel shorter distances. This minimizes signal degradation, drastically reduces latency, and lowers the power required to drive the communication bus.

Key Components and Stakeholders

The shift to modular design has transformed the global semiconductor supply chain, demanding deep collaboration across a broader ecosystem:

- Foundries (e.g., TSMC, Samsung): The massive factories responsible for fabricating the raw, individual dies across various process nodes.

- Fabless Designers (e.g., AMD, Nvidia, Apple): The architects who design the specialized computing blocks but do not manufacture them.

- OSATs (Outsourced Semiconductor Assembly and Test): Companies critical for assembling the multiple disparate dies into a final, complex package using advanced methodologies.

- IP Providers: Organizations developing the standardized communication protocols and interconnect designs that allow different chiplets to interface securely.

Conceptual Breakdown

The successful implementation of modular silicon depends heavily on two foundational engineering pillars: advanced packaging techniques and high-speed interconnect standards.

Advanced Semiconductor Packaging (2.5D and 3D)

Traditional 2D packaging places chips side-by-side on a standard printed circuit board (PCB). This introduces high latency and footprint constraints because the signals must travel relatively long distances. Advanced packaging solves this through high-density proximity.

In 2.5D Integration, multiple dies are placed side-by-side atop a foundational layer called a silicon interposer. The interposer acts as a high-density communication bridge. It contains microscopic wiring and Through-Silicon Vias (TSVs) that route electrical signals and power to the underlying substrate. This configuration allows logic processors to sit immediately adjacent to High-Bandwidth Memory (HBM), facilitating the massive memory speeds required by AI accelerators.

Taking this a step further, 3D Heterogeneous Integration (3DHI) elevates density by stacking chiplets vertically. By utilizing TSVs or advanced hybrid bonding techniques—where copper pads are fused directly without traditional solder bumps—vertical stacking eliminates inter-chip communication bottlenecks almost entirely. This significantly reduces the physical footprint and improves power efficiency by ensuring the absolute shortest possible distance for signal traversal.

The Universal Chiplet Interconnect Express (UCIe 3.0)

Historically, large companies developed proprietary interconnects to link their modular designs. In an effort to democratize the technology, an industry consortium established the Universal Chiplet Interconnect Express (UCIe) standard.

The recent release of the UCIe 3.0 specification in 2026 marks a watershed moment for the industry. This open standard allows a chiplet made by Intel to communicate seamlessly with a chiplet made by AMD.

| UCIe 3.0 Technical Specification | Details and Industry Impact |

| Maximum Bandwidth | Delivers massive 48 GT/s and 64 GT/s speeds, doubling the previous 2.0 generation. |

| Signaling Mechanics | Uses Quarter-Rate Signaling (QDR) to capture data on multiple clock edges, ensuring stability at high speeds. |

| Power Efficiency | Targets an ultra-low 0.75 picojoules per bit (pJ/bit), crucial for energy-hungry data centers. |

| Manageability | Extends sideband channels to 100mm, allowing complex star-topology connections and priority thermal throttling. |

| Firmware Booting | Allows firmware to distribute across all chiplets instantly, bypassing legacy hardware startup delays. |

Real-World Examples

The practical application of modular silicon is highly visible across leading technology developers, defining the high-performance hardware landscape in 2026.

NVIDIA: Blackwell and Rubin Platforms

Nvidia’s architectural evolution highlights the necessity of modularity for complex training and inference workloads. The Blackwell (B200) architecture utilized a dual-die package where two reticle-limit dies behaved as a single logical GPU connected via a 10 TB/s proprietary interconnect.

However, the subsequent Rubin (R100) platform pushes the boundaries further. Expected to deliver a massive 336 billion transistors, Rubin transitions to next-generation HBM4 memory, delivering up to 22 TB/s of bandwidth per GPU. By utilizing an advanced modular framework and NVLink 6, Rubin significantly lowers the inference token cost and reduces the hardware footprint required to train massive models.

AMD: The Instinct MI300 Series

AMD’s Instinct MI300X illustrates the economic and structural advantages of a highly disaggregated design. The processor incorporates four central I/O dies manufactured on a mature 6nm process, with eight high-performance compute chiplets stacked vertically on top using 3D packaging.

This extreme decoupling yields massive financial advantages. By keeping the logic die sizes small, AMD achieves an estimated 77% yield on a 300mm wafer, compared to an estimated 50% for larger monolithic equivalents. Furthermore, the MI300 explicitly exposes its Non-Uniform Memory Access (NUMA) characteristics to developers, allowing for architecture-aware software optimizations that maintain exceptional processing efficiency.

Apple: UltraFusion Technology

Apple’s approach to the M-series processors utilizes a custom chip-to-chip communication architecture named UltraFusion. To create top-tier workstation processors, Apple essentially joins two smaller “Max” dies together. A silicon interposer bridges the two halves, acting transparently so the operating system recognizes a single unified chip. This allows Apple to scale performance linearly without designing an entirely separate, massive die architecture.

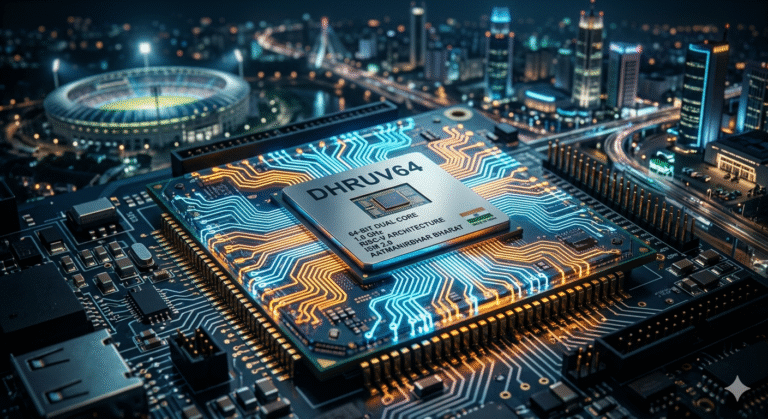

India’s Indigenous Push: C-DAC DHRUV64

In the realm of national technological sovereignty, India’s Centre for Development of Advanced Computing (C-DAC) recently launched the DHRUV64 microprocessor. Developed under the Microprocessor Development Programme and the Digital India RISC-V (DIR-V) initiative, Dhruv64 is an indigenous 1.0 GHz, 64-bit dual-core processor designed for industrial automation, IoT, and embedded systems.

While currently relying on mature node paradigms (28nm with 30 million gates), the open-source nature of the RISC-V instruction set creates a foundation for Indian startups and academia to experiment with future indigenous modular designs. Developing sovereign hardware is critical to supporting Generative AI models without relying on imported silicon.

Advantages

The shift to modular integration offers profound industrial and financial benefits across the board.

Because defect probability scales with die size, manufacturing smaller elements drastically increases the number of viable chips per wafer. Economic models indicate this can slash development costs by 30% to 40%. Designers are also no longer forced to manufacture simple components on ultra-expensive 3nm nodes. They can mix 3nm logic cores with 6nm I/O controllers and HBM stacks from entirely different foundries, optimizing both cost and performance.

This modular nature enables parallel development teams to work on different functional blocks simultaneously. Reusable Intellectual Property (IP) tiles can be swapped into new product lines without undertaking entirely new silicon designs, significantly reducing Non-Recurring Engineering (NRE) costs. Vertical 3D stacking also shortens physical interconnect lengths dramatically, leading to lower signal losses, reduced latency, and a highly efficient power envelope.

Criticism

Despite the overwhelming advantages, the disaggregation of silicon introduces a host of complex engineering and supply chain hurdles that the industry is still wrestling with in 2026.

The financial savings generated by higher silicon yields are partially offset by the immense expense of advanced packaging. Silicon interposers and hybrid bonding require specialized, highly precise manufacturing environments, increasing reliance on a limited number of top-tier OSAT providers.

Furthermore, stacking high-performance computing dies vertically creates unprecedented thermal density. Dissipating heat from the bottom layers of a 3D package through the upper layers remains a critical physics challenge, often requiring advanced, expensive liquid cooling systems in data centers.

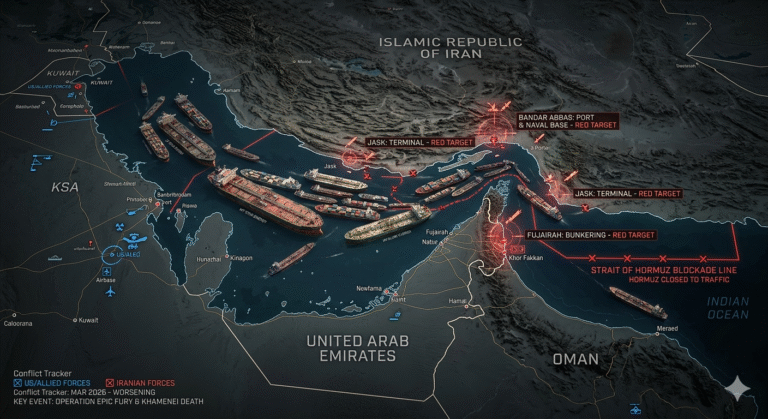

In a modular system, comprehensive testing must occur before assembly to ensure only “Known Good Dies” (KGD) are packaged. If one defective block is integrated, the entire expensive package fails, complicating the quality control pipeline. Finally, the reliance on diverse vendors for different tiles creates significant geopolitical chokepoints. An interruption at any point in the multi-vendor assembly line can halt production entirely.

Global Implications

Semiconductors dictate the pace of modern economic and military supremacy. Consequently, the supply chain is heavily influenced by policy and national security directives. The global market is increasingly fragmented by trade restrictions.

Access to Extreme Ultraviolet (EUV) lithography equipment and sub-3nm Electronic Design Automation (EDA) software is heavily restricted by governments seeking to protect strategic advantages. These bottlenecks compel nations to pursue domestic self-sufficiency. Because packaging logic and HBM together is crucial for high-end computing, locations performing advanced packaging are scrutinized, potentially forcing industry players to restructure supply lines toward “friend-shoring”.

The India Semiconductor Mission (ISM 2.0)

Recognizing the strategic vulnerability of import reliance, India has aggressively pivoted from policy intent to execution scale in 2026.

The Union Budget 2026-27 allocated ₹1,000 crore for the India Semiconductor Mission (ISM) 2.0, signaling a decisive push beyond basic fabrication toward building a holistic ecosystem. The broader framework includes an ₹8,000 crore outlay focusing on expanding the Design Linked Incentive (DLI) scheme to support fabless startups and develop full-stack Indian semiconductor IP.

A major component is the ATMP/OSAT Scheme, which targets the approval of 9 new units, driving an expected ₹11,000 crore in investments and generating thousands of specialized jobs. Projects in Gujarat and Assam focus heavily on flip-chip and integrated system-in-package (ISIP) technologies to secure the backend of the supply chain.

Coupled with the massive ₹33,600 crore BHAVYA Scheme designed to fortify electronics components and industrial parks, India is establishing itself as a critical node in the global hardware supply chain. These policy frameworks ensure that India not only absorbs technology but actively participates in the era of autonomous Agentic AI by controlling the underlying hardware that powers it.

Outlook

The technological trajectory for the remainder of the decade indicates a complete saturation of modular architectures within high-performance sectors.

As traditional transistor scaling yields diminishing returns, the industry will rely almost entirely on spatial scaling and architectural optimization to deliver performance gains. To handle the massive data traffic between high-performance chips, traditional copper pathways will be replaced. Co-Packaged Optics (CPO) and Linear Pluggable Optics (LPO) will integrate photonic light connections directly into the logic packages, drastically improving bandwidth and lowering power consumption.

Additionally, the sheer complexity of routing signals through 2.5D and 3D packages will increasingly require advanced machine learning software. The industry will utilize intelligent algorithms to accelerate the physical design and verification cycles of these highly complex heterogeneous systems.

Comparison Table

To understand how chiplet architecture transforms the manufacturing process, it is critical to compare it directly against the legacy monolithic approach.

| Feature Metric | Monolithic Architecture | Chiplet Architecture |

| Manufacturing Yield | Very low for large dies; a single defect wastes the entire expensive silicon wafer. | High; small dies have fewer defects, and only Known Good Dies (KGD) are assembled. |

| Process Node Efficiency | Forces all components (Logic, SRAM, I/O) onto a single, ultra-expensive process node. | Heterogeneous; uses leading-edge nodes for logic, and mature/cheaper nodes for I/O. |

| Design Scalability | Rigid; any upgrade requires a complete redesign and a massive $30M+ mask set. | High; individual blocks can be swapped, reused, or upgraded independently (IP reuse). |

| Packaging Complexity | Low; utilizes traditional flip-chip or wire-bonding techniques. | Extremely High; requires advanced 2.5D/3D interposers, TSVs, and hybrid bonding. |

| Thermal Density | Moderate; heat is distributed across a large, flat surface area. | Severe; heat traps between vertically stacked logic layers requiring advanced cooling. |

Conclusion

The era of the single, monolithic slab of silicon acting as the undisputed engine of computing is officially over. In its place, the semiconductor industry has embraced an ecosystem of highly specialized, closely integrated functional tiles. This architectural revolution guarantees that while the traditional interpretation of Moore’s Law may be faltering, the exponential scaling of computational power will continue uninterrupted.

As digital infrastructures scale from basic data processing to complex autonomous systems, the hardware underneath will rely entirely on the efficiency of 2.5D and 3D modular packaging. The defining competitive metric for the next decade will not be how many transistors can be etched onto a single die, but how elegantly and efficiently those disparate dies can be stitched together to form a cohesive whole.

FAQ Section

1. What is the fundamental difference between monolithic and chiplet architecture? Monolithic architecture integrates all computing functions (CPU, GPU, memory controllers) onto a single, large piece of silicon. Conversely, chiplet architecture breaks these functions into smaller, modular dies. These smaller components are manufactured separately and then interconnected closely within an advanced package, improving yields and lowering costs.

2. Why is the semiconductor industry moving away from monolithic chips? As transistor sizes shrink below 5nm, manufacturing large monolithic dies becomes economically prohibitive. Large chips frequently exceed photolithography reticle limits and suffer from high defect rates. Modular design solves this by combining smaller, high-yield functional blocks, saving an estimated 30-40% in development costs.

3. What is the UCIe 3.0 standard, and why does it matter? The Universal Chiplet Interconnect Express (UCIe) 3.0 is an open industry standard that defines how dies communicate within a package. Released in 2026, it doubles data rates to 64 GT/s while maintaining ultra-low power consumption (0.75 pJ/bit). It is critical because it ensures interoperability, allowing manufacturers to mix and match IP from different vendors without relying on proprietary interfaces.

4. How does 2.5D packaging differ from 3D packaging? In 2.5D packaging, dies are placed side-by-side on a silicon interposer, which acts as a foundational communication bridge. In 3D packaging, dies are stacked vertically on top of one another using through-silicon vias (TSVs) or direct hybrid bonding. 3D packaging offers a smaller footprint and faster communication but introduces severe thermal management challenges.

5. What is the India Semiconductor Mission (ISM) 2.0? Announced in the Union Budget 2026-27 with a ₹1,000 crore outlay, ISM 2.0 is a strategic initiative to build a robust domestic semiconductor ecosystem in India. Moving beyond basic fabrication, it focuses on developing full-stack Indian IP, strengthening assembly and advanced packaging (ATMP/OSAT) capabilities, and creating an industry-ready workforce.

6. How do chiplets benefit high-performance computing (HPC) and AI? AI workloads require massive computational power and extreme memory bandwidth. Modular architecture allows manufacturers to place logic processors directly adjacent to High-Bandwidth Memory (HBM) using silicon interposers or 3D stacking. This ultra-close proximity allows data to travel at terabytes per second, minimizing latency and maximizing energy efficiency.

7. Are there any disadvantages or supply chain risks associated with chiplet technology? Yes. While individual die yields improve, assembling multiple dies using advanced packaging is incredibly complex and expensive. Thermal density increases significantly, requiring advanced cooling solutions. Furthermore, the supply chain is highly fragile, relying heavily on a few global OSAT vendors and susceptible to strict geopolitical trade restrictions.

8. What role does C-DAC’s DHRUV64 play in global technology? DHRUV64 is India’s first fully indigenous 64-bit dual-core microprocessor, developed by C-DAC. Based on the open-source RISC-V architecture, it eliminates the need for expensive proprietary licensing. While aimed at industrial and IoT applications, it serves as a critical stepping stone for Indian startups to achieve technological self-reliance in silicon design.

+ There are no comments

Add yours