Key Takeaways

- Expressive AI Speech: ElevenLabs’ new voice models (v3 TTS) support 70+ languages and allow inline emotion cues (e.g. [whispers], [laughs]), making synthesized speech sound remarkably human.

- Enterprise-Grade Tools: ElevenLabs also launched Scribe v2 transcription (90+ languages, context-aware), enabling IT firms to convert speech to text with what the company claims is record accuracy, backed by enterprise compliance certifications.

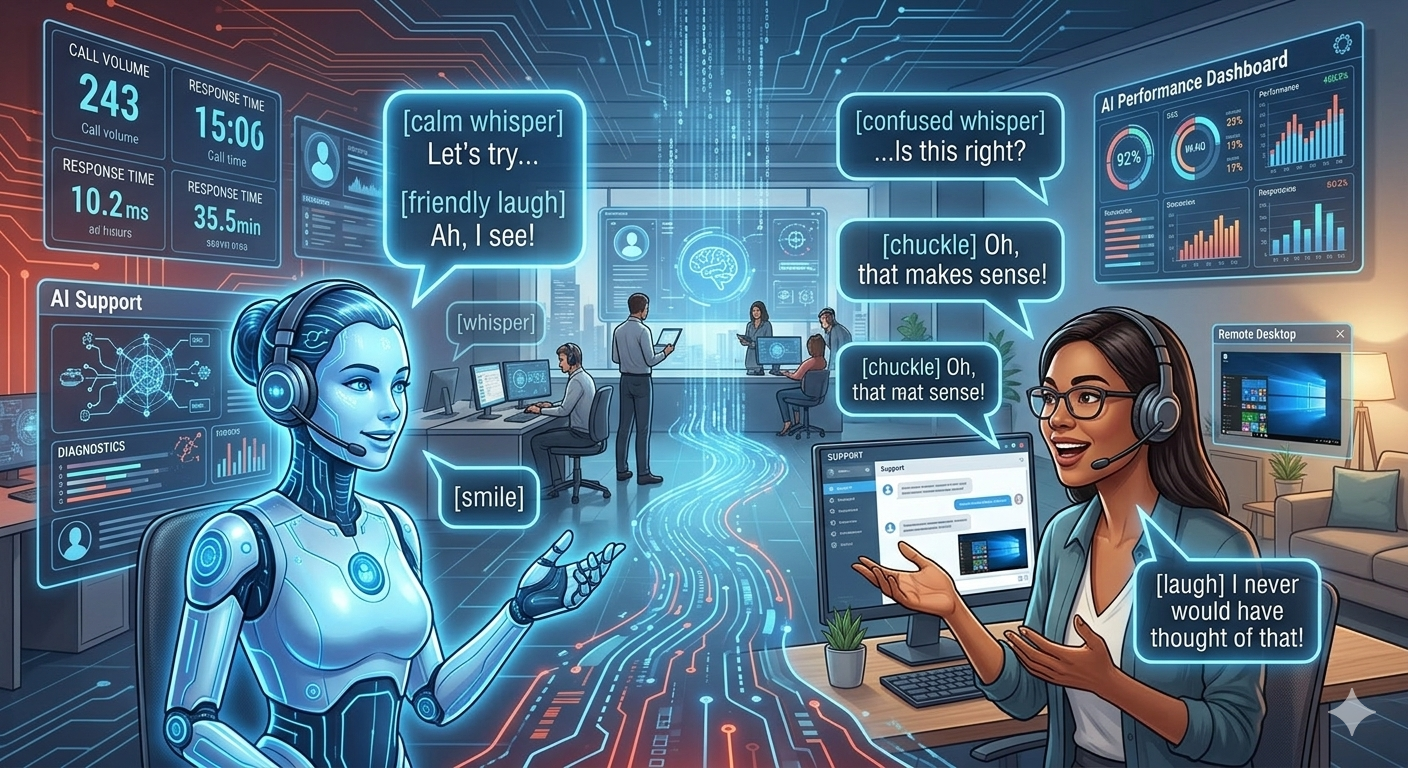

- Revolutionising IT Workflows: These tools are embedded in customer support, content creation, training, accessibility, and more, automating tasks, reducing costs, and improving user experience. One example is interactive voice agents that handle tech support calls.

- Ethical Use is Crucial: Powerful voice AI brings risks like scams and deepfakes. New IT rules, such as India's, require voice-cloning platforms to warn users about misuse. Organizations must implement safeguards such as consent checks and watermarking, and be transparent about how they use them.

- Global & Policy Impact: ElevenLabs’ rise (now an $11B AI unicorn) reflects how critical voice AI is for digital growth. Policymakers in India and elsewhere are updating laws to handle synthetic media, underscoring the need for responsible innovation.

Imagine logging into your customer support dashboard and hearing a natural, empathetic AI voice on the other end: one that understands context, seamlessly switches languages, and even expresses emotion. In 2026, this is no longer science fiction but reality, thanks to ElevenLabs’ cutting-edge voice AI technology. From expressive text-to-speech (TTS) and world-class transcription to advanced conversational agents, ElevenLabs has cemented itself as a leader in the voice AI revolution. Major tech companies and startups alike are adopting these tools to streamline processes, enhance user experiences, and tackle new use cases (like multilingual support and accessibility) that were impractical with older voice tech. In this article, we’ll explore how ElevenLabs’ voice tools are revolutionising the IT industry: why they matter now, their history, real-world examples, benefits, challenges, and what the future holds.

ElevenLabs was founded in 2022 with a single goal: humanizing digital voices. By 2026, it had raised $500M at an $11B valuation, reflecting investor confidence in its impact. Its co-founder describes the mission as building “the audio layer” of modern tech. Innovations like the Eleven v3 speech model, which supports 70+ languages and new inline “audio tags” for emotion (e.g. [whispers] or [sighs]), allow IT systems to speak with unprecedented realism. Meanwhile, Scribe v2 offers what ElevenLabs calls best-in-class transcription (90+ languages, ultra-low error rates). Together, these advances are more than incremental: they are reshaping how software, apps, and services take in voice input and produce voice output.

Why It Matters Today:

The COVID-era jump to remote work and the global rise of voice assistants have made natural speech interfaces a top priority for businesses. Customers expect help over the phone or chat that feels less robotic and more human. Internal IT teams see value in converting documentation and data into voice-first formats for training or accessibility. ElevenLabs’ tools meet these needs head-on. For example, by 2026, many companies use ElevenLabs voices to generate corporate training videos, create customer service voicebots, and even produce multilingual podcasts on the fly. Picture an AI agent that not only answers a support query but does so in an appropriate tone, clearly, and in the caller’s native language. The result: faster issue resolution and higher satisfaction. Demand is strong. According to a recent Reuters report, ElevenLabs’ 2025 revenue topped $330M, and the company plans to double that in 2026. This surge shows a fundamental shift: voice AI is no longer a novelty; it’s core IT infrastructure for forward-thinking organizations.

Historical Background:

To appreciate ElevenLabs’ impact, we should trace the arc of voice technology. Early text-to-speech (TTS) systems in the 1990s were famously monotone and robotic. Advances in deep learning in the 2010s brought more natural voices, but even Google’s and Amazon’s assistants still lacked true expressiveness (limited emotion, few languages). By the early 2020s, TTS sounded much better, yet it was mainly used in navigation apps or basic assistants. ElevenLabs burst onto the scene in 2023, a year after its founding, by using powerful generative AI to capture subtle human speech patterns. Its initial models “didn’t sound robotic; they sounded human”. In the years that followed, ElevenLabs built out voice cloning, a developer API, and livestream translation (via partnerships). Key milestones include launching ElevenAgents, a platform for deploying conversational AI that can both “hear” and “speak” with emotion, and corporate features like voice libraries for different use cases (radio hosts, support agents, etc.). Essentially, what began as “just TTS” grew into a full-stack audio ecosystem.

ElevenLabs v3: Expressive Text-to-Speech:

In March 2026, ElevenLabs announced Eleven v3, its most advanced TTS model to date. The model supports over 70 languages and introduces inline “audio tags” that control delivery (e.g., [excited], [whispers], or [sighs] written directly into the script). These tags let creators script emotional nuance: a virtual assistant can sound genuinely concerned, or a narrator can convey excitement and surprise. According to the company, Eleven v3 voices “sigh, whisper, laugh, and react — producing speech that feels genuinely responsive and alive”. Crucially, v3 greatly reduces mispronunciation. According to ElevenLabs, its error rate on reading phone numbers, formulas, and coordinates dropped by over 68%. In practice, IT applications can read out technical data (like code or logs) more reliably, without mangling numeric sequences into the wrong words.

This leap in quality enables new use cases: voiced documentation (e.g., meeting minutes read aloud), interactive audio help on demand, and AI dubbing in multiple languages with the correct accent and context. For developers building voice-powered features, v3 is a game-changer with one caveat. As the ElevenLabs blog puts it, v3 is “breathtaking” for media but currently still too slow for instantaneous chat, so real-time tasks are better served by faster models like Eleven Flash v2.5.
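The split described above can be captured in a small routing helper: v3 handles expressive, pre-rendered audio, and a faster model handles live conversation. This is a minimal sketch, not ElevenLabs' SDK. The model ID strings, the tag allowlist, and the assumption that the faster model should receive tag-free text are all illustrative assumptions. Check ElevenLabs' documentation for the real identifiers and tag behavior.

```python
import re

# Assumed model IDs, for illustration only; confirm them in ElevenLabs' docs.
EXPRESSIVE_MODEL = "eleven_v3"         # slower, supports inline audio tags
REALTIME_MODEL = "eleven_flash_v2_5"   # faster, for live conversation

# House-style allowlist (an assumption): the tags this team has approved
# for its brand voice, not an exhaustive list of what v3 understands.
APPROVED_TAGS = {"excited", "whispers", "sighs", "laughs"}

# Matches lowercase bracketed tags such as "[whispers]".
TAG_PATTERN = re.compile(r"\[([a-z ]+)\]")


def unapproved_tags(script: str) -> list[str]:
    """Return bracketed tags in the script that are not on the allowlist."""
    return [tag for tag in TAG_PATTERN.findall(script) if tag not in APPROVED_TAGS]


def strip_tags(script: str) -> str:
    """Remove audio tags, e.g. before sending text to a model assumed not to use them."""
    return re.sub(r"\s*\[[a-z ]+\]\s*", " ", script).strip()


def prepare_request(script: str, realtime: bool) -> tuple[str, str]:
    """Pick a model for the task and return (model_id, text to synthesize)."""
    bad = unapproved_tags(script)
    if bad:
        raise ValueError(f"unapproved audio tags: {bad}")
    if realtime:
        return REALTIME_MODEL, strip_tags(script)
    return EXPRESSIVE_MODEL, script
```

Keeping the routing decision in one function means that only one place needs to change when the model lineup or the approved tag set changes.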

Scribe v2: Best-in-Class Speech-to-Text:

On the other side, ElevenLabs has become a heavyweight in transcription. In early 2026, it launched Scribe v2, calling it “the most accurate transcription model ever released”. Scribe v2 supports 90+ languages and targets very low error rates with techniques like Keyterm Prompting, which lets users supply specialized vocabulary (brand names, acronyms, code) ahead of time. ElevenLabs’ own benchmarks show Scribe v2 achieving the lowest word error rate on industry-standard test sets. For businesses, this reliability is huge. Global IT teams often hold meetings that mix English with other languages or heavy jargon, and Scribe v2 can automatically detect and transcribe mixed-language audio. It also provides entity detection and exact timestamps for names, dates, or sensitive information, which is invaluable for compliance and review.

In 2026, enterprises are using Scribe v2 to transcribe training sessions, customer calls, and even conference streams at scale. Because it is GDPR and SOC 2 compliant, companies use it for everything from automating call center logs to building searchable archives of voice records. By converting conversations into accurate text quickly, Scribe v2 streamlines workflows: developers can plug it into call centers so that every call leaves a searchable record. According to ElevenLabs, Scribe v2’s improved word accuracy also makes subtitle and caption production much faster, saving media teams many hours.
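Claims such as “lowest word error rate” rest on a standard metric. WER is the number of word substitutions, deletions, and insertions needed to turn a system's transcript into a reference transcript, divided by the number of reference words. The sketch below computes it with a word-level edit distance. It is a generic illustration, not ElevenLabs' benchmarking code. Published benchmarks also apply their own text normalization (punctuation, numbers, casing), which this version reduces to simple lowercasing.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / number of reference words,
    computed with a word-level Levenshtein (edit distance) table."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must not be empty")
    # prev[j] = edit distance between the reference words seen so far
    # and the first j hypothesis words (one rolling row of the DP table).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from hypothesis
                curr[j - 1] + 1,     # insertion: extra word in hypothesis
                prev[j - 1] + cost,  # substitution (or match when cost is 0)
            )
        prev = curr
    return prev[-1] / len(ref)
```

For instance, `word_error_rate("reset the vpn token", "reset the v p n token")` returns 0.75. A single acronym split into letters costs three errors against a four-word reference, which is the kind of mistake keyterm prompting is meant to prevent.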

ElevenAgents & Conversational Voice AI:

Beyond transcription, ElevenLabs has invested heavily in conversational AI. Its ElevenAgents platform allows developers to build “voice agents” that go far beyond scripted chatbots. These agents can handle voice and text and integrate with documents or APIs in real time. For instance, a tech support agent built on ElevenAgents can not only listen and respond in a natural voice, but also query the knowledge base, update tickets, or schedule an engineer mid-conversation. Behind the scenes are innovations like “turn-taking models” that manage interruptions and let the AI pause and listen, making dialogues feel natural. Companies are already using these agents in customer service and HR. For example, the language-learning platform Tutore now uses conversational agents for placement interviews, reportedly cutting the time they take in half. ElevenLabs expects voice agents to become ubiquitous, not only replacing phone systems but also appearing in smart TVs, cars, and business dashboards. For enterprise deployments, ElevenLabs has also built guardrails designed to reduce the risk of agents saying something inappropriate. All these developments position ElevenLabs as more than an audio tool company: it is enabling a voice-driven interface for IT systems.
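The tool-using, hand-off-capable behavior described above can be sketched as a per-turn dispatcher. This is a hypothetical illustration, not the ElevenAgents API. It assumes intent recognition happens upstream (for example, in the agent's language model). The tool names, placeholder functions, and two-failure hand-off threshold are invented for the example. Turn-taking and audio handling are out of scope.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentSession:
    """Hypothetical per-call state for a voice support agent."""
    failed_turns: int = 0
    log: list[str] = field(default_factory=list)


def search_kb(text: str) -> str:
    # Placeholder for a knowledge-base lookup.
    return f"KB article for '{text}'"


def update_ticket(text: str) -> str:
    # Placeholder for a ticketing-system update.
    return f"Ticket updated: {text}"


# Map of intents (assumed to come from an upstream model) to tool functions.
TOOLS: dict[str, Callable[[str], str]] = {
    "search_kb": search_kb,
    "update_ticket": update_ticket,
}

MAX_FAILED_TURNS = 2  # assumed threshold before escalating to a human


def handle_turn(session: AgentSession, intent: str, text: str) -> str:
    """Dispatch one caller turn to a tool, or hand off to a human agent."""
    # Escalate if the caller asks for a person or the agent keeps failing.
    if intent == "human" or session.failed_turns >= MAX_FAILED_TURNS:
        session.log.append("handoff")
        return "HANDOFF: transferring you to a human agent."
    tool = TOOLS.get(intent)
    if tool is None:
        # Unrecognised request: count the failure and ask the caller to rephrase.
        session.failed_turns += 1
        session.log.append(f"unrecognised:{intent}")
        return "Sorry, could you rephrase that?"
    session.log.append(intent)
    return tool(text)
```

Escalating after repeated misunderstandings is one way to guarantee the quick hand-off to a live agent that this article recommends, without relying on the caller to ask for it.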

Benefits and Advantages:

- Hyper-realistic Voices: ElevenLabs’ AI models deliver voices so lifelike that users often can’t tell them apart from humans. This level of realism creates more engaging user experiences in apps and services. (Imagine a GPS app that speaks with emotion or an audiobook that conveys suspense.)

- Time and Cost Savings: Creating custom voice content traditionally required studio recordings. Now, a company can generate an entire training video with one tool in minutes. Some audiobook publishers, for instance, report cutting production time by 90% using ElevenLabs voices compared to hiring voice actors.

- Multi-Language Support: Serving global markets is simpler. ElevenLabs’ 70+ language support means a single IT team can generate support content in dozens of languages without multiple voice talent contracts. The technology also supports regional accents and dialects.

- Accessibility: By quickly generating high-quality audio from text, ElevenLabs tools help make digital content accessible (e.g., audiobooks for the visually impaired or voice interfaces for hands-free control). This aligns with inclusive design best practices.

- Integration and Scalability: ElevenLabs offers robust APIs and SDKs. As noted in developer guides, businesses can integrate TTS, transcription, and voice agents into existing apps seamlessly. The platform scales to enterprise loads and complies with security standards (HIPAA, GDPR, ISO 27001), so it can be used even in healthcare and finance applications.

- Innovation Platform: For R&D teams, continuous model improvements mean new capabilities every few months. For example, the early 2026 upgrade to v3 dramatically improved speech accuracy (e.g., reading telephone numbers correctly instead of garbling them), enabling new use cases like voice-driven coding assistance.

Challenges and Risks:

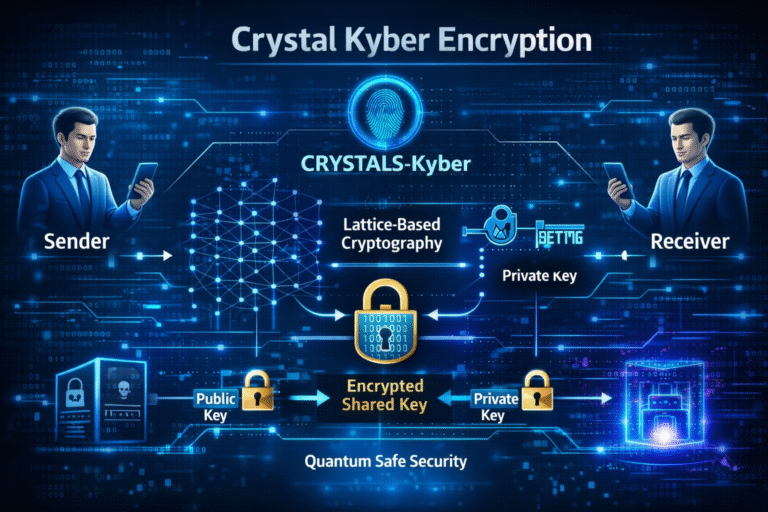

- Misuse & Ethics: The same voice cloning technology that enables convenient features can also enable fraud. Scammers have used AI to impersonate relatives’ voices in “emergency” calls. UNESCO warned that synthetic voices are so convincing that even experts can’t reliably detect fakes. For IT leaders, this means strict access controls are needed. In response, regulations are evolving: India’s IT rules now require voice-cloning platforms to warn users that misuse “for deception/impersonation may attract legal action”. Companies must also consider user consent and rights when cloning real people’s voices.

- Overreliance on Automation: Highly automated voice agents may frustrate customers if not carefully designed. A common pitfall is tunnel vision: for example, an AI agent stuck on a fix-it script might fail to solve a novel issue. Thus, enterprises must balance automation with human oversight – e.g., ensuring there’s always a quick hand-off to a live agent when needed.

- Quality Variability: Even the best AI can misunderstand accents or struggle with background noise. Although Scribe v2 is excellent, extremely noisy or specialized audio can still cause errors that require human review. Similarly, ElevenLabs’ TTS models may occasionally mispronounce rare names, especially in real-time use. IT teams need processes for catching and correcting such errors.

- Data Privacy: Voice data is personal. Organizations adopting these tools must comply with privacy laws (e.g., GDPR or India’s DPDP Act) when recording or transcribing calls. ElevenLabs’ compliance certifications (SOC 2, etc.) help, but end-to-end policies are a shared responsibility.

- Vendor Lock-In: Building entire systems around ElevenLabs has benefits, but reliance on any single AI provider carries risk. Businesses should plan for contingencies (e.g., pricing changes, or alternative platforms) and monitor the competitive landscape.
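The consent and access-control points in the first bullet can be enforced with a simple gate in front of any voice-cloning request. This Python sketch is hypothetical. `ConsentRecord`, the `voice_admin` role, and the validity-window rules are illustrative assumptions, not part of any ElevenLabs product. A real system would load records from an audited store.

```python
from dataclasses import dataclass
from datetime import date

# Assumed role name for illustration; map this to your own access-control system.
CLONING_ROLES = {"voice_admin"}


@dataclass(frozen=True)
class ConsentRecord:
    """Hypothetical record of a speaker's documented permission to clone their voice."""
    speaker_id: str
    granted_on: date
    expires_on: date
    revoked: bool = False


def may_clone(records: dict[str, ConsentRecord], speaker_id: str,
              requester_role: str, today: date) -> tuple[bool, str]:
    """Return (allowed, reason). The requester must hold an approved role, and
    the speaker must have unrevoked consent that is valid on the given date."""
    if requester_role not in CLONING_ROLES:
        return False, f"role '{requester_role}' may not clone voices"
    record = records.get(speaker_id)
    if record is None or record.revoked:
        return False, f"no active consent for speaker '{speaker_id}'"
    if not (record.granted_on <= today <= record.expires_on):
        return False, f"consent for '{speaker_id}' is not valid on {today.isoformat()}"
    return True, "ok"
```

Returning a reason string alongside the decision makes each refusal easy to log, which supports the audit trail regulators increasingly expect.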

Expert Insight: “Voice AI has entered the era of near-perfect realism,” says Dr. Priya Nair, an AI researcher. “ElevenLabs’ rapid improvements in expressiveness and multilingual support mean companies can treat speech as just another user interface. The result is more natural interactions and brand experiences.” Indeed, analysts note that ElevenLabs’ success has spurred competitors to innovate, benefiting the whole industry. As one tech strategist puts it, “When ElevenLabs raised its valuation to $11 billion, it signaled that natural-sounding voice technology is a cornerstone of digital transformation.”

Practical Tips:

- When implementing ElevenLabs TTS, start with scripted use cases (e.g., FAQs, help guides) before moving to live calls. This lets your team test voice style and accuracy gradually.

- Use audio tags in Eleven v3 to match brand personality. A cheerful support persona might use [laughs] or [warm], while a serious tutorial voice might use [neutral] tags.

- Leverage Scribe v2’s keyterm prompting to handle domain-specific vocabulary such as technical jargon. Build a list of product names and acronyms to feed into the model.

- Always include fallbacks: allow a human agent to interrupt or override the AI agent. This safety net boosts user trust in voice systems.

- For security: encrypt all voice data in transit and at rest, and limit internal access. Voice AI can be amazing, but it’s still part of your IT security surface.
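The keyterm tip above assumes that someone maintains the vocabulary list. A minimal Python sketch of that housekeeping step follows. It only merges and de-duplicates terms, and the `limit` cap is an assumed placeholder. How the finished list is passed to Scribe v2 depends on ElevenLabs' API, so check its documentation for the actual parameter and any size limit.

```python
def build_keyterms(*sources: list[str], limit: int = 100) -> list[str]:
    """Merge vocabulary lists (product names, acronyms, jargon) into one
    de-duplicated keyterm list, keeping the first-seen spelling and order.

    `limit` is an assumed cap, not a documented Scribe v2 value.
    """
    seen: set[str] = set()
    keyterms: list[str] = []
    for source in sources:
        for term in source:
            cleaned = " ".join(term.split())  # trim and collapse stray whitespace
            key = cleaned.casefold()          # "VPN" and "vpn" count as one term
            if cleaned and key not in seen:
                seen.add(key)
                keyterms.append(cleaned)
    return keyterms[:limit]
```

Keeping the first-seen spelling lets the product team's canonical list (for example, "CloudDesk" rather than "clouddesk") take precedence when it is passed in first.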

FAQ

Q: What is ElevenLabs and why is it important for IT?

ElevenLabs is an AI voice technology company that created advanced text-to-speech (TTS) and speech-to-text tools. Its models (like Eleven v3 TTS and Scribe v2 transcription) produce highly realistic, natural-sounding voices and very accurate transcripts. In IT and business, these tools are important because they let software speak and listen with human-like fluency. This means customer service bots can converse smoothly, documentation can be auto-voiced, and multilingual support becomes easy. Essentially, ElevenLabs is enabling developers to integrate speech interfaces into apps and systems that were previously text-only. Its technology streamlines processes and improves user experience by making voice interaction seamless.

Q: What are the key features of ElevenLabs’ voice tools in 2026?

By 2026, key features include: (1) ElevenLabs v3 TTS: Supports 70+ languages and uses “audio tags” like [whispers] or [laughs] in the script to convey emotion. This makes AI speech dynamic and expressive. (2) Scribe v2 Transcription: Best-in-class speech-to-text with multi-language support and advanced features like context-aware keyterm prompting, minimizing errors even on technical content. (3) Voice Agents Platform: A framework (ElevenAgents) to build conversational AI that can “talk, type and take action,” meaning it can perform tasks during a call. (4) Enterprise integration: Compliance with standards (SOC 2, HIPAA) and APIs for developers make it easy to embed these tools into any IT application. Together, these features let organizations adopt voice AI for customer support, training, accessibility and more.

Q: How does ElevenLabs v3 improve on previous voice models?

ElevenLabs v3 (released in early 2026) focused on expressiveness and accuracy. Compared to earlier models, v3 can emote – it can whisper, sigh, laugh or express excitement as directed by inline audio tags. It also dramatically reduces pronunciation errors: for example, it correctly reads complex sequences (like phone numbers or chemical formulas) far more reliably. In testing, v3 was preferred 72% of the time over the alpha version. The bottom line: v3 produces speech that sounds alive and contextually accurate, closing the gap between AI voices and real human narration.

Q: What is Scribe v2 and how does it help businesses?

Scribe v2 is ElevenLabs’ latest speech-to-text engine. It automatically transcribes audio with industry-leading accuracy (according to ElevenLabs, the lowest error rates on standard benchmarks) and supports over 90 languages. Businesses benefit by converting recorded content (meetings, calls, podcasts) into text reliably. This aids documentation, searchability, and accessibility. Scribe v2 offers smart features like detecting speakers, timestamping key terms, and recognizing multiple languages in one audio file. For example, an IT department using Scribe v2 can quickly caption global conference videos or archive customer service calls. This automation saves time and ensures nothing is “lost” in conversation.

Q: How are companies using ElevenLabs voice tools in practice?

Many IT and tech companies use ElevenLabs for: Customer support bots – voice assistants that answer queries 24/7; Content creation – auto-generating narration for videos and audiobooks; Training – converting tutorials into narrated courses; Accessibility – providing text-to-speech for the visually impaired; and Development tools – giving code or logs a natural-voice interface. For instance, a language-training startup named Tutore uses ElevenLabs voice agents to conduct interview practice, halving their onboarding time. Enterprises have integrated Scribe v2 to manage large call center logs, and media firms generate podcast series with ElevenLabs voices. These real-world cases show how voice AI is a productivity tool, not just a gimmick.

Q: What advantages does ElevenLabs have over traditional TTS solutions?

Compared to older TTS, ElevenLabs voices are far more natural and expressive. Traditional TTS often sounds flat and robotic; ElevenLabs’ AI captures nuances of emotion and timing. It also offers greater customization: developers can tweak tone, speed, and even emotional intent directly in the text. Moreover, ElevenLabs provides an all-in-one platform: voice generation, transcription (speech-to-text), and conversational agents, whereas older systems often required separate tools. ElevenLabs also stays ahead in languages and compliance – supporting dozens of tongues out-of-the-box and meeting stringent data-security standards. In short, it transforms TTS from a basic utility into a versatile medium for communication in IT.

Q: Are there security or ethical concerns with AI voice tools?

Yes, as with any powerful technology. AI voice cloning can be misused for deepfake scams or impersonation. For example, UNESCO reports that voice-cloning has enabled fraudsters to mimic relatives’ voices, tricking victims into sending money. In response, new regulations require clear warnings: India’s IT rules now say a “voice cloning tool must warn users that misuse for deception… may attract legal action”. Companies using these tools must implement safeguards: only clone voices with permission, monitor for abuse, and secure user data. On the positive side, these concerns have led to built-in protections: ElevenLabs and other providers watermark or log AI voices for traceability. The key is balancing innovation with responsibility – ensuring AI voices enrich services without undermining trust.
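The traceability logging mentioned above can be as simple as a tamper-evident record for each generated clip. This sketch uses Python's standard `hashlib` and `hmac` modules to fingerprint the audio and sign the record with a secret key. It illustrates logging only, under stated assumptions. It is not an audio watermark and not how ElevenLabs implements its protections. Field names such as `voice_id` and `requester` are hypothetical.

```python
import hashlib
import hmac
import json


def provenance_entry(audio: bytes, voice_id: str, requester: str, key: bytes) -> dict:
    """Build a signed log entry for one generated clip.

    This is a record kept alongside the audio, not a watermark embedded in it.
    """
    entry = {
        "sha256": hashlib.sha256(audio).hexdigest(),  # fingerprint of the clip
        "voice_id": voice_id,
        "requester": requester,
    }
    payload = json.dumps(entry, sort_keys=True).encode()
    entry["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return entry


def verify_entry(entry: dict, audio: bytes, key: bytes) -> bool:
    """Check that the entry is unmodified and matches the given audio bytes."""
    unsigned = {k: v for k, v in entry.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return (hmac.compare_digest(expected, entry.get("signature", ""))
            and unsigned.get("sha256") == hashlib.sha256(audio).hexdigest())
```

Because the signature covers every field, verification fails if someone edits the requester or swaps in different audio. The record only means something if the key is kept out of the code, for example in a secrets manager.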

Q: What future trends can we expect in voice AI?

Looking ahead beyond 2026, voice AI is likely to get even smarter and more ubiquitous. We expect real-time multilingual translation, so a conversation can seamlessly switch languages. AI voices will also get better at “social cues,” understanding emotion and sarcasm. Voice agents will become always-available colleagues, integrated into every IT service (think voice-controlled coding assistants or system monitoring bots). On the technical side, models will become lighter for offline use and more privacy-focused (voice data staying on-device). As ElevenLabs continues R&D, features like improved voice cloning customization and advanced dialect support will emerge. Industry analysts predict that within a few years, interacting by voice will be as common as typing, and companies using these technologies early will lead the pack in customer engagement.

Q: How does ElevenLabs fit with regulations and industry standards?

ElevenLabs has positioned itself as a compliant enterprise partner. For example, Scribe v2 and its API comply with SOC 2, ISO 27001, and even HIPAA for healthcare audio. ElevenLabs offers data residency options (servers in the EU and India) and a “zero retention” mode to delete voice data immediately after use. This addresses corporate and legal concerns. On regulation, ElevenLabs voices must be used in line with laws; as noted, many governments now categorize synthetic voice as sensitive content. ElevenLabs typically incorporates consent prompts and watermarking. In practice, IT departments using their tools must also follow policies (e.g., check if a caller consented to be recorded). The technology is robust, but responsible adoption requires governance, which savvy IT managers plan for when deploying AI voice.

Q: What impact does ElevenLabs have on jobs and skills in IT?

Voice AI will certainly change certain roles. Routine tasks like transcribing meetings or creating voiceovers can be automated, freeing staff for creative or analytical work. However, new jobs will grow: “voice UI designers,” conversational AI developers, and voice data analysts (to fine-tune these models). IT professionals will need to learn how to integrate voice APIs, curate voice content, and ensure ethical use. Companies should invest in training: for instance, teaching support staff to handle handoffs from AI agents. In broader terms, ElevenLabs and similar tools shift the skillset toward AI literacy. The Indian government’s AI initiatives (like the Manav vision) already emphasize developing talent in emerging AI fields, and voice AI is a big part of that story.
